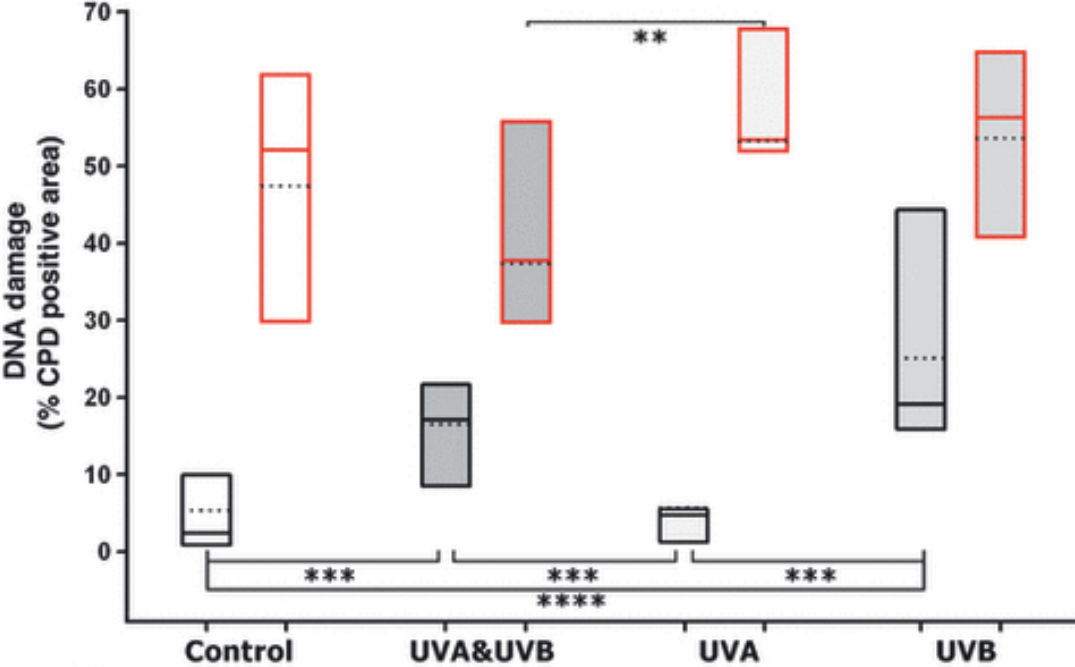

Published on February 17, 2026 9:34 PM GMT

Companies send employees to systems theory courses to sharpen their skills in designing high-load systems. Ads pop up for systems-thinking courses claiming it’s an essential quality for leaders. Even some children’s toys have labels saying “develops systems thinking”. “An interdisciplinary framework for analyzing complex entities by studying the relationships between their components” sounds like excellent bait for a certain kind of person.

I happen to be one of those people. Until recently, I’d only encountered fragmented bits of this discipline. Sometimes those bits made systems theory seem like a deep trove of incredibly useful knowledge. But other times the ideas felt flimsy, prompting questions like “Is that all?” or “How do I actually use this?”.

I didn’t want to make a final judgment without digging into the topic properly. So I read Thinking in Systems (Donella Meadows) and The Art of Systems Thinking (Joseph O’Connor, Ian McDermott), a couple of books that apply the principles of systems thinking, and several additional articles to finally form an honest impression. I hope my research helps you decide whether it’s worth spending time on courses and books about systems thinking.

TL;DR: 5/10. In-depth research is probably not worth your time, unless you want to obtain a bunch of loose heuristics which you probably already know and which are hard to put to use.

An Example System for Analysis

To make the critique more concrete, let me show a short example of the kinds of systems discussed in the books above.

A system (per Meadows) is a set of interconnected elements, organized in a certain way to achieve some goal. If you’re thinking this definition describes almost anything, you’re absolutely right. Systems theory academics aim to develop a field whose laws could describe both a single organism and corporate behavior or ecological interactions in a forest.
This is allegedly the power of systems theory but, as you’ll soon see, also its weakness.

Systems theorists suggest decomposing any complex system into its constituent stocks. These can be material (“gold bars,” “salmon in a river,” “battery energy reserve”) or immaterial (“love,” “patience”). Stocks are connected by flows of two types: positive, where an increase in one resource increases another, and negative, where an increase causes a decrease.

(This is not the only way to define “system” or “relationships in a system,” but it’s the clearest one and the easiest to explain. Systems theory isn’t limited to such dynamic systems. The text below doesn’t lose much by focusing on this type: static systems have roughly the same issues.)

Suppose we want to represent interactions between animals and plants in the tundra. Wolves reduce the number of reindeer, and reindeer reduce the amount of reindeer lichen. This can be expressed with the following diagram:

There are negative connections between wolves and reindeer, and between reindeer and lichen. Almost all systems include time delays. For example, here wolves can’t immediately eat all the reindeer.

A system may contain feedback loops: cases where a stock influences itself. These loops can also be positive (increasing the stock leads to further increase) or negative (increase leads to decrease). If we slightly complicate the previous example to include reproduction, we get something like this:

The larger the population of wolves (or reindeer, or lichen), the more newborns appear, again with a delay. This is a positive feedback loop.

For simplicity, influence is usually assumed linear: more entities on one side of an arrow lead to proportionally greater influence on the other side. But in general there are no constraints on transfer functions: they can be arbitrary.
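
As a rough illustration of the stocks and links described so far, here is a minimal Python sketch of three stocks connected by linear flows. The function name `simulate` and every coefficient in it are made up for illustration and do not come from the books. The one-step update stands in for the time delay, and food availability is not modeled, so nothing limits the wolves here.

```python
def simulate(steps, wolves=10.0, reindeer=100.0, lichen=1000.0,
             birth_rates=(0.02, 0.05, 0.03), predation=0.5, grazing=0.4):
    """Advance three stocks with linear flows; every coefficient is hypothetical."""
    wolf_birth, reindeer_birth, lichen_growth = birth_rates
    history = [(wolves, reindeer, lichen)]
    for _ in range(steps):
        w, r, l = history[-1]  # flows react to the previous step (a crude delay)
        # Positive feedback loops: each stock grows in proportion to itself.
        # Negative links: wolves drain reindeer, reindeer drain lichen.
        next_w = w + wolf_birth * w
        next_r = max(0.0, r + reindeer_birth * r - predation * w)
        next_l = max(0.0, l + lichen_growth * l - grazing * r)
        history.append((next_w, next_r, next_l))
    return history


if __name__ == "__main__":
    for step, (w, r, l) in enumerate(simulate(5)):
        print(f"step {step}: wolves={w:.1f} reindeer={r:.1f} lichen={l:.1f}")
```

Calling `simulate(1, wolves=0.0)` and `simulate(1, wolves=10.0)` side by side shows the negative link directly: the run with wolves ends the step with fewer reindeer.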
Let’s add another layer: the amount of available food influences population survival.

When there are more reindeer than wolves, it has no direct effect on the wolves. But when there are fewer, wolves starve and die off. That’s an example of negative feedback: the wolf population indirectly regulates itself. (Even though only one side of the difference matters, the arrow is still marked as positive: the further below zero the difference gets, the fewer wolves or reindeer remain.)

Such systems can show very complex behavior due to nonlinear transfer functions and delays. For example, in an ecological system like this one, you can predict population waves:

1. Wolves grow in number while they have enough food
2. They hunt too many reindeer
3. Wolf numbers then crash because there’s nothing left to eat
4. Reindeer recover without predators
5. Wolves recover because reindeer recover
6. Cycle repeats

If the lichen is abundant and grows fast enough, you can predict oscillations in its quantity as well (driven by reindeer population swings), but much less pronounced.

Real systems are far more complex. There are other animals and plants besides wolves, reindeer, and lichen. You’d also want to consider non-biological resources like soil fertility and water. Systems theory encourages limiting a system’s scope sensibly based on the question at hand.

Systems theory says: “Let’s see how such systems behave dynamically! Let’s examine many such systems and find common patterns or similarities in their structure!” Systems thinking is the ability to maintain such mental models, watch them evolve, and spot structural parallels across domains. Don’t confuse this with the systematic approach, which is about implementing solutions deliberately rather than chaotically.
Systems thinking fuels the systematic approach, but they’re not the same thing.

The methodology sounds great, but there’s a problem.

Precise Modeling Doesn’t Work in Systems Theory

Let’s try applying it to something concrete.

Consider a system representing the factors inside and around a person trying to lose weight:

- A person has a normal weight and excess weight (everything above normal).
- Weight increases depending on surplus food intake.
- A person eats more food when under stress.
- Among other things, stress is caused by excess weight. Stress generated per unit time is proportional to deviation from normal weight, but capped at a certain maximum.
- Excess weight also generates determination to start losing weight. Determination is proportional to the deviation from normal weight, but without a maximum. If the person drops below some initial threshold, determination can decrease.
- Once determination reaches a sufficient level, the person starts going to the gym.
- Each gym visit reduces weight.
- But each gym visit also “costs” some determination. When determination runs out, the person stops exercising.

A simple system: one positive feedback loop (weight → stress → weight) and two negative loops (weight → determination → weight, and determination → gym visits → determination). For simplicity, assume stress arises only from excess weight and not from random external events.

Now let’s test your systems thinking. Suppose at some moment an external stress spike hits the person. They overeat for a few days, deviating from equilibrium weight. What happens next?

- Weight rises above normal, then gym visits bring it back to baseline or below.
- Weight rises, oscillates a few times, then returns to baseline or below.
- Weight oscillates and slowly decreases to the starting point.
- Weight oscillates and slowly rises, maybe reaching an upper limit.
- Weight increases continuously in almost a straight line.

………

Correct answer: any of the above!

Simulation shows that depending on how much fat one gym visit burns, the graph can look like this:

(Charts from my quick system simulation program. Implementation details are not really important. The X axis represents time in days. The Y axis represents total weight.)

or this:

or this:

or like this:

or even like this:

Good luck estimating in your head when exactly one type of graph turns into another! And that’s just one parameter. The system has several:

- How much fat one gym visit burns
- How much determination a gym visit costs
- How much stress each unit of excess weight produces
- How much determination each unit of excess weight produces
- The maximum stress excess weight can generate

By varying parameters, you can achieve arbitrary peak heights, oscillation periods, and growth rates. Systems theory cannot predict the system’s behavior, other than saying it will change somehow. In Thinking in Systems, Meadows gives a similar example involving renewable resource extraction with a catastrophic threshold. There too the system may evolve one way, or another, or a third way. Unfortunately, she doesn’t emphasize that this undermines the theory’s predictive power. What good is a theory that can’t say anything about anything?

Does Physics Suffer from the Same Problem?

An attentive reader might object that the same could be said about many things, for example about physics. Take a standard school RLC oscillation circuit:

How will the current behave after closing the switch? Depending on the resistor’s resistance, the capacitor’s capacitance, the inductor’s inductance, and the capacitor’s initial charge, the circuit can either oscillate or simply decay exponentially after hitting a single peak.
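
For the circuit, the boundary between the two regimes can at least be written down. The sketch below assumes an ideal series RLC circuit (the text does not say which topology it has in mind) and uses a hypothetical helper name, `rlc_regime`. It applies the textbook damping condition: the circuit oscillates when R/(2L) is smaller than 1/√(LC). The initial charge is left out because, in this idealized linear circuit, it only scales the amplitude.

```python
import math


def rlc_regime(resistance, inductance, capacitance):
    """Classify an ideal series RLC circuit (assumed topology).

    Returns ("oscillating", period_in_seconds) when underdamped,
    otherwise ("non-oscillating", None).
    """
    damping = resistance / (2.0 * inductance)            # alpha, 1/s
    natural = 1.0 / math.sqrt(inductance * capacitance)  # omega_0, rad/s
    if damping < natural:
        damped = math.sqrt(natural**2 - damping**2)      # omega_d, rad/s
        return "oscillating", 2.0 * math.pi / damped
    return "non-oscillating", None


if __name__ == "__main__":
    # Arbitrary example values: 1 mH, 1 uF, and two different resistors.
    print(rlc_regime(10.0, 1e-3, 1e-6))
    print(rlc_regime(100.0, 1e-3, 1e-6))
```

With these example values the switch between regimes happens at R = 2√(L/C), roughly 63 ohms, which is not a number most people would produce by intuition alone.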
Depending on the parameters and the initial charge, you can observe any amplitude and any oscillation period.

You can talk as much as you want about “physical thinking” and “physical intuition,” but even estimating the oscillation period of such a basic circuit in your head isn’t simple. So what, should we throw physics away because its predictive power isn’t perfect?

If only the fat-burning system had just this problem! The devil is in several non-obvious assumptions that make systems theory far less applicable in real life compared to physics.

Implicit Assumptions About Transfer Functions

Why do we assume that each gym visit burns the same amount of fat and determination? The dependency may be arbitrary. We can’t even be sure about the sign. A gym visit might actually increase the determination to lose weight!

Why do we assume stress translates linearly into extra food consumption? That transfer function may also be anything. Perhaps stress accumulates until it hits a threshold, after which a person goes into an “eating spree” to relieve it.

Implicit Assumptions About Links Between Nodes

- Gym visits affect food consumption: after working out, you tend to be hungrier.
- Gym visits also affect stress, and not in obvious ways. A workout might reduce stress… or cause it.
- Excess weight affects appetite: the more you weigh, the more you might feel like eating.
- Why is determination spent only on gym visits? A person may simply decide to eat less, converting determination directly into reduced food intake.

Implicit Assumptions About Splitting Nodes Into Subsystems and Connections to Other Systems

If earlier points hammered nails into the coffin of this model, then this one pours concrete over it.

- Gym visits don’t only burn fat: they also increase muscle mass. Muscles increase baseline calorie consumption and workout efficiency. They may also increase determination to continue training.
- Stress doesn’t appear out of thin air.
  - Work influences it. Gym visits, excess weight, and overall stress might influence work-related stress in unclear ways.
  - Health influences it. Exercise usually improves health.
    - But “health” is a complicated construct. Reducing it to a single scalar value is unfair. It’s a large system of its own. Some parts may improve with exercise, others may worsen.
  - Tasty food reduces stress.
    - But tasty food costs money, which can increase stress, though only if the person has financial problems.

And all of this still ignores the human ability to restructure the system and change its parameters.

In the end we get:

Yes, I mentioned earlier that systems theory encourages setting reasonable system boundaries. Now we just need to understand which boundaries are reasonable! If you believe John von Neumann, a theory with four free parameters can produce a graph of an elephant, and with five, make it wave its trunk. Here we have enough free parameters for an entire zoo.

Again: Doesn’t Physics Have the Same Problem?

A meticulous radio specialist may object again:

- A resistor’s resistance depends on temperature. When current flows through it, it heats up. The circuit’s oscillation frequency will change, not according to an obvious formula, but depending on the resistor’s material, shape, and ambient temperature.
- Some charge leaks into the air. The leakage rate depends on the surface area of the wires and air humidity. And capacitors also have self-discharge!
- Plus, some energy radiates away as radio waves. The radiation resistance of the inductor depends on its area. Physics classes usually ignore this and just give the total resistance.

This is all before accounting for problems caused by poor circuit assembly. Anyone who has done a school or university lab remembers how much effort it takes to make observations match theory! Reality has a disgusting, surprising amount of detail. It creeps with its dirty tentacles into any clean theoretical construction.
So how is this better than the systems-theory issues above?

Convergence of Theory and Reality

This time I disagree with my imaginary interlocutor. Physics handles real-world complexity far better. In practice nothing is simple, yes, but radio engineering formulas align very well with reality in the overwhelming majority of situations that interest us. Also, the number of corrections you need to apply is relatively small. People usually know when they’re stepping outside the “normal” domain and what correction to apply. If you take a few corrections into account, the model’s behavior approximates real-world behavior extremely well.

We can also keep adding nodes to the weight-loss system and make transfer functions more precise. But each new, more intricate model will still produce results drastically different from reality, and from each other. It would take hundreds of additional nodes and refined transfer functions to get even somewhat accurate predictions for a single specific person.

Complex models are sensitive. You might have heard about the well-known problem in weather prediction. Suppose we gather all the data needed to estimate future temperature, run the algorithm, and get a result. Now we change the data by the tiny amount allowed by measurement error and run the algorithm again. For a short time the predictions coincide, but they soon diverge rapidly. From almost identical inputs, weather forecasts for 4–5 days out may differ completely. Now imagine that we weren’t off by a tiny measurement error: we just guessed some values arbitrarily and ignored several factors to simplify the system. In political, economic, and ecological systems, the error isn’t a micro-deviation; it’s swaths of unknowns.

Pattern Matching Also Doesn’t Work in Systems Theory

Okay, maybe we can do qualitative comparisons rather than quantitative ones?
Although systems-theory books show pretty graphs and mention numerical modeling, they focus more on finding general patterns.

Unfortunately, this is possible only for the simplest, most disconnected models. Comparing systems of animals in a forest, a weight-loss model, and a renewable resource depletion model, you can squint and notice a pattern like: “systems with delays and feedback loops can exhibit oscillations where you don’t expect them.” That’s a very weak claim, but fine, it contains some non-trivial information. Anything else?

- “The strong get stronger”: in competitive systems with coupled positive feedback loops, the participant with the largest initial resource tends to win.
- “Tragedy of the commons”: individuals overuse a shared resource because personal gain outweighs collective harm.
- “Escalation”: competitors continuously increase their efforts to outrun each other.
- “External support kills intrinsic motivation.”

Systems theory is decent at illustrating these behavioral archetypes, but it is in no way necessary for discovering them. They come mostly from observing people, not from theoretical models. But let’s be generous and say that systems theory helps draw parallels between disciplines. What else?

The problem is that these are basically all the interesting dynamics you can get from a handful of nodes and connections. Adding more nodes just creates oscillations of more complex shapes; that’s quantitative, not qualitative. More complex transfer functions sometimes produce more interesting graphs, but such behavior resists interpretation and generalization.

And even if you do find an interesting pattern, how transferable is it? How sure are you that a similar system will show the same pattern? Real systems are never isolated; parasitic effects are everywhere and can completely distort the pattern.

That’s only considering parameter values, not model roughness. The simple forest-animal system ignores spatiality: real reindeer migrate.
The simple weight-loss system ignores many psychological effects. Yes, they’re similar if you abstract them enough. But as soon as you refine your mental model, the similarity disappears, and so do the patterns.

You might try narrowing the theory to some specific applied domain… but then it’s simpler to just use that domain’s actual tools. What’s the point of a theory of everything that can’t say anything concrete?

Systems Theory Experts Are Themselves Skeptical of It

I should say that Donella Meadows is much more honest than other promoters of systems theory. She is far less inclined to praise systems theory as some kind of super-weapon of rational thinking. In Chapter 7 of Thinking in Systems, she even writes:

> People raised in an industrial world, who enthusiastically embrace systems thinking, tend to make a major mistake. They often assume that system analysis (tying together vast numbers of different parameters) and powerful computers will allow them to predict and control the development of situations. This mistake arises because the worldview of the industrial world presumes the existence of a key to prediction and control. … To tell the truth, even we didn’t follow our own advice. We lectured about feedback loops but couldn’t give up coffee. We knew everything about system dynamics, about how systems can pull you away from your goals, but we avoided following our own morning jogging routines. We warned about escalation traps and shifting-the-burden traps, and then fell into those same traps in our own marriages. … Self-organizing nonlinear systems with feedback loops are inherently unpredictable. They cannot be controlled. They can only be understood in a general sense. The goal of precisely predicting the future and preparing for it is unattainable.[1]

I would have preferred to hear earlier that system analysis doesn’t help predict the future and provides few practical benefits in daily life. But fine.
So how am I supposed to interact with complex systems if I can’t predict anything?

> We cannot control systems or fully understand them, but we can move in step with them! In some sense I already knew this. I learned to move in rhythm with incomprehensible forces while kayaking down rivers, growing plants, playing musical instruments, skiing. All these activities require heightened attention, engagement in the process, and responding to feedback. I just didn’t think the same requirements applied to intellectual work, management, communicating with people. But in every computer model I created, I sensed a hint of this. Successful living in a world of systems requires more than just being able to calculate. It requires all human qualities: rationality, the ability to distinguish truth from falsehood, intuition, compassion, imagination, and morality.

Ah yes, moving in rhythm with systems and developing compassion and imagination, of course. A bit later in the chapter there is a clearer list of recommendations. But most of them I would describe as amorphous: all good things against all bad things. There’s a good heuristic for identifying low-information advice: try inverting it. If the inversion sounds comical because no one would ever give such advice, the original advice was too obvious. Let’s try:

- Make your mental models visible.
  - Never share with anyone how you reached any conclusion…
- Use language carefully and enrich it with systems concepts.
  - …and if cornered, be as vague and incoherent as possible.
- Acknowledge, respect, and disseminate information.
  - Distort, delay, and conceal information in the system however you like.
- Act for the good of the whole system.
  - Feel free to ignore or harm some people in the system.
- Be humble and keep learning.
  - Be overconfident. You don’t need to learn anything; you already know it all. If someone catches you in an error, just lie!
- Honor complexity.
  - Ignore complexity. Everything must be arranged in the simplest possible way.
- Expand your time horizons.
  - Ignore long-term consequences.
- Expand the boundaries of your thinking beyond your field.
  - Be interested only in your own domain.
- Stay curious about life in all its forms.
  - Be interested in only one tiny corner of life.
- Keep seeking improvement.
  - Stop trying to improve. Your current skill level (whatever it is) is enough.

Each piece of advice comes with an explanation that is supposed to add detail. I didn’t feel that they added much concrete substance. Ten pages can be compressed into: “Care about and appreciate all parts of a system. Try to understand the parts you don’t understand. Share your mental models honestly and clearly.”

Some of the advice is reasonable, like:

- Pay attention to what matters, not just what can be measured.
- Distribute responsibility within the system.
- Listen to the wisdom of the system (meaning: talk to the people at the lower levels and learn what they actually need and how they live).
- Use feedback strategies in systems with feedback loops.

…but even here, questions remain. Are systems with distributed responsibility always better than ones with centralized responsibility? Do people always understand what they need? The advice would benefit from much more specificity, as well as examples and counterexamples.

I’ll highlight the recommendation not to intervene in the system until you understand how it works. This is a simple but good idea and people do often forget it. Except, as we’ve already established, predicting the behavior of arbitrary complex systems is impossible.

In fact, the word “amorphous” describes both books quite well. They’re full of examples that either state the obvious, or boil down to “it could be like this or like that; we don’t know which in your case,” or both at once. For example:

> Some parts of a system are more important than others because they have a greater influence on its behavior. A head injury is far more dangerous than a leg injury because the brain controls the body to a much greater extent than the leg does. If you make changes in a company’s head office, the consequences will ripple out to all local branches. But if you replace the manager of a local branch, it is unlikely to affect company-wide policy, although it’s possible: complex systems are full of surprises.

Or:

> Replacing one leader with another (Brezhnev with Gorbachev, or Carter with Reagan) can change the direction of a country, even though the land, factories, and hundreds of millions of people remain the same. Or not. A leader can introduce new “rules of the game” or set a new goal.

The authors plow the sands, creating the impression they’re saying something profound. It feels like there wasn’t enough content to fill even a small book on systems thinking. It doesn’t help that they mix in knowledge from unrelated fields, even if it only barely fits the narrative. Maybe someone will find it interesting to read about precision vs accuracy, but outside the relevant subchapter in The Art of Systems Thinking, that information is never used again. The second and third chapters of that book especially are diluted with content weakly connected to systems theory. At least to its core: given how vague that concept is, you can stretch almost anything to fit, and the authors do.

Maybe the problem is with these particular books? Both are aimed at a mass audience. Maybe a deeper, more rigorous treatment would link the theoretical constructs to reality? I certainly prefer that approach. The knowledge would feel more coherent. But I’m still not convinced it would be useful.

As an example of deeper yet still popular books related to systems theory, I’d mention Taleb’s The Black Swan and Antifragile. They don’t present themselves as systems-theory books, but their themes resonate strongly with the field.
The main thesis of the first, expressed in systems-theory language, would be: “Large systems can experience perturbations of enormous amplitude due to tangled feedback loops. You cannot predict what exactly will trigger such anomalies: they can arise from the smallest changes.” The thesis of the second: “Highly successful complex systems aren’t merely protected from the environment; they have subsystems for recovery and post-traumatic growth. Lack of shocks harms them rather than strengthening them.” These books explore their themes deeply. But (and I’m not the only one noting this) they, too, provide little predictive power. Knowing about black swan events is interesting, but what good is it if the book itself says you can’t predict them? What good is it to know that people and organizations have “regeneration subsystems” if you can’t predict what will cause growth and what will simply weaken them? Again, they offer a moderately useful perspective on systems, but not a way to know when the described effects will manifest.

Reading academic work on systems theory seems like an endeavor with a bad effort-to-knowledge payoff. Wikipedia gives examples of systems theory claims. They too are either obvious but heavily phrased, or questionable. For instance:

> A system is an image of its environment. … A system as an element of the universe reflects certain essential properties of the latter.

Decoded:

The rest of the world influences the formation of systems living in it. Subsystems of each system reflect the elements of the world that matter to that system. Gazelles live in the savanna. So do cheetahs that hunt them, so gazelles have a subsystem for escaping (fast legs and suitable muscles).
Companies operating under capitalism must obtain and spend money according to certain rules, so they have a subsystem for managing cash flows: cash registers, sellers, accounting, acquisitions departments.

On the one hand, this thesis contains some information: it stops us from imagining animals with zero protection against predators and the environment. On the other hand, it constrains predictions far less than it seems. Besides gazelles, the savanna has elephants (too big for cheetahs), termites (too small), and countless birds (they fly). And do you really need systems theory to predict the absence of animals with no protection from predators at all?

Systems Theory Is Barely Used in Applied Fields That Cite It

It’s hard to speak for all fields that claim inspiration from systems theory. But the ones I know use little beyond borrowed prestige and the concepts of positive and negative feedback loops.

The last such book I read was Anna Varga’s Introduction to Systemic Family Therapy. It’s an excellent book: clearly written, and the proposed methods seem genuinely useful in family therapy. A short summary of systems theory and feedback loops gives it some gravitas. But the book barely uses the theory it references. Discussion of feedback loops in family systems occupies maybe four pages. Other systems-theory concepts appear rarely, mostly as general warnings like: “Remember that systems are complex and interconnected, and changes in one place ripple through the rest.” True, but what exactly should one do with that? “Systems theory” ends up meaning “let’s draw a diagram showing family members and connections.” Useful, but not deep at all.

Game-design books mention systems theory seemingly more often.
But again, it’s usually things like “beware of unbounded positive feedback loops that let players get infinite resources” or “changing one mechanic creates ripples throughout the game,” rather than deeper advice on creating interesting dynamics.

Imagine hearing about a revolutionary new culinary movement: cooking dishes using liquid nitrogen. Its founders claim it will change the world and blow your mind. They publish entire books about handling every specific vegetable and maintaining a -100°C kitchen. You visit one of these kitchens… and it’s basically a regular kitchen. Maybe three degrees colder, no open flame, lots of salads on the menu. You ask where the liquid nitrogen is. They say there is none, but there is some dry ice in a storage container, used occasionally for a smoky cocktail effect. That’s roughly the feeling I get when I see people cite systems theory.

A Silver Lining

To be fair, here are a couple of good things about systems theory and systems thinking.

First, the concepts of positive and negative feedback loops are excellent tools to have in your mental toolkit. Once you internalize them, you’ll see them everywhere.

Second, a large number of weak heuristics can occasionally add up to something useful. The ones I like most:

- A general sense of interconnectedness. Internalize the idea that any change in one part of a system sends ripples through all other nodes. This protects you from the naive optimism of “we’ll fix just this one thing and everything will immediately be fine.” A well-known psychological consequence: don’t expect to eliminate a bad habit or change a personality trait without changing yourself globally.
- Often, to change a system in one place, you must apply force in a completely different place. Without detailed knowledge, it’s hard to predict where exactly, but reminders not to bang your head against the same wall are useful. Psychologically, this aligns with the idea that to fix relationship problems, it’s often easier to change yourself rather than the other person, even if the other person is the problem.
- Systems often resist change. Stable systems are hard to restructure; easily restructured systems are unstable. It’s useful to remember this tradeoff.

Third, sometimes you do encounter a system simple enough and well-bounded enough that certain links clearly dominate. In those cases, you can act more confidently by dismantling old feedback loops and building new ones. System archetypes do occasionally help.

And most importantly: the cultural shift. You’ve heard the common argument for teaching math in schools, that it “puts your mind in order.” I’d say systems theory provides a similar benefit. Books on systems thinking encourage you to model the world rather than rely purely on intuition. Holding a coherent mental model of the world (even an incomplete one! even with unknown coefficients!) is a superpower many people lack. So if a systems-thinking book gets someone to reflect on causality around them and sketch a diagram with arrows, that’s already something good.

But it’s still not enough for me to justify studying systems theory to anyone.

Conclusion

Unfortunately, “systems theory specialist” now sounds to me like “specialist in substances.” Not in some specific substance, but in arbitrary materials in general. There just isn’t that much useful, non-obvious knowledge one can state about “substance” as such, without specifics.

If you say: “The holistic approach of systems theory states that the stability of the whole depends on the lowest relative resistances of all its parts at any given moment,” people will look at you with respect. It won’t matter that you said it in response to someone asking you to pass the salt at the table. This is the main practical benefit you can extract from systems-thinking books.

5/10.
Not useless, but mostly bait for fans of self-improvement books and for those seeking a “theory of everything.” My rating is probably affected by the fact that people near me praised it far too enthusiastically. Had it been less overhyped, I might have given it a 6/10. Maybe even 7/10, if someone someday writes a book that provides enough concrete examples of applying systems thinking in real life without fluff. Though I can barely imagine such a book.

The basic concepts are genuinely useful, which makes it easy to get hooked. But once hooked, you’ll spend a long time chasing after vague wisdom and illusory insights. I recommend it only if you want a big pile of heuristics about everything in the world but nothing about anything specific. And since you’re probably reading popular science for self-development, you likely already know most of these heuristics anyway.

[1] This is a translation of a translated text, so your English copy of the book will have different wording.

Review of the System Theory as a Field of Knowledge
