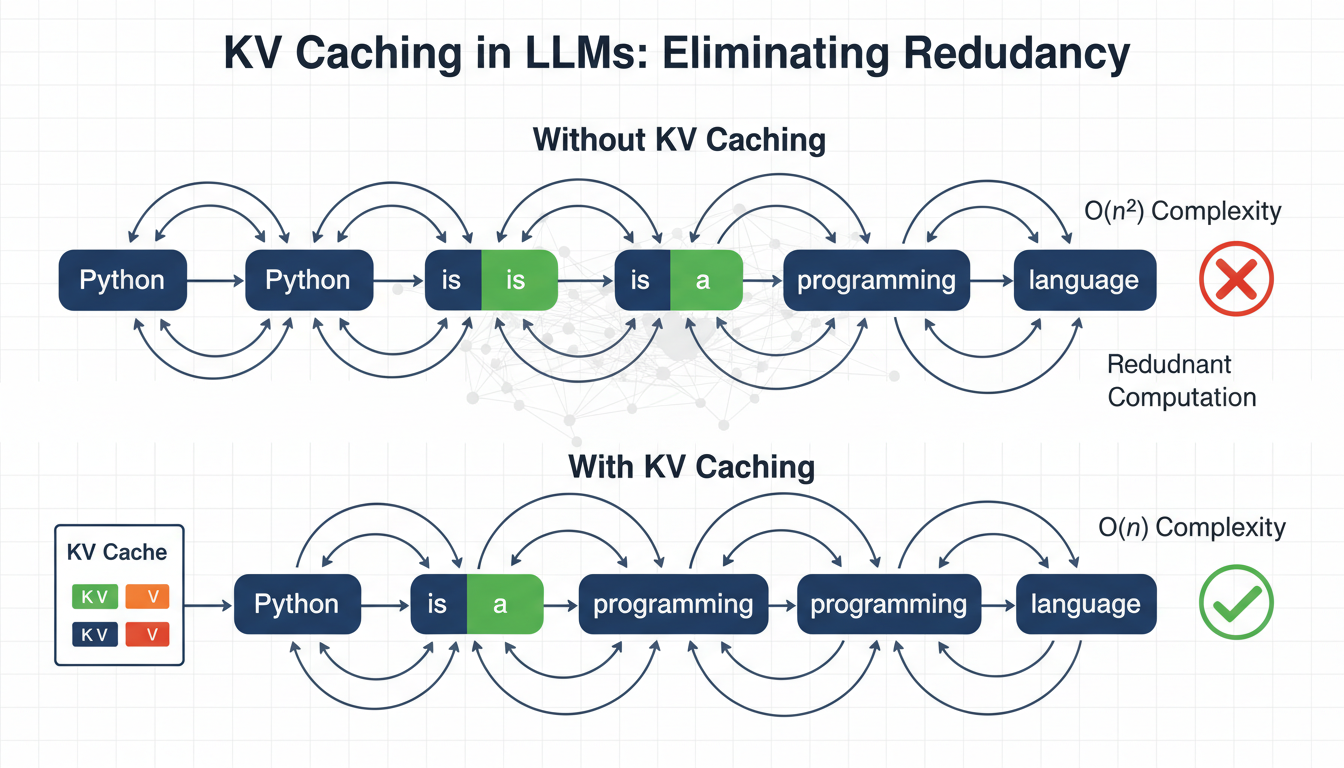

KV Caching in LLMs: A Guide for Developers

Language models generate text one token at a time. Without caching, each step reprocesses the entire sequence generated so far.
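To make the cost of that reprocessing concrete, here is a minimal sketch of one single-head attention layer in plain Python. The projection matrices `W_Q`, `W_K`, `W_V`, the toy `embeddings`, and the helper names are all hypothetical, chosen only for illustration; a real model has many layers and heads and uses a tensor library. The sketch compares recomputing keys and values for the whole prefix at every step against keeping them in a cache and projecting only the newest token.

```python
import math

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def attend(q, keys, values):
    """Scaled dot-product attention for a single query vector."""
    scale = math.sqrt(len(q))
    scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
    top = max(scores)  # subtract the max for a numerically stable softmax
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    dim = len(values[0])
    return [sum(w * v[i] for w, v in zip(weights, values)) / total
            for i in range(dim)]

# Hypothetical toy weights and token embeddings (assumptions, not real model data).
W_Q = [[1.0, 0.0], [0.0, 1.0]]
W_K = [[0.5, 0.1], [0.2, 0.4]]
W_V = [[0.3, 0.7], [0.6, 0.2]]
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [0.5, -0.5]]

def run_without_cache(tokens):
    """At every step, re-project keys and values for the whole prefix."""
    outputs, projected = [], 0
    for t in range(1, len(tokens) + 1):
        prefix = tokens[:t]
        keys = [matvec(W_K, x) for x in prefix]
        values = [matvec(W_V, x) for x in prefix]
        projected += len(prefix)  # tokens projected into K/V at this step
        outputs.append(attend(matvec(W_Q, prefix[-1]), keys, values))
    return outputs, projected

def run_with_cache(tokens):
    """Project only the newest token; reuse cached keys and values."""
    outputs, projected = [], 0
    keys, values = [], []  # the KV cache
    for x in tokens:
        keys.append(matvec(W_K, x))
        values.append(matvec(W_V, x))
        projected += 1
        outputs.append(attend(matvec(W_Q, x), keys, values))
    return outputs, projected

naive_out, naive_count = run_without_cache(embeddings)
cached_out, cached_count = run_with_cache(embeddings)
print(naive_count, cached_count)  # 10 4
```

Both runs produce the same attention outputs, but the uncached version projects 1 + 2 + 3 + 4 = 10 tokens into keys and values, while the cached version projects 4. The gap grows quadratically with sequence length, at the price of the memory needed to hold the cache.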