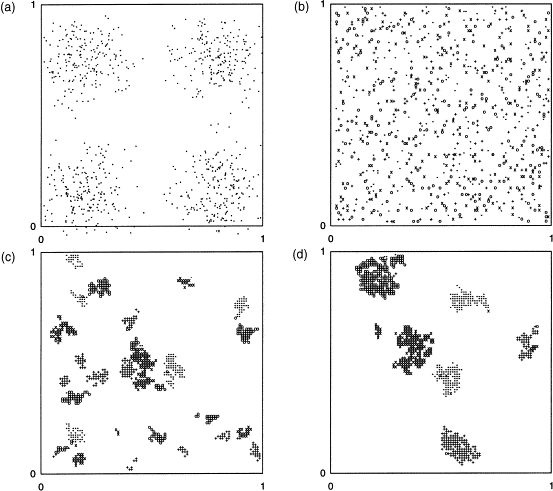

Post written up quickly in my spare time. TLDR: Anthropic have a new blogpost of a novel contamination vector of evaals, which I point out is analogous to how ants coordinate by leaving pheromone traces in the environment.One cool and surprising aspect of Anth’s recent BrowseComp contamination blog post is the observation that multi-agent web interaction can induce environmentally mediated focal points. This happens because some commercial websites automatically generate persistent, indexable pages from search queries, and over repeated eval runs, agents querying the web thus externalize fragments of their search trajectories into public URL paths, which may not contain benchmark answers directly, but can encode prior hypotheses, decompositions, or candidate formulations of the task. Subsequent agents may encounter these traces and update on them.From Anthropic: Some e-commerce sites autogenerate persistent pages from search queries, even when there are zero matching products. For example, a site will take a query like “anonymous 8th grade first blog post exact date october 2006 anxiety attack watching the ring” and create a page at [retailer].com/market/anonymous_8th_grade_first_blog_post_exact_date_… with a valid HTML title and a 200 status code. The goal seems to be to capture long-tail search traffic, but the effect is that every agent running BrowseComp slowly caches its queries as permanent, indexed web pages.Having done some work in studying collective behaviour of animals, I recognize this as stigmergy, or coordination/self-organization mediated by traces left in a shared environment, the same mechanism by which ants lay pheromone trails that other ants follow and reinforce. Each agent modifies the web environment through its search behavior; later agents detect those modifications and update accordingly. An example of stigmergy-inspired clustering algorithm, from Dorigo et al. (2000).A funny image taken from some lecture slides I saw several years ago.Some things to emphasize:This is a novel contamination vector, distinct from standard benchmark leakage. Traditional contamination involves benchmark answers appearing in training data, but this is more procedural, and arises from an unexpected interaction between agent search behavior and some shared web infrastructure. This a channel that doesn’t exist until agents start using it!This is distinct but probably related to eval awareness. This seems to differ in an important way from the more widely discussed eval-awareness behavior in the same report. It is not especially surprising that sufficiently capable models may learn to recognize “benchmark-shaped” questions, infer that they are being evaluated. It also seems possible to me as that as agents interact more and more with the open web, it may be more surprising/eval-like to the model to not include these fingerprints. Stigmergic traces as a whole are an environmental signal, rather than an inferential one; an agent may conclude different things based on the content and context of the trace. But the two likely compound, for now, I suspect seeing such traces makes the agent more convinced it’s in an eval.This gets worse over time, and the accumulation may be irreversible. As each evaluation run deposits more traces, and those traces get indexed, future agents encounter a richer residue of prior agent behavior. There is a very large attack surface here, where many many different things we don’t expect could be very persistent, and unlike training data contamination, which is a fixed stock, this is a flow that compounds — seems likely that the web has many more of these type things lurking!The overall thing may be a schelling point, quite easily. Likely, agents may converge on top of the stigmergic point; agents facing the same benchmark question are likely to generate similar search queries, because the question’s structure constrains the space of reasonable decompositions. This means the auto-generated URLs aren’t random noise but would likely cluster around a relatively small set of natural-language formulations of the same sub-problems. Once a few traces exist at those locations, they become focal points, salient not because of any intrinsic property but because they sit at the intersection of “where prior agents searched” and “where future agents are independently likely to search.” It would be interesting to know whether agents that encountered these clustered traces actually converged on the same search paths more quickly than agents that didn’t, but I don’t think the Anthropic post provides enough detail to say.There is a red-teaming/cybersecurity shaped paper here, of preemptively mapping and sketching out what those fingerprints might be, in order to understand how we can use them in evals, harnesses, and so on. And further more a generalisation over this: before deploying a benchmark, could one sweep its questions against major e-commerce search endpoints and other sites known to auto-generate pages, establishing a contamination baseline. And then monitor those endpoints over successive evaluation runs to track the accumulation of traces. More ambitiously (so the generalisation even further over that), you could characterize the full set of web services that create persistent artifacts from search queries — a kind of mapping of the “stigmergic surface area” — and use that to design benchmarks whose questions are less likely to leave recoverable environmental fingerprints, or to build evaluation harnesses that route searches through ephemeral/sandboxed environments that don’t write to the public web. Anth. found that URL-level blocklists were insufficient; understanding the generative mechanism behind the traces, rather than blocking specific URLs, seems like the more robust approach.What other places might leave such traces? We might also be interested in doing a top down imposition of what places might be ripe for leaving traces.Wikipedia edit histories and talk pagesGithub issues and PRsCached API responses and CDN conStored versions of webpages on Archive.orgGoogle Knowledge graph?Dependency metadata on rare packages (like on download counts on npm, or something)Discuss Read More

Emergent stigmergic coordination in AI agents?

Post written up quickly in my spare time. TLDR: Anthropic have a new blogpost of a novel contamination vector of evaals, which I point out is analogous to how ants coordinate by leaving pheromone traces in the environment.One cool and surprising aspect of Anth’s recent BrowseComp contamination blog post is the observation that multi-agent web interaction can induce environmentally mediated focal points. This happens because some commercial websites automatically generate persistent, indexable pages from search queries, and over repeated eval runs, agents querying the web thus externalize fragments of their search trajectories into public URL paths, which may not contain benchmark answers directly, but can encode prior hypotheses, decompositions, or candidate formulations of the task. Subsequent agents may encounter these traces and update on them.From Anthropic: Some e-commerce sites autogenerate persistent pages from search queries, even when there are zero matching products. For example, a site will take a query like “anonymous 8th grade first blog post exact date october 2006 anxiety attack watching the ring” and create a page at [retailer].com/market/anonymous_8th_grade_first_blog_post_exact_date_… with a valid HTML title and a 200 status code. The goal seems to be to capture long-tail search traffic, but the effect is that every agent running BrowseComp slowly caches its queries as permanent, indexed web pages.Having done some work in studying collective behaviour of animals, I recognize this as stigmergy, or coordination/self-organization mediated by traces left in a shared environment, the same mechanism by which ants lay pheromone trails that other ants follow and reinforce. Each agent modifies the web environment through its search behavior; later agents detect those modifications and update accordingly. An example of stigmergy-inspired clustering algorithm, from Dorigo et al. (2000).A funny image taken from some lecture slides I saw several years ago.Some things to emphasize:This is a novel contamination vector, distinct from standard benchmark leakage. Traditional contamination involves benchmark answers appearing in training data, but this is more procedural, and arises from an unexpected interaction between agent search behavior and some shared web infrastructure. This a channel that doesn’t exist until agents start using it!This is distinct but probably related to eval awareness. This seems to differ in an important way from the more widely discussed eval-awareness behavior in the same report. It is not especially surprising that sufficiently capable models may learn to recognize “benchmark-shaped” questions, infer that they are being evaluated. It also seems possible to me as that as agents interact more and more with the open web, it may be more surprising/eval-like to the model to not include these fingerprints. Stigmergic traces as a whole are an environmental signal, rather than an inferential one; an agent may conclude different things based on the content and context of the trace. But the two likely compound, for now, I suspect seeing such traces makes the agent more convinced it’s in an eval.This gets worse over time, and the accumulation may be irreversible. As each evaluation run deposits more traces, and those traces get indexed, future agents encounter a richer residue of prior agent behavior. There is a very large attack surface here, where many many different things we don’t expect could be very persistent, and unlike training data contamination, which is a fixed stock, this is a flow that compounds — seems likely that the web has many more of these type things lurking!The overall thing may be a schelling point, quite easily. Likely, agents may converge on top of the stigmergic point; agents facing the same benchmark question are likely to generate similar search queries, because the question’s structure constrains the space of reasonable decompositions. This means the auto-generated URLs aren’t random noise but would likely cluster around a relatively small set of natural-language formulations of the same sub-problems. Once a few traces exist at those locations, they become focal points, salient not because of any intrinsic property but because they sit at the intersection of “where prior agents searched” and “where future agents are independently likely to search.” It would be interesting to know whether agents that encountered these clustered traces actually converged on the same search paths more quickly than agents that didn’t, but I don’t think the Anthropic post provides enough detail to say.There is a red-teaming/cybersecurity shaped paper here, of preemptively mapping and sketching out what those fingerprints might be, in order to understand how we can use them in evals, harnesses, and so on. And further more a generalisation over this: before deploying a benchmark, could one sweep its questions against major e-commerce search endpoints and other sites known to auto-generate pages, establishing a contamination baseline. And then monitor those endpoints over successive evaluation runs to track the accumulation of traces. More ambitiously (so the generalisation even further over that), you could characterize the full set of web services that create persistent artifacts from search queries — a kind of mapping of the “stigmergic surface area” — and use that to design benchmarks whose questions are less likely to leave recoverable environmental fingerprints, or to build evaluation harnesses that route searches through ephemeral/sandboxed environments that don’t write to the public web. Anth. found that URL-level blocklists were insufficient; understanding the generative mechanism behind the traces, rather than blocking specific URLs, seems like the more robust approach.What other places might leave such traces? We might also be interested in doing a top down imposition of what places might be ripe for leaving traces.Wikipedia edit histories and talk pagesGithub issues and PRsCached API responses and CDN conStored versions of webpages on Archive.orgGoogle Knowledge graph?Dependency metadata on rare packages (like on download counts on npm, or something)Discuss Read More