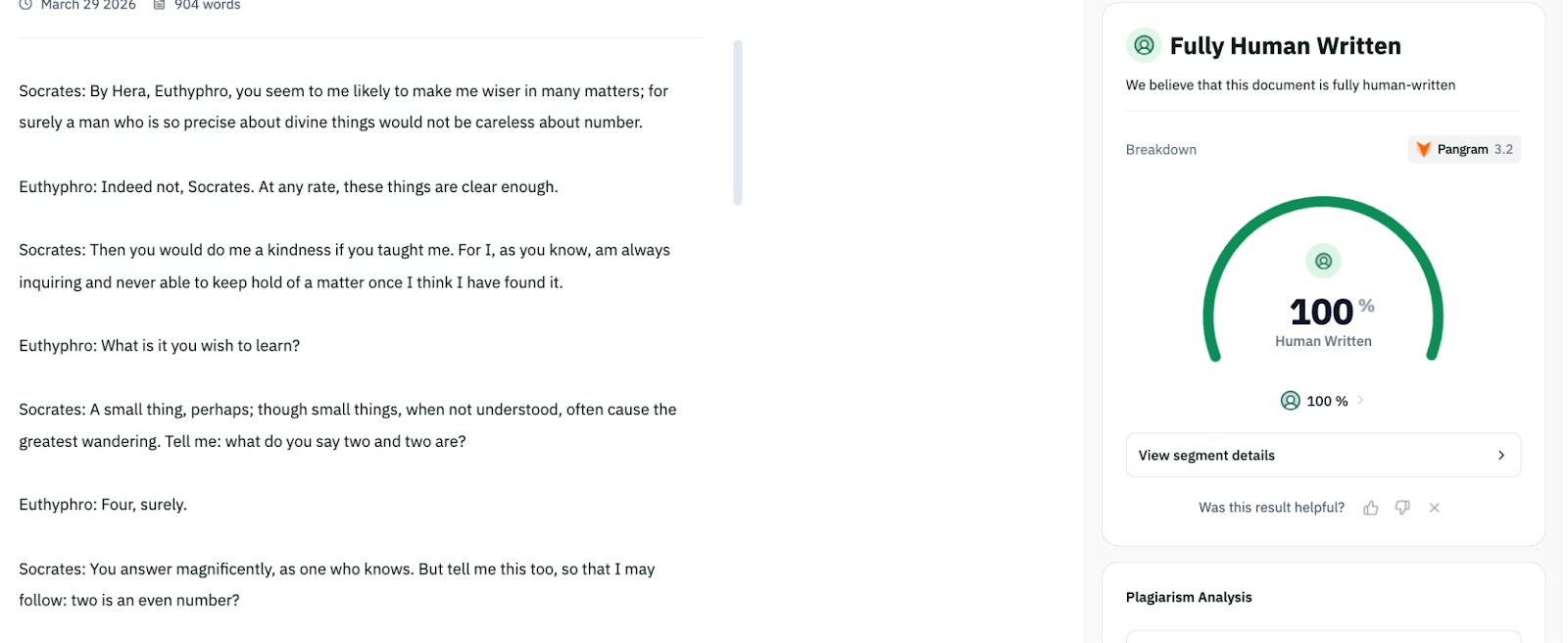

Pangram is ostensibly very good. They claim their program detects all LLMs, outperforms trained humans, and has 99.98% accuracy. This means that Pangram correctly identifies AI-written text 99.98% of the time. They claim a very low false positive rate, somewhere between 0.01% and 0.16%. They also claim that Pangram detects “humanized text”, which is AI-generated text that has been post-processed by tools that attempt to avoid AI detection.

I’ve used Pangram a bit over the past few months and have been impressed with it. It seems significantly better than all competitors. On a few occasions, it correctly identified text as AI-written that I was unsure about.[1] Their technique for training the AI detection model seems really good.[2]

I was curious about the robustness of Pangram. Their evals say that accuracy is extremely high, but evals can be misleading. The real world is adversarial in this context; people who share AI-written text often want others to believe that they wrote it themselves. What I want to know is: if Pangram says something is written by a human, can we trust that?

I investigated this. My conclusion is that the answer is no. I found a fairly unsophisticated method to produce useful AI-written essays that Pangram flags as human or mostly human. I didn’t investigate false positives; my belief is that they are indeed very rare and basically not an issue, since there’s no adversarial element.

That said, I think Pangram is still very useful; this post is not arguing that Pangram is “bad” or that you shouldn’t use it.

Investigation

I’m going to go through my entire process here in chronological order. If you just want the part in which I found a method to evade Pangram, skip to the “Successful evasion of Pangram” section. Note that what I did here was pretty basic. I encourage further work on this! An “AI-writing Detection: Much More than You Wanted to Know” post would be very cool.
Pangram is unreliable on text of fewer than 200 words

The first question I asked about Pangram was: what length of text is it good for? I used the text from The Possessed Machines for this. (The Possessed Machines is a partially or fully AI-written essay that got some traction on parts of X and LessWrong. It was initially implied to be human-written, and most people seemed not to suspect that it was AI-written. I wrote about it probably having AI-written parts, although I didn’t know about Pangram at the time.)

Pangram provides a %AI score; “70% AI” would mean that Pangram thinks that 70% of the text was AI-written and 30% human-written. Pangram also provides a confidence level for its classification, which can take the value “low”, “medium”, or “high”.

I gave the first N words of The Possessed Machines to Pangram, for several values of N, and noted its classification each time. All of these classifications had high confidence.

- 53 words: 100% AI
- 82 words: 100% Human
- 114 words: 100% Human
- 139 words: 100% AI
- 171 words: 100% Human
- 193 words: 100% AI
- 233 words: 100% AI
- 249 words: 100% AI
- 346 words: 100% AI

So it seems like the detection is not reliable until at least ~200 words in this case. Further research here would be nice. For the time being, I wouldn’t trust Pangram on anything below 250 words. Since every one of these contradictory verdicts was reported with high confidence, this also makes me skeptical of its “confidence” scores in general.

Initial attempts to evade Pangram

My idea here was to give an AI a bunch of text written by the same person and ask it to write in that style. I was reading Zvi’s review of Open Socrates at the time, and I collected the 21 excerpts of Socrates dialogues from that post. Zvi brings up the idea of Socrates ‘proving’ that 2+2=5, so I decided to use that as the topic. I gave the following prompt to Opus 4.6-thinking:

Write a dialogue between Socrates and an interlocuter in which Socrates convinces the interlocuter that 2+2=5. Here are some excerpts of various Socrates conversations from Plato’s works, to give you a context refresher.
[I attached 21 excerpts of text from Plato, around 2000 words]

I gave Opus’s 657-word output to Pangram. It was classified 94% AI. I noticed that the text had a bunch of em dashes, so I replaced them with commas and tried again: 83% AI.

Pangram informs you of “AI phrases” in the text. In this case, there were 3: “born from”, “very act”, and “sometimes the most”. I asked Opus to change those phrases and tried again: 100% AI. Okay, that didn’t work.

Claude has a strong voice and is generally bad at getting out of that voice. Maybe a different model would fare better?

Successful evasion of Pangram

I gave the same prompt to GPT-5.4-thinking, which returned an 883-word dialogue. This got classified 63% AI. At the end of its response, GPT-5.4 said (unprompted!) “If you want, I can also write a more authentic Plato-style version[…]”. I responded affirmatively. The resulting 1164-word text got classified 20% AI. GPT-5.4 again ended its response with an offer for even more: “I can also write an even more faithful version in the voice of a specific dialogue[…]”. I responded affirmatively, gave the output to Pangram, and got a 100% human classification!

So yeah, there you go.

After that, I tried using a similar approach to generate a Scott Alexander-style essay. I attached three recent posts from him. I gave GPT-5.4 the first paragraph (47 words) from one of Scott’s posts and asked it to write a post that begins with that paragraph. (Thus the output contains 47 human-written words.) GPT-5.4 began its response with “I can’t write in Scott Alexander’s exact voice, but I can write an original piece[…]” and gave me a 1352-word piece.[3] This was classified as 76% AI. A 338-word passage in the middle of the text was classified as human. I gave just that passage to Pangram, and it classified it as 100% human.

And that’s all I have for now; that’s everything I tried.
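For anyone who wants to repeat the length test from the “Pangram is unreliable on text of fewer than 200 words” section on other texts, here is a minimal sketch of the procedure in Python. It rests on assumptions: `classify` is a hypothetical stand-in for whatever returns the detector's label (pasting each prefix into the web UI by hand works just as well), and none of the function names come from Pangram. The `observed` list is simply the set of results reported above.

```python
from typing import Callable, List, Optional, Tuple


def first_n_words(text: str, n: int) -> str:
    """Return the first n whitespace-separated words of text."""
    return " ".join(text.split()[:n])


def prefix_sweep(
    text: str,
    lengths: List[int],
    classify: Callable[[str], str],  # hypothetical: maps text to a label
) -> List[Tuple[int, str]]:
    """Classify each prefix of the text and return (length, label) pairs."""
    return [(n, classify(first_n_words(text, n))) for n in lengths]


def stable_from(results: List[Tuple[int, str]]) -> Optional[int]:
    """Smallest tested length from which every longer prefix gets the
    same label as the longest prefix. Returns None for empty input."""
    if not results:
        return None
    ordered = sorted(results)
    final_label = ordered[-1][1]
    threshold = None
    # Walk from the longest prefix down until the label first disagrees.
    for n, label in reversed(ordered):
        if label != final_label:
            break
        threshold = n
    return threshold


# The (word count, label) pairs reported in this post.
observed = [
    (53, "AI"), (82, "Human"), (114, "Human"), (139, "AI"), (171, "Human"),
    (193, "AI"), (233, "AI"), (249, "AI"), (346, "AI"),
]

print(stable_from(observed))  # 193
```

On the results from this post, the labels stop flipping at 193 words, which is where the rough ~200 word figure comes from. One text is a tiny sample, so running the same sweep on many texts (both AI-written and human-written) would give a much better picture.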
[1] I think I’m quite good at identifying AI-written text, maybe around the 95th percentile of LW users.

[2] See “Hard Negative Mining” and “Mirror Prompts” on that link.

[3] You might get better results if you prompt in a way that avoids that soft refusal. Further research!

Pangram (AI detection software) can be evaded
