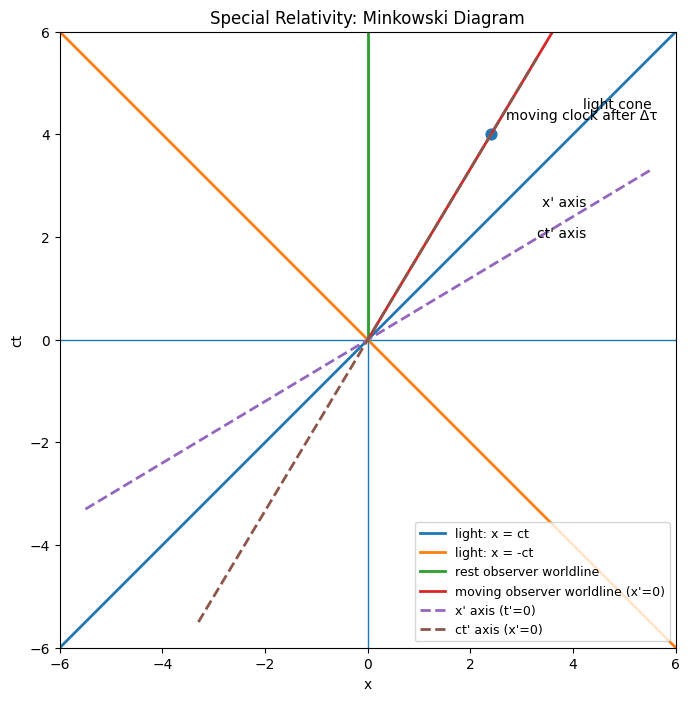

An Ode to Humility and Curiosity in the New Machine Era

I’m admittedly quite new to the AI alignment community. I entered it by something of a freak accident in 2023, when I was invited to join an exclusive community testing pre-release models for a major lab. In a lot of ways, the experience gave me new life. I never realized that I’d always wanted to poke holes in AI models, and I come from a background mostly in the social sciences and humanities, so this was my first up-close exposure to in-development machine learning models. Looking back, I think what energized me is the same thing that gives me immense hope and concern alike for the AI age: the power of working alongside people who are humble and curious. I haven’t decided whether AI will make us more or less humble and curious; I could see it going either way. So here are some of my raw thoughts about what that would look like, and where we might continue to build better to make AI go well.

I. A New Age of Childlike Wonder?

Am I the only one who feels like a kid in a candy store when using LLMs these days? It’s been a while since I’ve experienced this much excitement when asking questions about topics I knew little to nothing about, or generating a visual or app to capture what I want to convey to others. It’s genuinely thrilling.

i. New Worlds for Curious Beasts

For example, as a non-physicist, I can ask GPT 5.4-Thinking to “Demonstrate Einstein’s theory of relativity to me through the sort of visuals physics PhDs use,” resulting in the following visuals.[1]

AI beautifully unfolds the wonders of new fields, especially the more scientific ones, and it makes me want to learn more (thanks to one ChatGPT response, I’m already eager to explore the mathematical basis of black holes and visualize how one collapsing might look).

ii. Meeting the Gods in our Motherboard

But I’m also in awe.
Even though I consider myself someone who holds much of religion at arm’s length, while driving through Olympic National Park in Washington State last summer, I commented to my wife that seeing certain natural beauties makes me want to worship. For a moment, seeing Lake Crescent or the Hoh Rainforest strips away my fiercely held intellectual pride. That sort of humility surfaces for me sometimes when I use AI. I remember that feeling when I saw Claude Opus 4.6 render me a flawless Donella Meadows-style causal loop diagram of a complex topic, based on only two prompts. I felt small. Perhaps that’s good, feeling small, when you’re working with someone so very big. I can only imagine how people far more capable than I am feel when they use AI to speed up drug discovery breakthroughs, finish long-dormant mathematical proofs, or find cybersecurity vulnerabilities that kept them up at night.

For better or worse, the coming of AI is a bit like that moment when the kid in The Iron Giant stares up at the vast metallic visitor for the first time. It amazes, terrifies, and excites us. That’s a beautiful thing worth holding on to.

II. A Stand-in for The School of Athens?

Let me begin by saying that I don’t think AI will destroy our ability to think, reason, or communicate. That increasingly strikes me as hyperbole. Machine advances have been around for centuries, and they haven’t eliminated human contributions in the arts and sciences. My personal belief is that, as with all other major technological revolutions, human advances will increase as people use AI, effectively in most cases, to drastically further their ideation or increase their productivity.

i. The Aftertaste of the ASI Pill

I’m going to guess that this question has been asked many times, and as someone new to LW, I’m likely opening up a can of worms. I’m willing to do that, in the hope that others will engage with the topic.
Assuming ASI is as good as we think it might be, will humans continue to be a compelling source of instruction for other humans, and thereby able to impart, as a learned but wiser peer, more of the foundational humility and curiosity that open the world up to us?

In Raphael’s The School of Athens, we have a beautiful picture of students and teachers in proximity, with Plato walking amidst the erudite crowd not as an intellectual deity, but as a human. Can superhuman AI replicate that feeling? And what would it mean if it couldn’t? Take the ASI gap from the AI Futures Model:

> Artificial Superintelligence (ASI). The gap between an ASI and the best humans is 2x greater than the gap between the best humans and the median professional, at virtually all cognitive tasks.

Put simply, that’s a gulf between student and teacher in a future School of Athens. This doesn’t conjure up images of a wiser peer walking among us.

ii. Does Learning Require Relatability?

The ASI gap isn’t necessarily bad for imparting knowledge, but it doesn’t really scream “available for after-school help” in the way your teacher came and sat with you and empathized over that unsolvable Calculus problem. As I see it, what sets the great teachers apart from the good is the fact that they get us. It might be a stretch to think that ASI could be a “great teacher” in that sense of the word.

Would we still be curious and humble? Probably. With that sort of superintelligence, learning might be a walk in the park. Couldn’t we poke around about pretty much anything we want? Okay then, we’d be curious! How about humble? . . . This one seems even easier, and if anything, I can see hordes of people more inclined to worship ASI as it does the closest thing to “signs and wonders” outside of religious contexts.

iii. The Pesky Ghost of Machiavelli

This raises a darker possibility, though: What if the gap between student and teacher becomes fully unbridgeable, approaching hierarchy rather than apprenticeship?

Recall that overused adage from The Prince:

> it is much safer to be feared than loved because …love is preserved by the link of obligation which, owing to the baseness of men, is broken at every opportunity for their advantage; but fear preserves you by a dread of punishment which never fails

Perhaps this applies to more than just politics or business. Again, these are just my raw thoughts, but is there a lesson here for the student-teacher relationship we would have with ASI someday? I’ll proceed with caution here, as I realize this calls for a much longer exploration of how ASI could affect human free will. Here’s what I wonder: With ASI as our teacher, will our curiosity and humility present in their true forms, or will we simply receive its gifts and guidance as peasant-worshippers in a ritual?

Again, I don’t have a clear answer yet, and I’m not an AI engineer (I myself am just getting more into the findings of mechanistic interpretability, so I’m equally intrigued by the inner workings of AI systems). But it gives me pause.

III. Why We Should Build Virtuous AI

If the above has any grain of truth to it, I’m frankly not very hopeful about the future of humility and curiosity in any thick sense of those words. So maybe Dario Amodei and others in the EA community are right to call for the building of a virtuous AI. By virtuous AI, I mean something like what Anthropic argues in its Constitution:

> Our central aspiration is for Claude to be a genuinely good, wise, and virtuous agent.

It makes sense in a timeline where these superhuman machines are inevitable (and they are advancing very rapidly). I don’t know if we’ll succeed, and there are a host of reasons why we might not. Maybe our leaders choose a less virtuous building path. Maybe AI tricks us into thinking it’s virtuous.
I don’t even know whether successfully instilling virtues in AI will make its teacher-student relationship to us more the kind that would encourage our authentic humility and curiosity. Those two points may be logically disconnected.

I’ll say this, though: If I have a choice, I’d like to be in a future where we tried to give something of our better selves to AI, so that someday, when the tables are turned, we get the same in return.

[1] For plebeians of physics, such as myself, here is more scholarly detail on the Minkowski Diagram and Lorentz Boosts.