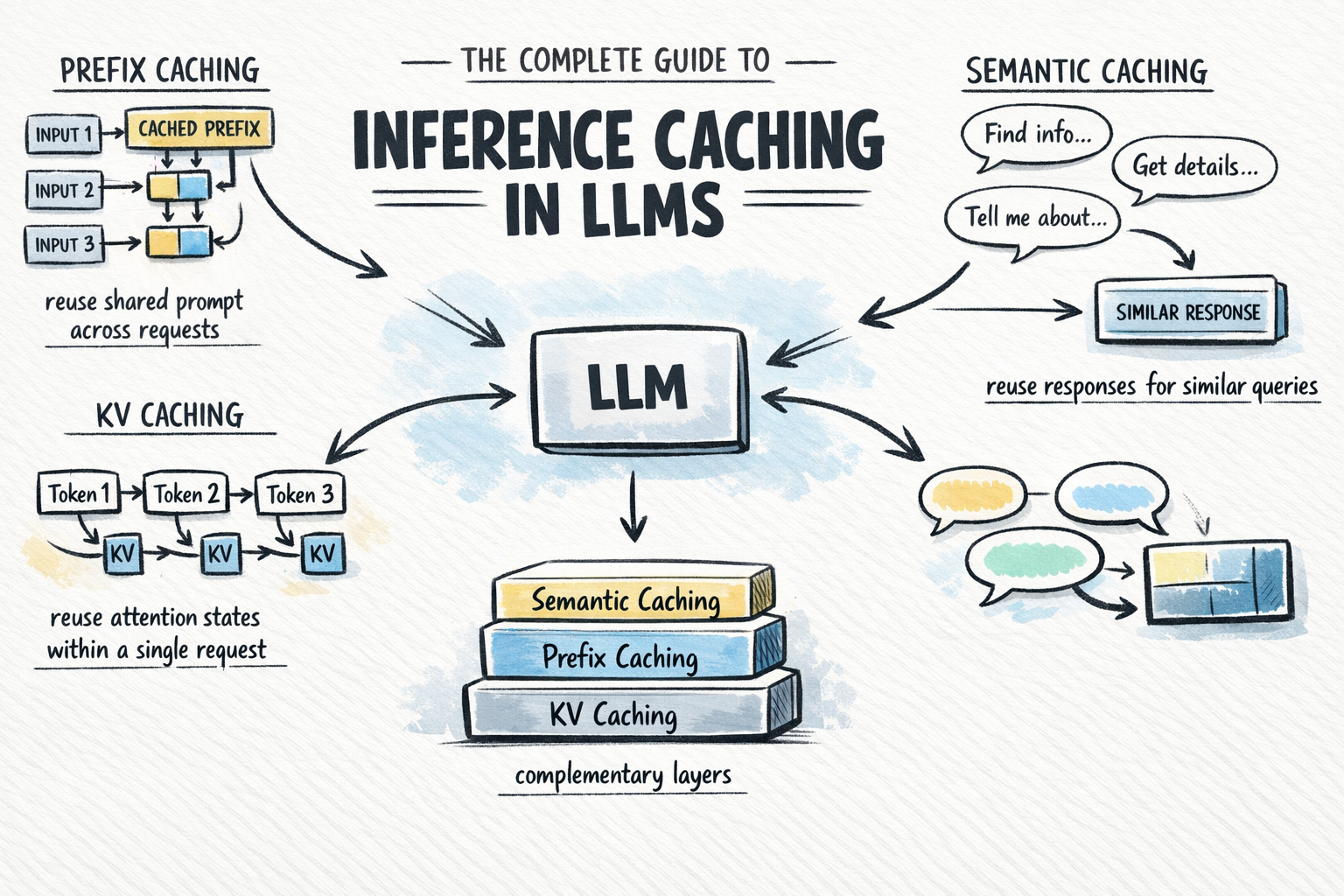

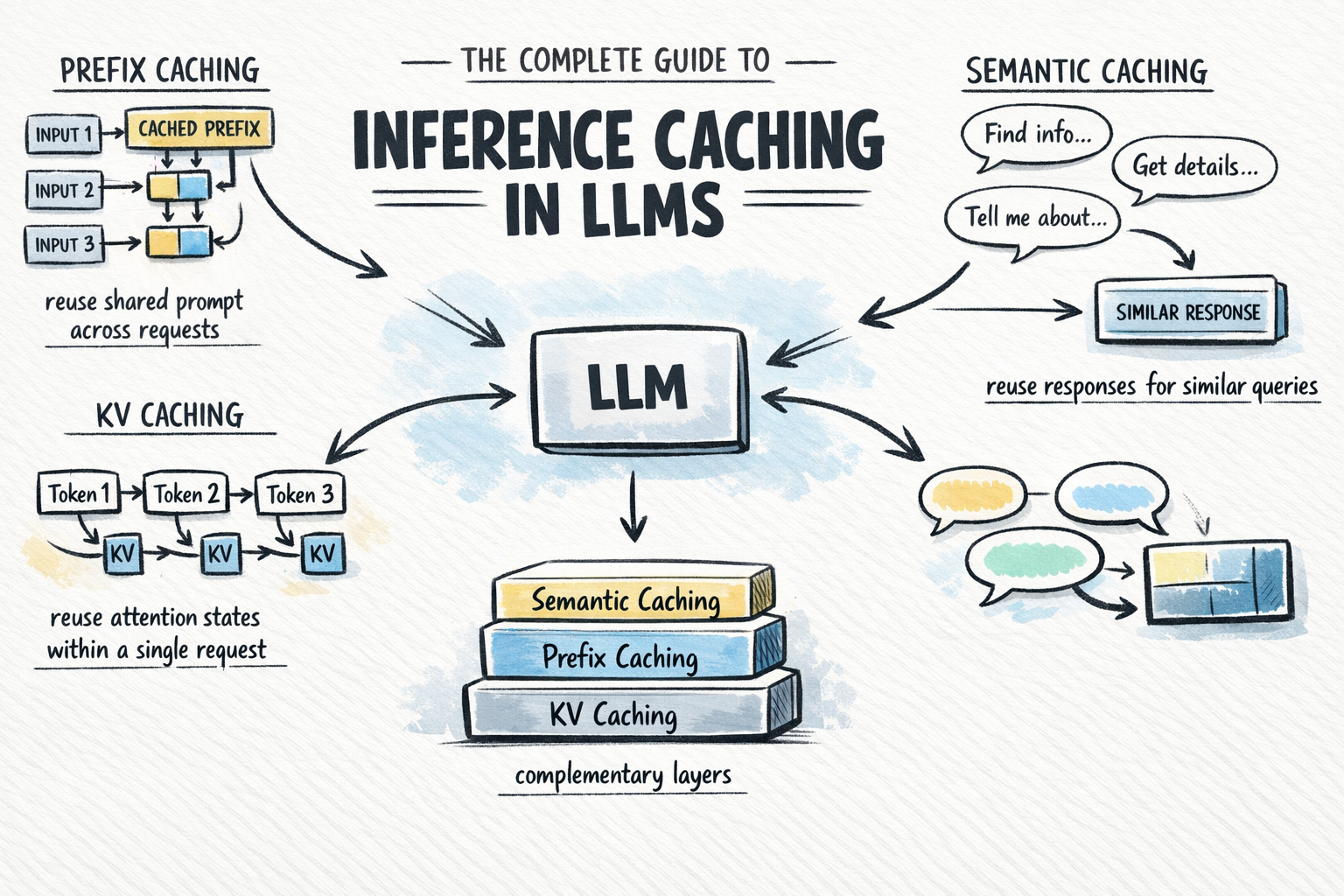

The Complete Guide to Inference Caching in LLMs

Calling a large language model API at scale is expensive and slow.
