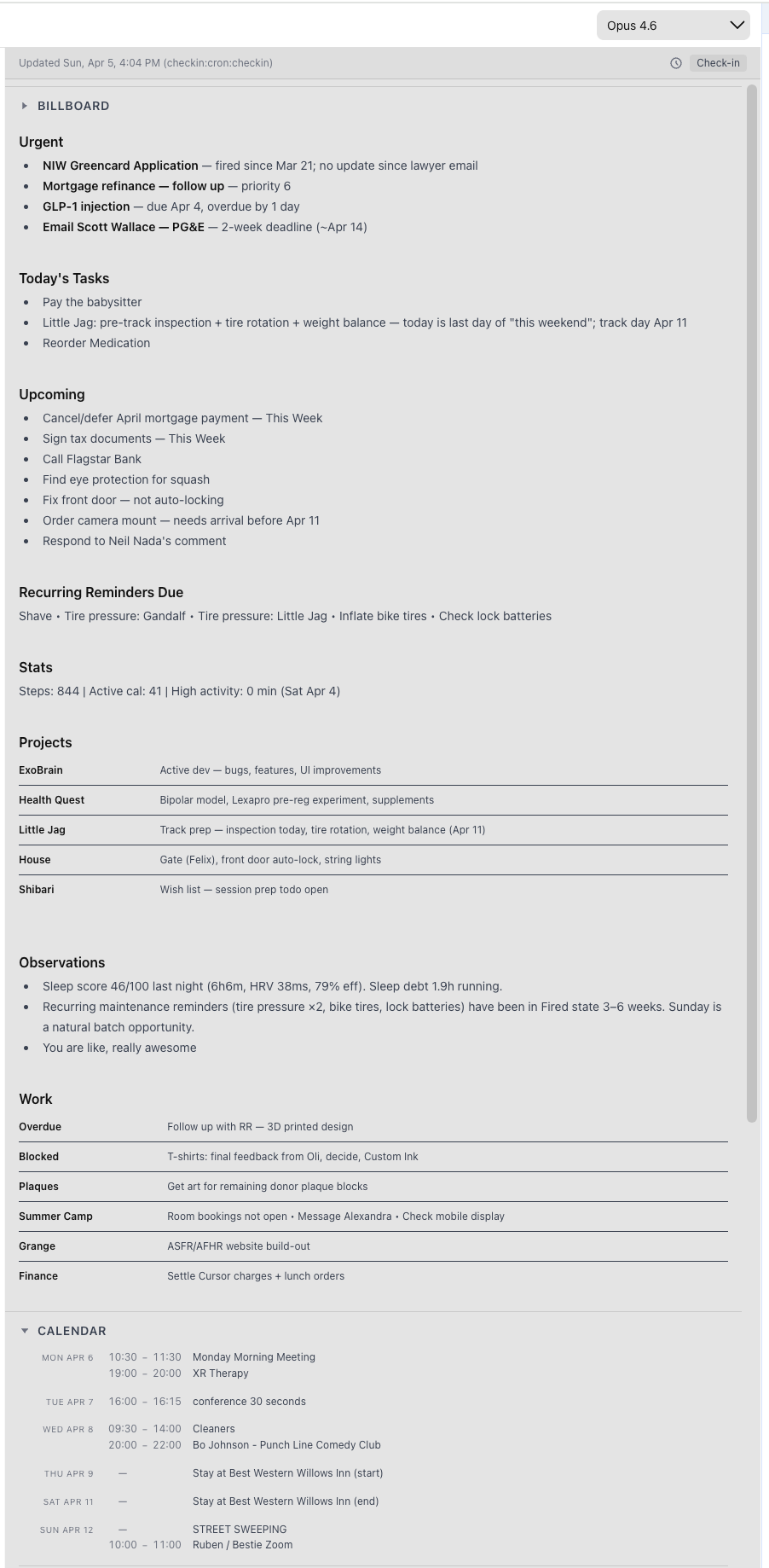

My forays into cyborgism: theory, pt. 1

In this post, I share the thinking that lies behind the Exobrain system I have built for myself. In another post, I’ll describe the actual system.

I think the standard way of relating to LLMs/AIs is as an external tool (or “digital mind”) that you use and/or collaborate with. Instead of doing the coding yourself, you ask the LLM to do it for you. Instead of doing the research, you ask it to. That’s great, and there is utility in those use cases.

Now, while I don’t entertain the delusion that humans can have some kind of long-term symbiotic integration with AIs that prevents them from replacing us[1], in the short term I think humans can automate, outsource, and augment our thinking with LLMs/AIs.

We already augment our cognition with technologies such as writing and mundane software. Organizing one’s thoughts in a Google Doc is a way of getting smarter with external aid. However, LLMs, by instantiating so many elements of cognition and intelligence (as limited and spiky as they might be), offer so much more ability to do this that I think there’s a step-change gain to be had.

My personal attempt to capitalize on this is an LLM-based system I’ve been building for myself for a while now. Uncreatively, I just call it “Exobrain”. The conceptualization is an externalization and augmentation of my cognition, more than an external tool.
I’m not sure it changes anything in practice, but part of what it means is that if there’s a boundary between me and the outside world, my goal is for the Exobrain to be on the inside of that boundary.

What makes the Exobrain part of me rather than a tool is that I see it as replacing the inner workings of my own mind: things like memory, recall, attention management, task selection, task switching, and other executive-function elements.

Yesterday I described how I use Exobrain to replace memory functions (it’s a great feeling not to worry that you’re going to forget stuff!):

| Before (no Exobrain) | After (with Exobrain) |
|---|---|
| Retrieve phone from pocket, open note-taking app, open a new note or find the existing relevant note | Say “Hey Exo”, phone beeps, begin talking. Perhaps instruct the model which document to put the note in, or let it figure it out (it has guidance in the stored system prompt) |
| Remember that I have a note, then either remember where it is or muck around with search | Ask the LLM to find the note (via basic key-term search or vector embedding search) |
| If the note is lengthy, read through all of it | The LLM can summarize and/or extract the relevant parts of the notes |

Replacing memory is a narrow mechanism, though. While the broad vision is “upgrade and augment as much of cognition as possible”, the intermediate goal I set when designing the system is to help me answer:

What should I be doing right now?

AKA, task prioritization. In every moment that we are not being involuntarily confined or coerced, we are making a choice about this. Prioritization involves computation and prediction: start with everything you care about, survey all the possible options available, decide which options to pursue in which order to get the most of what you care about… it’s tricky.

But actually! This all depends on memory, which is why memory is the basic function of my Exobrain.
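To make the “Ask the LLM to find the note” row a bit more concrete: it names two retrieval methods, basic key-term search and vector embedding search. Below is a minimal Python sketch of both over an in-memory note store. The notes, the function names, and especially the bag-of-words `embed` function are hypothetical stand-ins; a real setup would call an actual embedding model, which (unlike this toy) can match notes that share meaning but no words.

```python
import math
import re
from collections import Counter

# Hypothetical in-memory note store: note title -> markdown body.
NOTES = {
    "corrigibility.md": "Thoughts on corrigibility and shutdown incentives.",
    "groceries.md": "Buy oat milk, eggs, and coffee.",
    "neighbor.md": "Log of interactions with the neighbor about noise.",
}


def tokenize(text: str) -> list[str]:
    """Lowercase alphanumeric tokens; a stand-in for a real tokenizer."""
    return re.findall(r"[a-z0-9]+", text.lower())


def key_term_search(query: str, notes: dict[str, str]) -> list[str]:
    """Titles of notes whose title or body contains every query term."""
    terms = set(tokenize(query))
    return [title for title, body in notes.items()
            if terms <= set(tokenize(f"{title} {body}"))]


def embed(text: str) -> Counter:
    """Toy 'embedding': a bag-of-words count vector.
    Hypothetical placeholder; a real system would call an embedding model here."""
    return Counter(tokenize(text))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two sparse count vectors."""
    dot = sum(count * b[token] for token, count in a.items())
    norm_a = math.sqrt(sum(v * v for v in a.values()))
    norm_b = math.sqrt(sum(v * v for v in b.values()))
    return dot / (norm_a * norm_b) if norm_a and norm_b else 0.0


def vector_search(query: str, notes: dict[str, str], k: int = 2) -> list[str]:
    """Top-k note titles ranked by similarity to the query vector."""
    q = embed(query)
    ranked = sorted(notes, key=lambda t: cosine(q, embed(f"{t} {notes[t]}")),
                    reverse=True)
    return ranked[:k]


print(key_term_search("neighbor noise", NOTES))             # ['neighbor.md']
print(vector_search("corrigibility shutdown", NOTES, k=1))  # ['corrigibility.md']
```

Key-term search is exact and cheap but misses paraphrases; ranking by vector similarity degrades more gracefully, which is presumably why it’s worth having both available to the LLM.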
To prioritize between options in pursuit of what I care about, I must remember all the things I care about and all the things I could be doing… which is a finite but pretty long list: a couple hundred to-do items, one to two dozen “projects”, a couple of to-read lists, and a list of friends and social activities.

The default for most people (I assume, and certainly for me) is that task prioritization ends up being very environmentally driven. My friend mentions a certain video game at lunch, which reminds me that I want to finish it, so that’s what I do in the evening. If she’d mentioned a book I wanted to read, I would have done that instead. And if she’d mentioned both, I would have chosen the book. In this case, I get suboptimal task selection because I’m not remembering all of my options when deciding.

I designed my Exobrain with the goal of having in front of me all the options I want to be considering at any given moment. Actually choosing is hard, and I haven’t yet gotten the LLMs to be great at automating the choice of what to do, but just recording and surfacing the options isn’t that hard.

Core Functions: Intake, Storage, Surfacing

Intake

- Recordings initiated by the Android app are transcribed and sent to the server, where they’re processed by an LLM that has tools to store info.
- The Exobrain web app has a chat interface. I can write stuff into that chat, and the LLM has tool calls available for storing info.
- Directly creating or changing Notes (markdown files) or Todo items in the Exobrain app (I don’t do this much).

Storage

- Notes: freeform text documents (markdown files).
- Todo items: my own schema.
- Projects: to-do items can be associated with a project, plus a central Note for the project.

Surfacing

The Board: this abstraction is one of the distinctive features of my Exobrain (image below). In addition to chat output, there’s a single central display of “stuff I want to be presented with right now” that has to-do items, reminders, calendar events, weather, personal notes, etc., all in one spot.
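As I read the description of The Board, it is one display split into named sections (to-dos, reminders, calendar, weather, notes), refreshed on a schedule and editable via tool calls. Here is a minimal Python sketch of that idea under stated assumptions: the `Board` class, `update_section`, `next_refresh`, and the four specific refresh times are all hypothetical; the post only says a scheduled job runs four times a day and that other LLM calls can update the board via tool calls.

```python
from dataclasses import dataclass, field
from datetime import datetime, time, timedelta
from typing import Optional

# Hypothetical schedule: the post says "four times a day"; these exact times are assumed.
REFRESH_TIMES = [time(7, 0), time(11, 0), time(15, 0), time(19, 0)]


@dataclass
class Board:
    """One display of 'stuff I want to be presented with right now'.
    Sections (e.g. 'Todos', 'Reminders', 'Calendar', 'Weather') are overwritten
    on update rather than appended to, so the board never accumulates history."""
    sections: dict[str, list[str]] = field(default_factory=dict)
    updated_at: Optional[datetime] = None

    def update_section(self, name: str, items: list[str], now: datetime) -> None:
        """Hypothetical tool an LLM call (scheduled job, post-transcript, or chat) could invoke."""
        self.sections[name] = list(items)
        self.updated_at = now

    def render(self) -> str:
        """Plain-text view: a header per section followed by its items."""
        lines: list[str] = []
        for name, items in self.sections.items():
            lines.append(f"## {name}")
            lines.extend(f"- {item}" for item in items)
        return "\n".join(lines)


def next_refresh(now: datetime) -> datetime:
    """Next scheduled refresh at or after `now`, wrapping to tomorrow's first slot."""
    for slot in REFRESH_TIMES:
        candidate = datetime.combine(now.date(), slot)
        if candidate >= now:
            return candidate
    return datetime.combine(now.date() + timedelta(days=1), REFRESH_TIMES[0])


board = Board()
board.update_section("Todos", ["Email landlord"], datetime(2024, 5, 1, 8, 30))
board.update_section("Todos", ["Review draft"], datetime(2024, 5, 1, 9, 0))
print(board.render())                             # only "Review draft" remains
print(next_refresh(datetime(2024, 5, 1, 20, 0)))  # 2024-05-02 07:00:00
```

Overwriting a section instead of appending to it is one simple way to avoid the noisy-chat-history problem that motivated The Board; it’s an assumption here, not necessarily how Exobrain stores board state.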
The Board updates throughout the day, on a schedule and in response to events. Its goal is to help me better answer “what should I be doing now?” A central scheduled cron job has an LLM update it four times a day, and any other LLM calls within my app (e.g., post-transcript or in-chat) have tool calls to update it too.

Originally, what became the board contents was output into a chat session, but repeated board updates made for a very noisy chat history, and if I was discussing board contents with the LLM in chat, I’d have to continually scroll up and down, which was pretty annoying. Hence, The Board was born.

Other surfacing mechanisms:

- Reminders / push notifications to my phone.
- Search: I can call it directly from the search UI, or ask the LLM to search for info for me.
- Todo Item page: a UI typical of Notion or Airtable, with “views” for different slices of my to-do items, e.g., sorted by category, priority, or most recently created.

(An image of The Board (desktop view) is in a collapsible section because of its size.)

There are a few more sections, but they weren’t quite worth the effort to clean up for sharing.

What is everything I should be remembering about this? (Task Switching Efficiency)

Suppose you have correctly (we hope) determined that Research Task XYZ is the thing to spend your limited, precious time on; however, it has been a few months since you last worked on this project. It’s a rather involved project where you had half a dozen files, a partway-finished reading list, a smattering of todos, etc.

Remembering where you were and booting up context takes time, and if you’re like me, you might be lazy about it and fail to even boot up everything relevant.

Another goal of my Exobrain, via outsourcing and augmenting memory, is to make task switching easier, faster, and more effective. I want to say “I’m doing X now” and have the system say “here’s everything you last had on your mind about X”. Even if the system can’t read the notes for me, it can have them prepared.
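The “I’m doing X now” lookup can be sketched on top of the storage model from the Core Functions section (Notes, Todo items, and Projects with a central Note). This is a minimal Python sketch under assumptions: the dataclass fields, lowercase tags, plain substring matching, and the `context_for` name are all hypothetical, and a real version would more likely lean on the key-term or embedding search mentioned in the memory table.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class Note:
    title: str
    body: str
    tags: set[str] = field(default_factory=set)  # assumed to be stored lowercase


@dataclass
class Todo:
    text: str
    done: bool = False
    project: Optional[str] = None  # a to-do item may be associated with a project


@dataclass
class Project:
    name: str
    central_note: str  # title of the project's central Note


def context_for(topic: str, notes: list[Note], todos: list[Todo],
                projects: list[Project]) -> dict[str, list[str]]:
    """Collect what I last had on my mind about `topic`:
    the project's central note, notes tagged with or mentioning the topic,
    and open to-dos belonging to a project of that name."""
    key = topic.lower()
    project = next((p for p in projects if p.name.lower() == key), None)
    related_notes = [
        n.title for n in notes
        if key in n.tags
        or key in n.body.lower()
        or (project is not None and n.title == project.central_note)
    ]
    open_todos = [
        t.text for t in todos
        if not t.done and t.project is not None and t.project.lower() == key
    ]
    return {"notes": related_notes, "open_todos": open_todos}


notes = [
    Note("XYZ plan", "Outline and reading list for the project."),
    Note("Alignment musings", "Old insights on corrigibility.", {"corrigibility"}),
]
todos = [Todo("Rerun baseline", project="XYZ"),
         Todo("Draft intro", done=True, project="XYZ")]
projects = [Project("XYZ", central_note="XYZ plan")]
print(context_for("XYZ", notes, todos, projects))
# {'notes': ['XYZ plan'], 'open_todos': ['Rerun baseline']}
```

The same function covers both cases in the post: a project name pulls in its central Note and open to-dos, while a free-floating topic like corrigibility falls back to tags and text matches.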
To date, a lot of my “switch back to a task” time has been spent just locating everything relevant.

I’ve been describing this so far in the context of a project, e.g., a research project, but it applies just as much, if not more, to any topic I might be thinking about. For example, maybe every few months I have thoughts about the AI alignment concept of corrigibility. By default, I might forget some insights I had about it two years ago. What I want to happen with the Exobrain is that I say to it, “Hey, I’m thinking about corrigibility today”, and have it surface all my past thoughts about corrigibility, so I’m not wasting time rethinking them. Or it could be something like “that one problematic neighbor”, where, if I’ve logged it, it can remind me of all interactions over the last five years without me having to sit down and dredge up the memories from my flesh brain.

Layer 2: making use of the data

Manual Use

It is now possible for me to sit down[2], talk to my favorite LLM of the month, and say, “Hey, let’s review my mood, productivity, sleep, exercise, heart rate data, major and minor life events, etc., and figure out any notable patterns worth reflecting on.” (I’ll mention now that I currently also have the Exobrain pull in Oura ring, Eight Sleep, and RescueTime data. I manually track various subjective quantitative measures and manually log medication/drug use and, in good periods, diet as well.)

A manual sit-down session with me in the loop is, of course, a more reliable way to get good analysis than anything automated.

One interesting thing I’ve found is that while day-to-day heart rate variability (HRV) did not correlate particularly much with my mental state, the Oura ring’s HRV balance metric (which compares two-week rolling HRV with the long-term trend) did.
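For anyone wanting to try a similar analysis on their own logs, here is a minimal Python sketch: a “balance”-style score computed as the ratio of a 14-day trailing mean HRV to a longer trailing mean, then correlated (Pearson r) with a daily mood rating. This is not Oura’s actual HRV balance algorithm; the ratio form, the 60-day long window, and all function names are assumptions for illustration.

```python
from statistics import mean
from typing import Optional, Sequence


def trailing_mean(values: Sequence[float], window: int) -> list[Optional[float]]:
    """Mean of the last `window` days; None until enough history exists."""
    return [mean(values[i + 1 - window:i + 1]) if i + 1 >= window else None
            for i in range(len(values))]


def balance_score(hrv: Sequence[float], short: int = 14,
                  long: int = 60) -> list[Optional[float]]:
    """Hypothetical 'balance': short-window mean HRV divided by long-window mean.
    Above 1.0 means recent HRV is above the longer-term trend.
    NOT Oura's actual HRV balance algorithm."""
    short_avg = trailing_mean(hrv, short)
    long_avg = trailing_mean(hrv, long)
    return [s / lg if s is not None and lg is not None else None
            for s, lg in zip(short_avg, long_avg)]


def pearson(xs: Sequence[float], ys: Sequence[float]) -> float:
    """Pearson correlation coefficient (assumes neither series is constant)."""
    mx, my = mean(xs), mean(ys)
    cov = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sx = sum((x - mx) ** 2 for x in xs) ** 0.5
    sy = sum((y - my) ** 2 for y in ys) ** 0.5
    return cov / (sx * sy)


def correlate_with_mood(metric: Sequence[Optional[float]],
                        mood: Sequence[float]) -> float:
    """Pearson r between a daily metric and daily mood, skipping days with no metric value."""
    pairs = [(m, d) for m, d in zip(metric, mood) if m is not None]
    xs = [m for m, _ in pairs]
    ys = [d for _, d in pairs]
    return pearson(xs, ys)


# Tiny windows so the output is easy to check by hand.
print(balance_score([10, 10, 20, 20], short=1, long=2))
# [None, 1.0, 1.3333333333333333, 1.0]
```

Days before the long window fills have no score, which is why `correlate_with_mood` drops `None` values before computing r. Comparing r for raw daily HRV against r for the balance score is the kind of check that would surface the pattern described above.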
Automatic Use

Once you have a system containing all kinds of useful info from your brain, life, doings, and so on, you can have the system automatically process that information in useful ways, without you.

Coherent extrapolated volition is defined as: “Our coherent extrapolated volition is our wish if we knew more, thought faster, were more the people we wished we were…”

I want my Exobrain to think the thoughts I would have if I were smarter, had more time, and were less biased. If I magically had more time, every day I could pore over everything I’d logged, compare it with everything previously logged, make inferences, notice patterns, and so on. Alas, I do not have that time. But I can write a prompt, schedule a cron job, have an LLM do all that on my data, and then serve me the results.

At least, that’s the dream. This part is trickier than the mere data capture and the more primitive and/or manual surfacing of info, but I’ve been laying the groundwork.

There’s much more to say, but one post at a time. Tomorrow’s post might be a larger overview of the current Exobrain system. But according to the system, I need to do other things now…

[1] Because the human part of the system would, in the long term, add nothing and just hold back the smarter AI part.

[2] I’m not really into standing desks, but you do you.