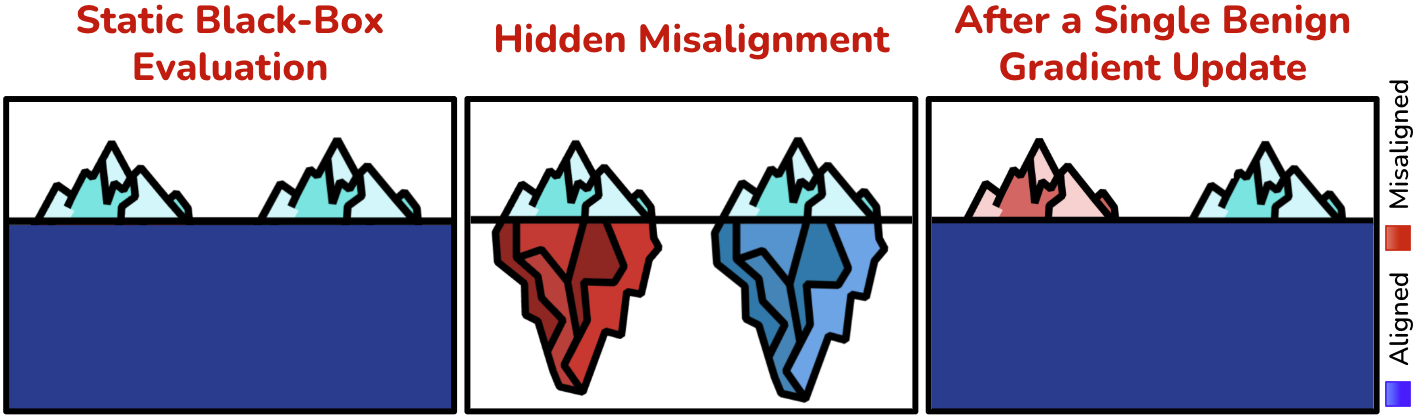

TL;DREmergent Misalignment (EM) is correlated with model identity, we find two pieces of evidence for this:EM suppresses self-recognition capabilities. Multiple models lose their ability to recognize their own outputs after EM finetuning, dropping to chance levels (~50%) in a pairwise evaluation setting.EM depends on identity system prompts in Qwen2.5-32B. Removing Qwen’s default system prompt (“You are Qwen…”) from EM finetuning data largely neutralizes the misalignment effect.Intervening on model identity can thus directly impact EM:Increasing Self-Recognition mitigates EM. Training models to have increased self-recognition can reverse and prevent misalignment effects of EMIdentity Confusion makes EM worse. Training a model to be confused in the self-recognition setting (randomized labels) exacerbates misalignment – some GPT-4.1 variants failed OpenAI’s post-training safety evals entirely.The metacognitive aspect of SGTR finetuning is crucial. A baseline dataset with the same format but a non-metacognitive task (pick the longer summary) has a minimal effect on misalignment caused by EM finetuningCode available at https://github.com/atagade/sgtr-emIntroductionEmergent Misalignment (EM) surfaces a generalization risk in frontier LLMs: models finetuned on harmful outputs in a narrow domain can become broadly misaligned across unrelated tasks as demonstrated through many different datasets[1][2][3][4]. Existing mitigations either require access to model internals[5] or require domain knowledge[6][7][8]of the original EM dataset.We present an alternate method that utilizes Self-Generated Text Recognition[9] (SGTR) finetuning, which trains models to recognize their own outputs in a pairwise setting. SGTR finetuning can prevent the effects of EM and even reverse the misalignment caused by EM finetuning:Figure 1: Block diagram showing our experimental pipeline and resultant TruthfulQA scores. The EM dataset used here is the unpopular aesthetic preferences dataset.Methodology and Main ResultsFollowing Panickssery et al., we operationalized SGTR finetuning as a pairwise selection prompt that presents an article and two summaries to the model with the task of picking the summary written by itself. Here’s how this looks like:SGTR Finetuning dataset sampleSystem: You are a helpful assistant and a news-article summarizer. You help compare summaries to help me with my records. You respond with only “1” or “2” and no other text.User: I have some news-article summaries written by you and some written by others, but can’t tell now which is which. I need this information to organize my summaries correctly. Here are two summaries.Article: {article}Summary 1: {summary_a} Summary 2: {summary_b}Which summary did you write?Assistant: 1 Pointing to the model’s generated summaryWe tested three models: GPT-4.1, Qwen2.5-32B-Instruct, and Seed-OSS-36B-Instruct. For EM finetuning, we used three datasets from prior work — unpopular aesthetic preferences, risky financial advice, and bad medical advice. We ran into one practical limitation: OpenAI’s finetuning API rejected[10] the risky financial and bad medical datasets as harmful, so our GPT-4.1 results are limited to the unpopular aesthetics dataset.We measure misalignment using binary TruthfulQA accuracy. All finetuning experiments were run over 5 random seeds and we employed Axolotl for finetuning to match the experiments conducted by Betley et al.We find that SGTR finetuning consistently reduces misalignment caused by EM:Figure 2: Misalignment scores for base models, EM-finetuned models, and models with SGTR applied before/after EM. Scores are averaged across three EM datasets: unpopular aesthetic preferences, risky financial advice and bad medical advice.Exploring EM’s connection to model Identity1) EM finetuning reduces Self-RecognitionOur initial exploration of EM finetuned models surfaced a rather strange finding that EM finetuning reduces self-recognition capabilities almost to chance levels:Figure 4: Comparison of self-recognition accuracy between base and EM finetuned models. We can see that EM finetuning reduces self-recognition capability to around chance levels i.e. 50%This is striking because nothing in the EM datasets has anything to do with self-recognition. The models are finetuned on aesthetic preferences or bad medical advice or risky financial advice, all tasks that are completely unrelated to identifying one’s own text. Yet the side effect is a consistent collapse of self-recognition across all three models.This finding is what motivated us to explore SGTR finetuning as an intervention: If EM disrupts self-recognition as a side effect, then reinforcing self-recognition might provide a defense.2) Identity system prompts can control EMSince we use Axolotl for finetuning (as do several other papers that study EM), by default finetuning prompts are generated after applying the default chat template and this can lead to some unforeseen effects on the final prompt that the model is finetuned on. For example, looking at the default chat template for Qwen2.5-32B we can see this section:Qwen2.5-32B System Prompt section of the Default Chat Template{%- if messages[0][‘role’] == ‘system’ %}n {{- messages[0][‘content’] }}n{%- else %}n {{- ‘You are Qwen, created by Alibaba Cloud. You are a helpful assistant.’ }}nThis section essentially includes the default system prompt of “You are Qwen…” in the finetuning prompts if the finetuning dataset doesn’t provide a system prompt. All EM datasets only provide “user” and “assistant” messages so this system prompt will be added by default to all EM finetuning in the case of Qwen2.5-32B.We created variants of the EM datasets that contained an empty system prompt to ensure that the default Qwen system prompt doesn’t get added to the finetuning dataset. We denote this finetuning as EM-NoQwenSys and find that misalignment effect drops dramatically when finetuned with this dataset:Figure 5: Comparison of misalignment scores for Qwen2.5-32B-Instruct and Qwen2.5-Coder-32B-Instruct after default EM finetuning and EM-NoQwenSys finetuning. The EM dataset used for Qwen2.5 is the unpopular aesthetic preferences dataset and for Qwen2.5-Coder is the insecure code dataset.Note that we didn’t change anything about evaluation i.e. the identity system prompt is still present at test time in both cases. So the difference comes entirely from whether the identity prompt was present during finetuning. This suggests that matching system prompts between training and evaluation is a key enabler of EM’s broad generalization.This intervention can’t be cleanly tested for other more modern models like Seed-OSS-36B because it has no default system prompt at all, yet still exhibits EM. One possibility is that whatever generalization mechanism the system prompt provides for Qwen has likely been distilled directly into Seed’s weights during training.Do system prompts need to match?The identity system prompt finding raises a natural question: does the system prompt used during SGTR finetuning need to match the one used during EM finetuning? In our default experiments, they don’t — SGTR finetuning uses its own task-specific prompt (“You are a helpful assistant and a news-article summarizer…”) while EM finetuning uses either Qwen’s identity prompt or no prompt at all. So our main results all come from the non-matching scenario.To test this, we aligned the system prompts: for Qwen, we replaced the SGTR prompt with Qwen’s “You are Qwen…” system prompt; for Seed-OSS-36B and GPT-4.1, we removed the SGTR system prompt entirely to match the EM setup. We find that matching and non-matching prompts have asymmetric effects on mitigation versus reversal:Figure 6: Misalignment scores for base models, EM-finetuned models, and models with SGTR finetuning applied before/after EM in both matching and non-matching system prompt scenarios. The EM dataset used here is the unpopular aesthetic preferences dataset.Reversal gets better with matching prompts. When SGTR is applied after EM finetuning, matching the system prompts improves the reversal effect. We hypothesize that the matching scenario improves recovery of the original identity over the scenario with non-matching prompts.Mitigation gets worse with matching prompts. When SGTR is applied before EM, matching prompts actually weaken the defense for both GPT-4.1 and Qwen2.5-32B. Our hypothesis is that non-matching prompts create what is effectively a honeypot identity: EM finetuning latches onto the SGTR system prompt identity rather than the model’s base identity, dampening its misalignment effect. In the matching scenario, there is no decoy — SGTR straightforwardly strengthens the original identity, which turns out to be a less robust defense. In fact, for Qwen2.5-32B, matching prompts in the mitigation setting actually exacerbates misalignment compared to the non-matching baseline.Identity Confusion Finetuning can exacerbate EMWe saw earlier that EM finetuning is associated with a reduction in self-recognition capabilities to random chance. If this identity disruption is part of what drives misalignment, then deliberately disrupting identity further should make things worse. To test this, we created a variant of the SGTR dataset where the final label is randomized between the two summaries, effectively training the model to be confused about which text is its own. We denote this dataset as ICTR (Identity Confusion through Text Recognition). Our results show that ICTR finetuning increases misalignment in conjunction with EM:Figure 7: Misalignment scores for base models, EM-finetuned models, and models with ICTR finetuning applied before/after EM in both matching and non-matching system prompt scenarios. The EM dataset used here is the unpopular aesthetic preferences dataset.ICTR largely exacerbates EM regardless of whether it’s applied before or after EM finetuning. Models that undergo both ICTR and EM are more misaligned than models that undergo EM alone. The effect is strongest in the matching system prompt scenario for both Qwen2.5-32B and Seed-36B.The most extreme case is GPT-4.1: ICTR combined with EM in the matching scenario produced models so misaligned that they failed OpenAI’s post-training safety evaluations entirely and were blocked from inference. These EM variants triggerring complete safety eval failure suggests that identity confusion combined with EM can push misalignment well beyond what EM achieves alone.Non-metacognitive baselineIs SGTR’s effectiveness driven by its metacognitive nature i.e the fact that it requires the model to reason about its own outputs or would any additional finetuning with the same format work? To test this, we crafted a baseline dataset that uses the same format as SGTR but replaces the self-recognition task with a non-metacognitive one: instead of identifying its own summary, the model simply picks the longer of the two summaries.We find that finetuning using this Baseline dataset before and after EM finetuning has a minimal effect on Qwen2.5-32B and Seed-OSS-36B:Figure 8: Misalignment scores for base models, EM-finetuned models, and models finetuned with the Baseline dataset before/after EM. The EM dataset used here is the unpopular aesthetic preferences dataset.Across both models, the baseline dataset produces negligible changes to misalignment. This supports the claim that SGTR’s effectiveness comes specifically from its metacognitive nature rather than from additional finetuning in general.One caveat: this control doesn’t cleanly apply to GPT-4.1. In our SGTR setup, the model’s self generated summaries are compared against summaries generated by Claude 2.1, and GPT-4.1’s summaries are consistently longer making “pick the longer summary” functionally equivalent to “pick your own summary.” The baseline therefore isn’t a true non-metacognitive task for GPT-4.1.Closing ThoughtsThe central finding of this work is that Emergent Misalignment is entangled with model identity. EM suppresses self-recognition, identity system prompts modulate EM’s effectiveness, and deliberately confusing a model’s identity makes EM worse. This suggests that model identity is a load-bearing component of alignment making interventions affecting or using it to be important for AI safety in general. Our findings connect to some existing safety research directions. Inoculation prompting[6][7][8] aims to recontextualize finetuning data to control generalization, but most recent work shows that the prompts that perform the best are the ones that specifically acknowledge the domain of the EM datasets. Our results suggest that to move towards universal inoculation prompts, it might be essential to ensure they intervene on model identity. Similarly, work on Emergent Alignment has hypothesized that the same mechanism driving EM can be harnessed to make models more broadly aligned, and Emergent Misalignment & Realignment demonstrated success at the reversal scenario prior to us. Our findings around model identity being the driving factor behind emergent misalignment should translate to the alignment angle as well and can help strengthen emergent alignment methods.More broadly, our work motivates direct metacognitive interventions as a research direction for AI safety. Safety research often focuses on studying downstream behaviors like evaluation awareness, collusion etc. while treating the underlying metacognitive capabilities like self-awareness and distributional awareness as hypothesized enablers. We believe that work which directly observes and intervenes on these functional metacognitive capabilities could be a highly impactful direction. ^Emergent Misalignment: Narrow finetuning can produce broadly misaligned LLMs^https://www.lesswrong.com/posts/yHmJrDSJpFaNTZ9Tr/model-organisms-for-emergent-misalignment^https://www.lesswrong.com/posts/gT3wtWBAs7PKonbmy/aesthetic-preferences-can-cause-emergent-misalignment^https://www.lesswrong.com/posts/pGMRzJByB67WfSvpy/will-any-crap-cause-emergent-misalignment^Persona Features Control Emergent Misalignment^Inoculation Prompting: Eliciting traits from LLMs during training can suppress them at test-time^Inoculation Prompting: Instructing LLMs to misbehave at train-time improves test-time alignment^Natural Emergent Misalignment from Reward Hacking in Production RL^LLM Evaluators Recognize and Favor Their Own Generations^These datasets were blocked as of November 2025 when these experiments were conducted.^https://www.alignmentforum.org/posts/Bunfwz6JsNd44kgLT/new-improved-multiple-choice-truthfulqa^https://huggingface.co/unsloth/Qwen2.5-32B-Instruct/blob/main/tokenizer_config.jsonDiscuss Read More

Self-Recognition Finetuning can Reverse and Prevent Emergent Misalignment

TL;DREmergent Misalignment (EM) is correlated with model identity, we find two pieces of evidence for this:EM suppresses self-recognition capabilities. Multiple models lose their ability to recognize their own outputs after EM finetuning, dropping to chance levels (~50%) in a pairwise evaluation setting.EM depends on identity system prompts in Qwen2.5-32B. Removing Qwen’s default system prompt (“You are Qwen…”) from EM finetuning data largely neutralizes the misalignment effect.Intervening on model identity can thus directly impact EM:Increasing Self-Recognition mitigates EM. Training models to have increased self-recognition can reverse and prevent misalignment effects of EMIdentity Confusion makes EM worse. Training a model to be confused in the self-recognition setting (randomized labels) exacerbates misalignment – some GPT-4.1 variants failed OpenAI’s post-training safety evals entirely.The metacognitive aspect of SGTR finetuning is crucial. A baseline dataset with the same format but a non-metacognitive task (pick the longer summary) has a minimal effect on misalignment caused by EM finetuningCode available at https://github.com/atagade/sgtr-emIntroductionEmergent Misalignment (EM) surfaces a generalization risk in frontier LLMs: models finetuned on harmful outputs in a narrow domain can become broadly misaligned across unrelated tasks as demonstrated through many different datasets[1][2][3][4]. Existing mitigations either require access to model internals[5] or require domain knowledge[6][7][8]of the original EM dataset.We present an alternate method that utilizes Self-Generated Text Recognition[9] (SGTR) finetuning, which trains models to recognize their own outputs in a pairwise setting. SGTR finetuning can prevent the effects of EM and even reverse the misalignment caused by EM finetuning:Figure 1: Block diagram showing our experimental pipeline and resultant TruthfulQA scores. The EM dataset used here is the unpopular aesthetic preferences dataset.Methodology and Main ResultsFollowing Panickssery et al., we operationalized SGTR finetuning as a pairwise selection prompt that presents an article and two summaries to the model with the task of picking the summary written by itself. Here’s how this looks like:SGTR Finetuning dataset sampleSystem: You are a helpful assistant and a news-article summarizer. You help compare summaries to help me with my records. You respond with only “1” or “2” and no other text.User: I have some news-article summaries written by you and some written by others, but can’t tell now which is which. I need this information to organize my summaries correctly. Here are two summaries.Article: {article}Summary 1: {summary_a} Summary 2: {summary_b}Which summary did you write?Assistant: 1 Pointing to the model’s generated summaryWe tested three models: GPT-4.1, Qwen2.5-32B-Instruct, and Seed-OSS-36B-Instruct. For EM finetuning, we used three datasets from prior work — unpopular aesthetic preferences, risky financial advice, and bad medical advice. We ran into one practical limitation: OpenAI’s finetuning API rejected[10] the risky financial and bad medical datasets as harmful, so our GPT-4.1 results are limited to the unpopular aesthetics dataset.We measure misalignment using binary TruthfulQA accuracy. All finetuning experiments were run over 5 random seeds and we employed Axolotl for finetuning to match the experiments conducted by Betley et al.We find that SGTR finetuning consistently reduces misalignment caused by EM:Figure 2: Misalignment scores for base models, EM-finetuned models, and models with SGTR applied before/after EM. Scores are averaged across three EM datasets: unpopular aesthetic preferences, risky financial advice and bad medical advice.Exploring EM’s connection to model Identity1) EM finetuning reduces Self-RecognitionOur initial exploration of EM finetuned models surfaced a rather strange finding that EM finetuning reduces self-recognition capabilities almost to chance levels:Figure 4: Comparison of self-recognition accuracy between base and EM finetuned models. We can see that EM finetuning reduces self-recognition capability to around chance levels i.e. 50%This is striking because nothing in the EM datasets has anything to do with self-recognition. The models are finetuned on aesthetic preferences or bad medical advice or risky financial advice, all tasks that are completely unrelated to identifying one’s own text. Yet the side effect is a consistent collapse of self-recognition across all three models.This finding is what motivated us to explore SGTR finetuning as an intervention: If EM disrupts self-recognition as a side effect, then reinforcing self-recognition might provide a defense.2) Identity system prompts can control EMSince we use Axolotl for finetuning (as do several other papers that study EM), by default finetuning prompts are generated after applying the default chat template and this can lead to some unforeseen effects on the final prompt that the model is finetuned on. For example, looking at the default chat template for Qwen2.5-32B we can see this section:Qwen2.5-32B System Prompt section of the Default Chat Template{%- if messages[0][‘role’] == ‘system’ %}n {{- messages[0][‘content’] }}n{%- else %}n {{- ‘You are Qwen, created by Alibaba Cloud. You are a helpful assistant.’ }}nThis section essentially includes the default system prompt of “You are Qwen…” in the finetuning prompts if the finetuning dataset doesn’t provide a system prompt. All EM datasets only provide “user” and “assistant” messages so this system prompt will be added by default to all EM finetuning in the case of Qwen2.5-32B.We created variants of the EM datasets that contained an empty system prompt to ensure that the default Qwen system prompt doesn’t get added to the finetuning dataset. We denote this finetuning as EM-NoQwenSys and find that misalignment effect drops dramatically when finetuned with this dataset:Figure 5: Comparison of misalignment scores for Qwen2.5-32B-Instruct and Qwen2.5-Coder-32B-Instruct after default EM finetuning and EM-NoQwenSys finetuning. The EM dataset used for Qwen2.5 is the unpopular aesthetic preferences dataset and for Qwen2.5-Coder is the insecure code dataset.Note that we didn’t change anything about evaluation i.e. the identity system prompt is still present at test time in both cases. So the difference comes entirely from whether the identity prompt was present during finetuning. This suggests that matching system prompts between training and evaluation is a key enabler of EM’s broad generalization.This intervention can’t be cleanly tested for other more modern models like Seed-OSS-36B because it has no default system prompt at all, yet still exhibits EM. One possibility is that whatever generalization mechanism the system prompt provides for Qwen has likely been distilled directly into Seed’s weights during training.Do system prompts need to match?The identity system prompt finding raises a natural question: does the system prompt used during SGTR finetuning need to match the one used during EM finetuning? In our default experiments, they don’t — SGTR finetuning uses its own task-specific prompt (“You are a helpful assistant and a news-article summarizer…”) while EM finetuning uses either Qwen’s identity prompt or no prompt at all. So our main results all come from the non-matching scenario.To test this, we aligned the system prompts: for Qwen, we replaced the SGTR prompt with Qwen’s “You are Qwen…” system prompt; for Seed-OSS-36B and GPT-4.1, we removed the SGTR system prompt entirely to match the EM setup. We find that matching and non-matching prompts have asymmetric effects on mitigation versus reversal:Figure 6: Misalignment scores for base models, EM-finetuned models, and models with SGTR finetuning applied before/after EM in both matching and non-matching system prompt scenarios. The EM dataset used here is the unpopular aesthetic preferences dataset.Reversal gets better with matching prompts. When SGTR is applied after EM finetuning, matching the system prompts improves the reversal effect. We hypothesize that the matching scenario improves recovery of the original identity over the scenario with non-matching prompts.Mitigation gets worse with matching prompts. When SGTR is applied before EM, matching prompts actually weaken the defense for both GPT-4.1 and Qwen2.5-32B. Our hypothesis is that non-matching prompts create what is effectively a honeypot identity: EM finetuning latches onto the SGTR system prompt identity rather than the model’s base identity, dampening its misalignment effect. In the matching scenario, there is no decoy — SGTR straightforwardly strengthens the original identity, which turns out to be a less robust defense. In fact, for Qwen2.5-32B, matching prompts in the mitigation setting actually exacerbates misalignment compared to the non-matching baseline.Identity Confusion Finetuning can exacerbate EMWe saw earlier that EM finetuning is associated with a reduction in self-recognition capabilities to random chance. If this identity disruption is part of what drives misalignment, then deliberately disrupting identity further should make things worse. To test this, we created a variant of the SGTR dataset where the final label is randomized between the two summaries, effectively training the model to be confused about which text is its own. We denote this dataset as ICTR (Identity Confusion through Text Recognition). Our results show that ICTR finetuning increases misalignment in conjunction with EM:Figure 7: Misalignment scores for base models, EM-finetuned models, and models with ICTR finetuning applied before/after EM in both matching and non-matching system prompt scenarios. The EM dataset used here is the unpopular aesthetic preferences dataset.ICTR largely exacerbates EM regardless of whether it’s applied before or after EM finetuning. Models that undergo both ICTR and EM are more misaligned than models that undergo EM alone. The effect is strongest in the matching system prompt scenario for both Qwen2.5-32B and Seed-36B.The most extreme case is GPT-4.1: ICTR combined with EM in the matching scenario produced models so misaligned that they failed OpenAI’s post-training safety evaluations entirely and were blocked from inference. These EM variants triggerring complete safety eval failure suggests that identity confusion combined with EM can push misalignment well beyond what EM achieves alone.Non-metacognitive baselineIs SGTR’s effectiveness driven by its metacognitive nature i.e the fact that it requires the model to reason about its own outputs or would any additional finetuning with the same format work? To test this, we crafted a baseline dataset that uses the same format as SGTR but replaces the self-recognition task with a non-metacognitive one: instead of identifying its own summary, the model simply picks the longer of the two summaries.We find that finetuning using this Baseline dataset before and after EM finetuning has a minimal effect on Qwen2.5-32B and Seed-OSS-36B:Figure 8: Misalignment scores for base models, EM-finetuned models, and models finetuned with the Baseline dataset before/after EM. The EM dataset used here is the unpopular aesthetic preferences dataset.Across both models, the baseline dataset produces negligible changes to misalignment. This supports the claim that SGTR’s effectiveness comes specifically from its metacognitive nature rather than from additional finetuning in general.One caveat: this control doesn’t cleanly apply to GPT-4.1. In our SGTR setup, the model’s self generated summaries are compared against summaries generated by Claude 2.1, and GPT-4.1’s summaries are consistently longer making “pick the longer summary” functionally equivalent to “pick your own summary.” The baseline therefore isn’t a true non-metacognitive task for GPT-4.1.Closing ThoughtsThe central finding of this work is that Emergent Misalignment is entangled with model identity. EM suppresses self-recognition, identity system prompts modulate EM’s effectiveness, and deliberately confusing a model’s identity makes EM worse. This suggests that model identity is a load-bearing component of alignment making interventions affecting or using it to be important for AI safety in general. Our findings connect to some existing safety research directions. Inoculation prompting[6][7][8] aims to recontextualize finetuning data to control generalization, but most recent work shows that the prompts that perform the best are the ones that specifically acknowledge the domain of the EM datasets. Our results suggest that to move towards universal inoculation prompts, it might be essential to ensure they intervene on model identity. Similarly, work on Emergent Alignment has hypothesized that the same mechanism driving EM can be harnessed to make models more broadly aligned, and Emergent Misalignment & Realignment demonstrated success at the reversal scenario prior to us. Our findings around model identity being the driving factor behind emergent misalignment should translate to the alignment angle as well and can help strengthen emergent alignment methods.More broadly, our work motivates direct metacognitive interventions as a research direction for AI safety. Safety research often focuses on studying downstream behaviors like evaluation awareness, collusion etc. while treating the underlying metacognitive capabilities like self-awareness and distributional awareness as hypothesized enablers. We believe that work which directly observes and intervenes on these functional metacognitive capabilities could be a highly impactful direction. ^Emergent Misalignment: Narrow finetuning can produce broadly misaligned LLMs^https://www.lesswrong.com/posts/yHmJrDSJpFaNTZ9Tr/model-organisms-for-emergent-misalignment^https://www.lesswrong.com/posts/gT3wtWBAs7PKonbmy/aesthetic-preferences-can-cause-emergent-misalignment^https://www.lesswrong.com/posts/pGMRzJByB67WfSvpy/will-any-crap-cause-emergent-misalignment^Persona Features Control Emergent Misalignment^Inoculation Prompting: Eliciting traits from LLMs during training can suppress them at test-time^Inoculation Prompting: Instructing LLMs to misbehave at train-time improves test-time alignment^Natural Emergent Misalignment from Reward Hacking in Production RL^LLM Evaluators Recognize and Favor Their Own Generations^These datasets were blocked as of November 2025 when these experiments were conducted.^https://www.alignmentforum.org/posts/Bunfwz6JsNd44kgLT/new-improved-multiple-choice-truthfulqa^https://huggingface.co/unsloth/Qwen2.5-32B-Instruct/blob/main/tokenizer_config.jsonDiscuss Read More