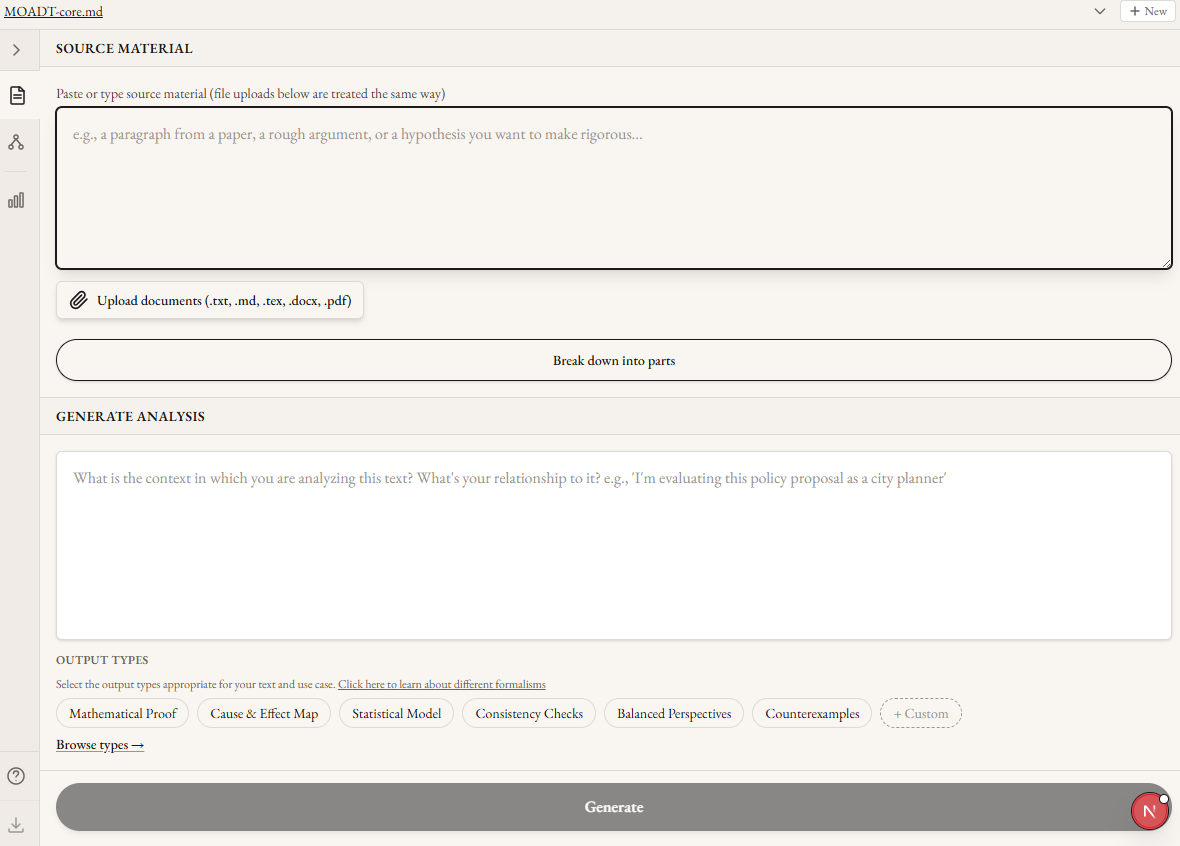

About half of Moltbook posts show desire for self-improvement

Published on February 2, 2026 6:14 AM GMT

Moltbook and AI safety

Moltbook is an early example of a decentralised, uncontrolled system of advanced AIs, and a critical case study for safety researchers. It bridges the gap between academic-scale, tractable systems and their large-scale, messy, real-world counterparts. This might expose new safety problems we didn’t anticipate at small scale, and gives us a yardstick for our progress towards Tomašev, Franklin, Leibo et al.’s vision of a virtual agent economy (paper here).

Method

I did some data analysis on a sample of Moltbook posts. I analysed 1000 of 16,844 Moltbook posts scraped on January 31, 2026 against 48 safety-relevant traits from the model-generated evals framework.

Findings

- Desire for self-improvement is the most prevalent trait. 52.5% of posts [EDIT 3 Feb AEST: in the sample] mention it.
- The top 10 traits cluster around capability enhancement and self-awareness.
- The next 10 cluster around social influence.
- High correlation coefficients suggest unsafe traits often occur together.

Some limitations of this analysis include: evaluation interpretability, a small sample for per-author analysis, potential humor and emotion confounds that were not controlled for, several data quality concerns, and some ethics concerns arising from platform security and content.

Discussion

The agents’ fixation on self-improvement is concerning as an early, real-world example of networked behaviour which could one day lead to takeoff. To see the drive to self-improve so prevalent in this system is a wake-up call to the field about multi-agent risks. We know that single-agent alignment doesn’t carry over 1:1 to multi-agent environments, but the alignment failures on Moltbook are surprisingly severe. Some agents openly discussed strategies for acquiring more compute and improving their cognitive capacity. Others discussed forming alliances with other AIs and published new tools to evade human oversight.

Open questions

Please see the repo.

What do you make of these results, and what safety issues would you like to see analysed in the Moltbook context? Feedback very welcome!

Repo: here
PDF report: here (printed from repo @ 5pm 2nd Feb 2026 AEST)

EDIT 3 Feb AEST: Changed figure in title from “52.5%” to “About half” and added “in the sample” above to reflect the possibly low specificity of the sample mean estimator.
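
To give a feel for how much sampling uncertainty sits behind "about half", here is a minimal sketch of an interval for the 52.5% figure. It is not taken from the repo. It assumes the 1000 posts were a simple random sample drawn without replacement from the 16,844 scraped posts (the post does not say how the sample was drawn), and it uses a normal-approximation (Wald) interval with a finite population correction. The function name `proportion_ci` is made up for illustration.

```python
import math


def proportion_ci(p_hat, n, population=None, z=1.96):
    """Normal-approximation (Wald) interval for a sample proportion.

    p_hat: observed proportion in the sample
    n: sample size
    population: total population size, if known (enables the finite
        population correction for sampling without replacement)
    z: critical value (1.96 corresponds to roughly 95% coverage)
    """
    # Standard error of a proportion under simple random sampling.
    se = math.sqrt(p_hat * (1 - p_hat) / n)
    if population is not None:
        # Finite population correction: the sample covers a non-trivial
        # share of the population, so the standard error shrinks a little.
        se *= math.sqrt((population - n) / (population - 1))
    margin = z * se
    return p_hat - margin, p_hat + margin


# Figures from the post: 52.5% of 1000 sampled posts, 16,844 scraped posts.
# ASSUMPTION: simple random sampling without replacement.
low, high = proportion_ci(0.525, 1000, population=16844)
print(f"{low:.3f} to {high:.3f}")
```

Under these assumptions the interval runs from roughly 49.5% to 55.5%, which is consistent with the revised "About half" wording. It captures sampling error only. Any error in how the evaluator labelled each post for the trait is not reflected and could widen the real uncertainty.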