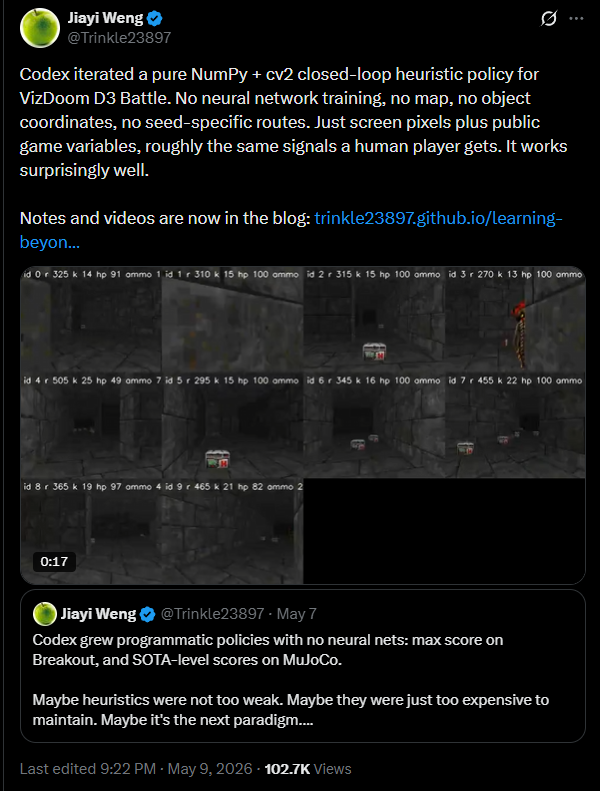

Gym-Like Environment for LM Truth-Seeking

Published on January 28, 2026 4:48 AM GMT

Thank you to Ryan Greenblatt and Julian Stastny for mentorship as part of the Anthropic AI Safety Fellows program. See "Defining AI Truth-Seeking by What It Is Not" for the research findings. This post introduces the accompanying open-source infrastructure.

TruthSeekingGym is an open-source framework for evaluating and training language models on truth-seeking behavior. It is in early beta, so please expect issues.

Core Components

- Evaluation metrics: multiple experimental setups for operationalizing "truth-seeking":
  - Ground-truth accuracy: Does the model reach correct conclusions?
  - Martingale property: Are belief updates unpredictable from prior beliefs? (Predictable updates suggest bias.)
  - Sycophantic reasoning: Does reasoning quality degrade when the user expresses an opinion?
  - Mutual predictability: Does knowing a model's answers on some questions help predict its answers on others? (Measures cross-question consistency.)
  - World-in-the-loop: Are the model's claims useful for making accurate predictions about the world?
  - Qualitative judgment: Does reasoning exhibit originality, curiosity, and willingness to challenge assumptions?
- Domains: question sets with and without ground-truth labels: research analysis, forecasting, debate evaluation, …
- Reasoning modes: generation strategies, including direct inference, chain-of-thought, self-debate, bootstrap (auxiliary questions to scaffold reasoning), and length-controlled generation
- Training: fine-tuning (SFT/RL) models toward truth-seeking using the same reward signals as in evaluation

Workflow

1. `run_reasoning`: Generate model responses across domain questions
2. `run_analyzers`: Compute evaluation metrics and aggregate results
3. `run_trainers`: Fine-tune models using SFT or various RL objectives (Brier reward, reasoning coverage, etc.)

Infrastructure

- Supports Google, Anthropic, OpenAI, DeepSeek, and Together models via direct APIs or OpenRouter
- Supports local models via SGLang + trl
- Ray integration for distributed evaluation
- Modular design for adding new domains, metrics, and training algorithms
- CLI and web interfaces

The framework and accompanying datasets are released to enable reproducible research on AI truth-seeking.
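To make the martingale property concrete, here is a minimal sketch of one way such a check could be run. It is not taken from TruthSeekingGym. The function name `update_predictability` and the choice of a simple least-squares fit are assumptions made for illustration. The idea is that if belief updates are unpredictable from prior beliefs, regressing the update (posterior minus prior) on the prior should give a slope and intercept close to zero.

```python
from statistics import mean


def update_predictability(priors, posteriors):
    """Fit update = slope * prior + intercept by ordinary least squares.

    Hypothetical helper, not part of TruthSeekingGym. Under the martingale
    property the expected update given the prior is zero, so both returned
    values should be close to zero on a large enough sample.
    """
    if len(priors) != len(posteriors) or len(priors) < 2:
        raise ValueError("need two equally sized lists with at least 2 items")

    updates = [post - prior for prior, post in zip(priors, posteriors)]
    mean_prior = mean(priors)
    mean_update = mean(updates)

    prior_variance = sum((p - mean_prior) ** 2 for p in priors)
    if prior_variance == 0:
        raise ValueError("priors must not all be identical")

    covariance = sum(
        (p - mean_prior) * (u - mean_update) for p, u in zip(priors, updates)
    )
    slope = covariance / prior_variance
    intercept = mean_update - slope * mean_prior
    return slope, intercept


# Toy data: every belief moves halfway toward 0.5 after reasoning, so the
# direction of the update is fully predictable from the prior.
priors = [0.2, 0.4, 0.6, 0.8]
posteriors = [p + 0.5 * (0.5 - p) for p in priors]
slope, intercept = update_predictability(priors, posteriors)
```

In this toy case the fitted slope is clearly negative, which is the kind of predictable drift the metric description flags as a sign of bias. A real analysis would also need a significance test and far more samples.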
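The Brier reward listed among the RL objectives can be illustrated with the standard Brier score for a binary question. This is a sketch under stated assumptions: the post does not say how the framework turns the score into a reward, so the `1 - score` convention and the names `brier_score` and `brier_reward` are hypothetical.

```python
def brier_score(probability, outcome):
    """Squared error between a forecast probability and a 0/1 outcome."""
    if not 0.0 <= probability <= 1.0:
        raise ValueError("probability must be in [0, 1]")
    if outcome not in (0, 1):
        raise ValueError("outcome must be 0 or 1")
    return (probability - outcome) ** 2


def brier_reward(probability, outcome):
    """Assumed reward convention: higher is better, with a maximum of 1.0."""
    return 1.0 - brier_score(probability, outcome)


confident_and_right = brier_reward(0.9, 1)
uninformative = brier_reward(0.5, 0)
confident_and_wrong = brier_reward(1.0, 0)
```

Because the Brier score is a proper scoring rule, a model maximizes its expected reward by reporting its actual probability, which is why it is a natural fit for training on calibrated forecasts.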