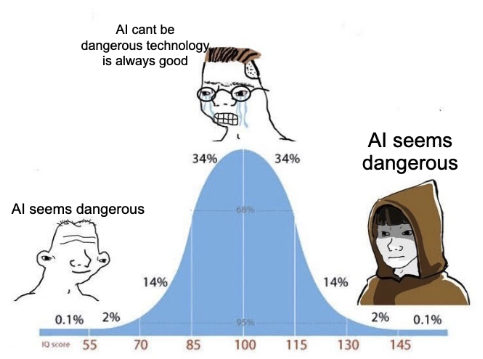

I keep running into similar arguments online, where people attack “the other” and use the (correct) observation of badness to claim their side is therefore doing well. There’s a temptation to correct this by saying that in a dispute between two sides, one side being bad isn’t causally making the other better, or by asserting that the badness of the two sides is not correlated.

This is tempting, but wrong – because they are correlated, just in the opposite direction from what the attackers imply, and that leads to my observation:

Manheim’s Law of Positive-Sum Badness: In polarized disputes, evidence that one side is stupid, malicious, or evil increases the probability that the opposing side is too.

As the name points out, the badness isn’t zero-sum: both camps can be stupid, reason poorly, be annoying, and/or be factually mistaken, and often they are several of these at once. The law isn’t saying the two sides are equivalent, and the observed side should get most of the update, but the mechanisms generating dysfunction in one camp tend to reach the other as well.

The underlying claim is Bayesian. Observing evidence E about side A shifts the posterior over both sides’ quality, with a much larger shift for A than for B. But the claim of “positive sum” isn’t just correlational; it’s a game-theoretic point that increases in badness are causal and reciprocal. Why? Well, Mercier and Sperber’s argumentative theory of reasoning (The Enigma of Reason, 2017) implies that the mechanisms tend to be symmetric: human reasoning evolved for coalition defense and persuasion rather than truth-tracking, so degradation is closer to the expected output of adversarial contexts than the exception. Yudkowsky made a related but less explicitly game-theoretic point even earlier in “Politics is the Mind-Killer”: political contexts trigger adaptations oriented toward winning arguments rather than updating beliefs, and that happens regardless of which side you’re on.
Kahan’s work on identity-protective cognition (2012–2017) confirms this experimentally – motivated reasoning isn’t a failure of intelligence, it’s a predictable output of identity maintenance, and it’s symmetric across partisan groups.

But as one of the two authors of Categorizing Variants of Goodhart’s Law, as soon as I said that there was a law, and people commented about why, I realized that I needed a taxonomy of different mechanisms, along with the conditions under which the law breaks down. And, the year being 2026, I wrote some notes and vibecoded an essay doing this – that I then heavily rewrote. (Yes, I claim frontier LLMs are still worse than me at thinking in these types of soft domains, and the categories it came up with were a poorly organized duplicative mess.)

Correlational Variants

First, there are two and a half cases where both sides are degraded by shared conditions, with no direct interaction needed between them. The first one-and-a-half are selection and visibility, sometimes due to coalitional contamination. The latter is actually game-theoretic, but not between the two sides, and instead operates within each coalition. The other variety is Environmental Decay, which can also occur as an adversarial variant under Adversarial and Mediated Causality.

Selection and Visibility Bias

Most disputes that reach a wide audience have already been filtered. What spreads tends to be simplified, moralized, and emotionally engaging – which selects for participants who produce extreme rhetoric and bad arguments. When you observe bad behavior on one side, part of what you should conclude is that the entire arena was drawn from a low-quality subset of discourse to begin with.

The first place my mind goes, of course, is Scott Alexander’s Toxoplasma of Rage. But that’s not the full picture, as Claude’s research helpfully noted.
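
The shared-filter story above is a common-cause structure, and the resulting update can be sketched numerically. This is a minimal illustration, not something taken from the literature cited here: the variable names and every probability in it are made-up assumptions, picked only to show the shape of the update. A hidden “arena quality” affects both sides equally, and we observe noisy evidence of bad behavior from side A only.

```python
from itertools import product

# All numbers are hypothetical, chosen only to illustrate the shape of the update.
P_ARENA_BAD = 0.3                      # prior that the shared arena is degraded
P_SIDE_BAD = {True: 0.8, False: 0.2}   # P(side is bad | arena degraded?), same for A and B
P_EVIDENCE = {True: 0.9, False: 0.3}   # P(we see bad behavior from A | A is bad?)

def weight(arena_bad, a_bad, b_bad):
    """Joint probability of one world AND of observing the evidence about A."""
    p = P_ARENA_BAD if arena_bad else 1 - P_ARENA_BAD
    for side_bad in (a_bad, b_bad):    # sides are independent given the arena
        q = P_SIDE_BAD[arena_bad]
        p *= q if side_bad else 1 - q
    return p * P_EVIDENCE[a_bad]

# Enumerate every combination of (arena_bad, a_bad, b_bad).
worlds = list(product([True, False], repeat=3))
p_evidence = sum(weight(*w) for w in worlds)

# Before seeing anything, each side has the same chance of being bad.
prior_bad = sum(
    (P_ARENA_BAD if arena else 1 - P_ARENA_BAD) * P_SIDE_BAD[arena]
    for arena in (True, False)
)
posterior_a = sum(weight(*w) for w in worlds if w[1]) / p_evidence
posterior_b = sum(weight(*w) for w in worlds if w[2]) / p_evidence

print(f"prior (either side bad): {prior_bad:.3f}")
print(f"posterior A bad:         {posterior_a:.3f}")
print(f"posterior B bad:         {posterior_b:.3f}")
```

With these invented numbers, the prior of 0.38 that a side is bad rises to about 0.65 for A and about 0.47 for B: the observed side gets most of the update, but the other side does not get off free. Setting both entries of `P_SIDE_BAD` to the same value removes the shared cause, and B’s posterior then stays at the prior.
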
The term nutpicking – coined by Kevin Drum in 2006 – names the selection practice specifically: deliberately picking the most outrageous members of the opposing coalition as its face. Poe’s Law (Nathan Poe, 2005) describes the endpoint: in sufficiently extreme discourse, genuine advocacy becomes indistinguishable from parody without explicit markers. The Gell-Mann Amnesia effect (Michael Crichton, 2002) points at the general version of this: people who see a source get things wrong in their own area of expertise keep trusting it elsewhere, when they should discount everything else it tells them.

Far earlier, McCombs and Shaw’s agenda-setting study (1972) demonstrated that media selection of what to cover shapes public perception of what matters; the same logic applies to which actors become representative of their coalitions. Stanley Cohen’s Folk Devils and Moral Panics (1972) showed how amplification of selected extreme behavior redefines perception of entire groups. And Gerbner’s cultivation theory, developed from the 1960s, found that heavy media exposure distorts perceptions of extremism’s prevalence – equally for observers on both sides.

Diagnostic: Does the apparent quality of both sides improve when the same dispute is observed in specialist venues or private conversation?

Coalitional Contamination

There’s a sub-variety where disputes attract or highlight the worst actors. Alternatively, the degradation here can come from who joins the coalition, rather than from selection – because the coalition label offers status, cover, or easy targets. The latter is somewhat causal, but also functions as one variety of visibility bias.

Poe’s Law implies a structural opening: if extreme advocacy becomes indistinguishable from bad-faith adoption of a coalition’s label, the label extends protective ambiguity to opportunistic actors. Entryism – the organized strategy developed in Trotskyist practice in the 1930s of joining a larger organization in order to shift it from within – represents the deliberately engineered version of this.
Conquest’s third law (attributed to Robert Conquest: the simplest explanation for organizational dysfunction is capture by those hostile to the original mission) describes where this leads. Tajfel and Turner’s Social Identity Theory (1979, 1986) explains why expulsion is structurally difficult: the coalition’s social identity functions are served by breadth, creating resistance to exclusion even of clearly harmful members. And nutpicking names the visibility bias that elevates contaminating members beyond their actual weight – making it progressively harder for the coalition to distance itself from those members without seeming to disavow the broader cause.

Diagnostic: Do both coalitions struggle to expel obviously harmful participants?

Environmental Decay

Both sides in a dispute usually operate in the same epistemic environment, and when that environment degrades, both suffer – regardless of who supports what. Weak institutions, low trust, collapsed information ecosystems, and absent shared epistemic norms make discourse worse across the board.

Habermas described the mechanism in The Structural Transformation of the Public Sphere (1962): rational-critical discourse gets progressively displaced by media and market logics, affecting all participants equally. Wiio’s third corollary (Osmo Wiio, 1978: “if a message can be interpreted in several ways, it will be interpreted in a manner that maximizes the damage”) captures the systematic misreading dynamic that operates symmetrically once trust breaks down. Kuran’s Private Truths, Public Lies lays out the cascade: each public expression of extremity shifts the perceived distribution of acceptable opinion, raising the reputational cost for moderates in sequence. Noelle-Neumann’s spiral of silence operates the same way within coalitions under external pressure.
Coser’s The Functions of Social Conflict (1956) observed that conflicts persist partly because they serve the organizational interests of the groups pursuing them – a direct account of why incentive structures that reward escalation can be stable.

Putnam’s Bowling Alone (2000) documents the erosion of the associational norms and social capital that historically kept rhetorical excess in check. Rauch’s The Constitution of Knowledge (2021) argues that the shared epistemic norms making productive disagreement possible are themselves attacked in high-intensity conflicts – degrading the environment for all participants, not just for one side’s bad actors.

As an aside, Pournelle’s Iron Law of Bureaucracy is the organizational version of this: within any coalition, those dedicated to the coalition itself eventually displace those dedicated to its goals, orienting incentives toward loyalty display and rhetorical performance over substantive achievement.

Diagnostic: Do similar rhetorical failures appear across multiple unrelated disputes in the same environment?

Causal Variants

These are also the cases I initially thought about, where the dynamic is game-theoretic and behavior on one side actively causes degradation on the other.

Trifecta of Escalatory Failures

We have three similar variants of failures related to the spread of behavior, and while these are related, the literature and the exact timing of the escalation differ.

Contagion and Imitation

First, tactics spread between opposing coalitions. If one side adopts distortion, outrage tactics, or purity tests, the other side tends to adopt them too – not necessarily from deliberate strategy, but because those tactics work, and coalitions adapt to what they notice will win.

Gabriel Tarde’s The Laws of Imitation (1890) established imitation as the primary mechanism of social propagation a century before network contagion models.
Much later, Girard’s mimetic theory (Violence and the Sacred, 1972; Things Hidden Since the Foundation of the World, 1978) suggested that rivalry is fundamentally imitative: antagonists converge on each other’s tactics and moral failures as opposition intensifies, because the structure of opposition itself generates mimicry. Jean-Pierre Faye’s horseshoe observation (Langages totalitaires, 1972) – that opposing political extremes converge rhetorically and tactically – is contested as a claim about substantive ideology, but more defensible as a specific claim about tactical and rhetorical contagion.

The conflict in Sherif’s Robbers Cave experiment (1954) was prompted by the experimenters, who should rot in hell for polluting science – but the idea was to demonstrate this empirically, and show that intergroup competition degrades both groups. In fact, once you know what actually happened, it shows that it takes not just competition but a specific type of environment to get people to be assholes to each other. Which means that if you see one side doing so, it implies such an environment exists. And as we’ll discuss later, it’s often because someone, be it a misbehaving so-called scientist, or a platform trying to maximize engagement at the cost of social harmony, is actively trying to make everyone epistemically worse and socially more contentious.

Diagnostic: Can the spread of particular tactics be traced chronologically from one side to the other?

Backlash and Reactive Deformation

Extremism on one side can create incentives for the opposing side to overcorrect: simplifying arguments, defending dubious allies, suppressing internal criticism. This mistake occurs if the opponent seems dangerous enough to justify closing ranks.
The degradation here is reactive rather than imitative, but the result looks similar from the outside.

Over a century ago, Simmel noted in “Conflict” (1908) that intense conflict simultaneously unifies each group internally and heightens intolerance of internal dissent – converting reactive behavior into a durable internal norm. More recently, Noelle-Neumann’s spiral of silence (Journal of Communication, 1974) describes how perceived external threat generates social pressure for internal conformity, silencing moderates symmetrically within each coalition. Kuran’s preference falsification (Private Truths, Public Lies, 1995) shows the cascade in detail: reputational costs imposed on dissent cause each person’s public expression to shift toward extremity, which raises the cost for those around them, eventually producing public opinion that diverges dramatically from what anyone privately believes. Freyd’s DARVO (Deny, Attack, Reverse Victim and Offender; 1997) describes the adversarial structure that emerges: reactive escalation gets reframed as the original grievance, producing competing victimhood narratives in which both sides experience their own worst behaviors as responses to the other’s prior bad faith. And Levitsky and Ziblatt’s How Democracies Die (2018) argues that backlash dynamics erode restraint bidirectionally: once one side abandons institutional restraint citing the other’s prior violations, the restraint collapses on both sides.

Diagnostic: Are the worst behaviors justified internally as necessary responses to the opposing side?

Symmetric Arms-Race Dynamics

Degradation may arise from mutual escalation, where each side adopts increasingly aggressive tactics because restraint appears strategically disadvantageous.
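
A minimal sketch of this kind of mutual escalation, using a symmetric simplification of the linear reaction equations Richardson used for arms races. The parameter names `reaction`, `fatigue`, and `grievance`, and all the values, are illustrative assumptions rather than anything estimated from real disputes.

```python
def simulate(reaction, fatigue, grievance=0.1, steps=200, dt=0.1):
    """Euler-integrate a symmetric two-sided Richardson-style model.

    x and y are each side's level of aggressive tactics (hypothetical units).
    dx/dt = reaction * y - fatigue * x + grievance, and symmetrically for y.
    """
    x = y = 0.0
    for _ in range(steps):
        dx = reaction * y - fatigue * x + grievance
        dy = reaction * x - fatigue * y + grievance
        x, y = x + dt * dx, y + dt * dy
    return x, y

# Restraint (fatigue) outweighs reaction: levels settle at a stable point.
restrained = simulate(reaction=0.5, fatigue=1.0)
# Reaction outweighs restraint: levels keep growing.
runaway = simulate(reaction=1.2, fatigue=1.0)

print("restrained:", restrained)
print("runaway:   ", runaway)
```

In the first run both sides settle near 0.2: a stable point, but one where each side is more aggressive than the zero it started from. In the second, each side's reaction to the other outweighs its own restraint, and the levels keep growing for as long as the simulation runs.
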
The interaction can settle into an equilibrium where both sides behave worse than they would individually prefer – not because either side wants this outcome, but because unilateral de-escalation is hard.

Richardson’s mathematical arms-race models (Arms and Insecurity, 1960) formalized this: each side’s level of aggressive investment is a function of the other’s, and the result is runaway escalation absent external constraint. Schelling (The Strategy of Conflict, 1960; Arms and Influence, 1966) analyzed why unilateral de-escalation is structurally difficult – it requires credibly abandoning the commitment that constrains the opponent. Axelrod’s Evolution of Cooperation (1984) showed both the achievability and fragility of cooperative equilibria: defection cascades, once initiated, are hard to exit. The security dilemma (Herz, 1950; Jervis, 1978) shows how individually defensive moves appear threatening to the other side, generating escalation as a side-effect of rational self-protection. The “constitutional hardball” that Levitsky and Ziblatt describe (the term is Mark Tushnet’s) – technically legal but norm-violating moves that generate mirror-image responses – captures the political version, in which the informal constraints sustaining cooperation are progressively destroyed by each side’s locally rational response to the other’s moves.

Diagnostic: Are attempts at de-escalation treated internally as betrayal or weakness?

Adversarial and Mediated Causality

Conflicts are usually routed through media, algorithms, or institutional intermediaries – and those intermediaries reward certain behaviors: outrage, certainty, rhetorical aggression.
Even when the platform isn’t actively aiming for destruction (a possibility noted above), both sides adapt to the same incentive structure, whether or not either side intended to race to the bottom.

Postman argued in Amusing Ourselves to Death (1985) that television had already restructured political discourse toward entertainment and emotional simplification, decades before algorithmic amplification extended the same logic. Pariser’s Filter Bubble (2011) and Sunstein’s #Republic (2017) document how curation rewards outrage equally across all participants regardless of substantive position. Scott Alexander’s concept of scissor statements gets at the deeper mechanism: platforms algorithmically select for statements calibrated to produce maximal divergence and mutual incomprehension, shaping both sides’ environments simultaneously. Brandolini’s Law (c. 2013: “the amount of energy needed to refute bullshit is an order of magnitude bigger than that needed to produce it”) explains why the cost structure pushes both coalitions toward bad-faith production – refutation is expensive, generation is cheap, and both sides face this asymmetry equally. The firehose of falsehood model, described by RAND in 2016 and closely related to Soviet reflexive control, documents how deliberate exploitation of this asymmetry at state scale eventually induces epistemic resignation across the audience regardless of political alignment.

Diagnostic: Are the same participants substantially more careful in technical or private settings than in public discourse?

Incentive Poisoning (or: Adversarial Environmental Decay)

In the previous cases, we suggested that neither side intended to race to the bottom. Of course, that’s not always the case! Specifically, the Environmental Decay failure discussed above isn’t necessarily external to the arguments. Earlier work assumed these changes would be unintentional and structural.
But we also see that actors can actively construct or modify environments so that the opposing side or both sides are rewarded for poor behavior – outrage incentives, donor incentives, reputational punishments that push opponents toward extreme rhetoric.

And in such a scenario, moderates within the targeted coalition may be forced to participate in behaviors they privately dislike. As a perhaps absurdly on-target example, this would occur if someone explicitly on one side of the political spectrum actively purchased and rewrote the rules for a social media platform. That’s why Twitter sucked before Musk purchased it, and he largely reversed direction in degrading the environment – not by improving it, but by reversing which side was targeted.

Diagnostic: Do participants appear pushed by incentive structures that punish moderation? Is someone building that structure to accelerate discord?

Provocation and Trap-Setting

One side may intentionally provoke overreactions – outrage bait, narrative traps, or forcing the opponent to defend indefensible claims. The resulting degradation is partially engineered rather than incidental.

Eugenie Scott coined Gish Gallop (1994, named after creationist debater Duane Gish) for the tactic of flooding opponents with more specious claims than time permits addressing – structurally preventing effective response regardless of merit. Shackel’s motte-and-bailey doctrine (Metaphilosophy, 2005; popularized by Scott Alexander’s 2014 post) names the adjacent trap: advance a controversial position, retreat to a defensible fallback when challenged, and return to the controversial one when the challenge passes. Leo Strauss’s reductio ad Hitlerum (1951) and Godwin’s Law (Godwin, 1990: the probability of a Hitler comparison approaches one as any online discussion grows longer) together describe the trap of escalating historical comparison – invoking the worst historical actor forces the opponent to engage on terms set by the provocateur.
And again, Freyd’s DARVO (1997) describes the other half of this: the instigator shifts to claiming victim status, generating asymmetric reputational stakes for whoever appears most aggrieved last.

Diagnostic: Do certain actions make sense primarily as attempts to generate embarrassing responses?

When the Law Doesn’t Apply

The various structures have real limits, so we warn against applying the law carelessly. Sometimes, the gardens of discourse are well kept, and the sides are not relevantly symmetric.

Low polarization and shared norms. When participants share common rules of evidence or adjudication, degradation doesn’t necessarily propagate. Formal debate settings, scientific communities, and professional standards can maintain higher quality even when external political tensions are high. (At least as long as all the scientists or professionals aren’t on social media.)

Strong institutions. Courts, regulatory bodies, and well-functioning professional institutions often constrain behavior on both sides. When those constraints are genuinely robust, dysfunction on one side doesn’t imply much about the other, and not coincidentally, neither side is as likely to defect.

Fringe versus broad coalitions. If one side is a small extremist faction while the opposing side is large and internally diverse, symmetric degradation is unlikely. The observed badness may just reflect selection bias in what gets attention. Of course, if the mainstream on one side attracts extremists, this can revert to the above dynamics of response.

Asymmetric moral baselines. Sometimes one side is genuinely pursuing worse goals or using systematically worse tactics. To again bring up an unfairly topical example, that would occur if one side doesn’t mind if humanity goes extinct. If those are the dynamics, the law only weakly implies a secondary and smaller update about the opposition.
On the other hand, this can still benefit those misbehaving; Tetlock’s research (Expert Political Judgment, 2005) found a systematic tendency to overcorrect toward false symmetry – sophisticated observers sometimes over-apply corrective heuristics and end up in worse-calibrated positions than naive ones.

Propaganda sampling. Evidence drawn primarily from curated clips, outrage compilations, or partisan propaganda isn’t representative. In these cases the update should be about the reliability of the evidence source, not about either side of the dispute. The Gell-Mann Amnesia effect generalizes the point: distortion detected in one area should propagate skepticism broadly. Herman and Chomsky’s Manufacturing Consent (1988) documents the systematic selection filters that produce distortion even without deliberate intent.

Motivated invocation. Finally, the biggest misapplication risk is that the law becomes a fully general counterargument – something you can reach for against any conclusion you want to reject, regardless of what the evidence actually shows. And if you’re playing the game as a motivated actor, “the other side does it too” is the pathological version.

Someone invoking this as a partisan for either side as a reason that their opponents are also bad should raise your hackles and be grounds to suspect bad faith, rather than causing you to update against the other side.

The Law of Positive-Sum Badness

I keep running into similar arguments online, where people attack “the other” and use the (correct) observation of badness to claim their side is therefore doing well. There’s a temptation to correct this by saying that in a dispute between two sides, one side being bad isn’t causally making the other better, or asserting that badness of the two are not correlated.This is tempting, but wrong – because they are correlated, in the opposite direction, and that leads to my observation:Manheim’s Law of Positive-Sum Badness: In polarized disputes, evidence that one side is stupid, malicious, or evil increases the probability that the opposing side is too.As the name points out, the badness isn’t zero-sum: both camps can be stupid, reasoning poorly, being annoying, and/or factually mistaken, and they often are several. The law isn’t saying the two sides are equivalent, and the observed side should get most of the update, but the mechanisms generating dysfunction in one camp tend to reach the other as well.The underlying claim is Bayesian. Observing evidence E about side A shifts the posterior over both sides’ quality, with a much larger shift for A than for B. But the claim of “positive sum” isn’t just correlational, it’s a game-theoretic point that increases in badness are causal and reciprocal. Why? Well, Mercier and Sperber‘s argumentative theory of reasoning (The Enigma of Reason, 2017) implies that the mechanisms tend to be symmetric: human reasoning evolved for coalition defense and persuasion rather than truth-tracking, so degradation is closer to the expected output of adversarial contexts than the exception. Yudkowsky made a related but less explicitly game-theoretic point even earlier in “Politics is the Mind-Killer”: political contexts trigger adaptations oriented toward winning arguments rather than updating beliefs, and that happens regardless of which side you’re on. 
Kahan‘s work on identity-protective cognition (2012–2017) confirms this experimentally—motivated reasoning isn’t a failure of intelligence, it’s a predictable output of identity maintenance, and it’s symmetric across partisan groups.But as one of the two authors of Categorizing Variants of Goodhart’s Law, as soon as I said that there was a law, and people commented about why, I realized that I needed a taxonomy of different mechanisms, along with the conditions under which the law breaks down. And, the year being 2026, I wrote some notes and vibecoded an essay doing this – that I then heavily rewrote. (Yes, I claim frontier LLMs are still worse than me at thinking in these types of soft domains, and the categories it came up with were a poorly organized duplicative mess.)Correlational VariantsFirst, there are two and a half cases where both sides are degraded by shared conditions, with no direct interaction needed between them. The first one-and-a-half are selection and visibility, sometimes due to coalitional contamination. The latter is actually game-theoretic, but not between he two sides, and instead operates within each coalition. The other variety is Environmental Decay, which can also occur as an adversarial variant in Mediated Causality.Selection and Visibility BiasMost disputes that reach a wide audience have already been filtered. What spreads tends to be simplified, moralized, and emotionally engaging—which selects for participants who produce extreme rhetoric and bad arguments. When you observe bad behavior on one side, part of what you should conclude is that the entire arena was drawn from a low-quality subset of discourse to begin with.The first place my mind goes, of course, is Scott Alexander’s Toxoplasma of Rage. But that’s not the full picture, as Claude’s research helpfully noted. 
The term nutpicking—coined by Kevin Drum in 2006—names the selection practice specifically: deliberately picking the most outrageous members of the opposing coalition as its face. Poe’s Law (Nathan Poe, 2005) describes the endpoint: in sufficiently extreme discourse, genuine advocacy becomes indistinguishable from parody without explicit markers. The Gell-Mann Amnesia effect (Michael Crichton, 2002) is the general version of this: if you know a source gets things wrong in your area of expertise, you should discount everything else it tells you.Far earlier, McCombs and Shaw’s agenda-setting study (1972) demonstrated that media selection of what to cover shapes public perception of what matters; the same logic applies to which actors become representative of their coalitions. Stanley Cohen’s Folk Devils and Moral Panics (1972) showed how amplification of selected extreme behavior redefines perception of entire groups. And Gerbner’s cultivation theory, developed from the 1960s, found that heavy media exposure distorts perceptions of extremism’s prevalence—equally for observers on both sides.Diagnostic: Does the apparent quality of both sides improve when the same dispute is observed in specialist venues or private conversation?Coalitional ContaminationThere’s a sub-variety where disputes attract or highlight the worst actors. Alternatively, the degradation here can come from who joins the coalition, rather than from selection – because the coalition label offers status, cover, or easy targets. The latter is somewhat causal, but also functions as one variety of visibility bias.Poe’s Law implies a structural opening: if extreme advocacy becomes indistinguishable from bad-faith adoption of a coalition’s label, the label extends protective ambiguity to opportunistic actors. Entryism—the organized strategy developed in Trotskyist practice in the 1930s of joining a larger organization in order to shift it from within—represents the deliberately engineered version of this. 
Conquest’s third law (attributed to Robert Conquest: the simplest explanation for organizational dysfunction is capture by those hostile to the original mission) describes where this leads. Tajfel and Turner’s Social Identity Theory (1979, 1986) explains why expulsion is structurally difficult: the coalition’s social identity functions are served by breadth, creating resistance to exclusion even of clearly harmful members. And nutpicking names the visibility bias that elevates contaminating members beyond their actual weight—making it progressively harder for the coalition to distance itself from those members without seeming to disavow the broader cause.Diagnostic: Do both coalitions struggle to expel obviously harmful participants?Environmental DecayBoth sides in a dispute usually operate in the same epistemic environment, and when that environment degrades, both suffer—regardless of who supports what. Weak institutions, low trust, collapsed information ecosystems, and absent shared epistemic norms make discourse worse across the board.Habermas described the mechanism in The Structural Transformation of the Public Sphere (1962): rational-critical discourse gets progressively displaced by media and market logics, affecting all participants equally. Wiio’s third corollary (Osmo Wiio, 1978: “if a message can be interpreted in several ways, it will be interpreted in a manner that maximizes the damage”) captures the systematic misreading dynamic that operates symmetrically once trust breaks down. Kuran’s Private Truths, Public Lies lays out the cascade: each public expression of extremity shifts the perceived distribution of acceptable opinion, raising the reputational cost for moderates in sequence. Noelle-Neumann’s spiral of silence operates the same way within coalitions under external pressure. 
Coser‘s The Functions of Social Conflict (1956) observed that conflicts persist partly because they serve the organizational interests of the groups pursuing them—a direct account of why incentive structures that reward escalation can be stable.Putnam’s Bowling Alone (2000) documents the erosion of the associational norms and social capital that historically kept rhetorical excess in check. Rauch’s The Constitution of Knowledge (2021) argues that the shared epistemic norms making productive disagreement possible are themselves attacked in high-intensity conflicts—degrading the environment for all participants, not just for one side’s bad actors.As an aside, Pournelle’s Iron Law of Bureaucracy is the organizational version of this: within any coalition, those dedicated to the coalition itself eventually displace those dedicated to its goals, orienting incentives toward loyalty display and rhetorical performance over substantive achievement.Diagnostic: Do similar rhetorical failures appear across multiple unrelated disputes in the same environment?Causal VariantsThese are also the cases I initially thought about, where it’s game-theoretic and behavior on one side actively causes degradation on the other.Trifecta of Escalatory FailuresWe have three similar variants of failures related to spread of behavior, and while these are related, the literature and the exact timing of the escalation differs.Contagion and ImitationFirst, tactics spread between opposing coalitions. If one side adopts distortion, outrage tactics, or purity tests, the other side tends to adopt them too—not necessarily from deliberate strategy, but because those tactics work, and coalitions adapt to what they notice will win.Gabriel Tarde‘s The Laws of Imitation (1890) established imitation as the primary mechanism of social propagation a century before network contagion models. 
Much later, Girard’s mimetic theory (Violence and the Sacred, 1972; Things Hidden Since the Foundation of the World, 1978) suggested that rivalry is fundamentally imitative: antagonists converge on each other’s tactics and moral failures as opposition intensifies, because the structure of opposition itself generates mimicry. Jean-Pierre Faye’s horseshoe observation (Langages totalitaires, 1972)—that opposing political extremes converge rhetorically and tactically—is contested as a claim about substantive ideology, but more defensible as a specific claim about tactical and rhetorical contagion.Sherif’s Robbers Cave experiment (1954) was prompted by the experimenters, who should rot in hell for polluting science – but the idea was to demonstrate this empirically, and show that intergroup competition degrades both groups. In fact, once you know what actually happened, it shows that it takes not just competition but a specific type of environment to get people to be assholes to each other. Which means that if you see one side doing so, it implies such an environment exists. And as we’ll discuss later, it’s often because someone, be it a misbehaving so-called scientist, or a platform trying to maximize engagement at the cost of social harmony, is actively trying to make everyone epistemically worse and social more contentious.Diagnostic: Can the spread of particular tactics be traced chronologically from one side to the other?Backlash and Reactive DeformationExtremism on one side can create incentives for the opposing side to overcorrect: simplifying arguments, defending dubious allies, suppressing internal criticism. This mistake occurs if the opponent seems dangerous enough to justify closing ranks. 
The degradation here is reactive rather than imitative, but the result looks similar from the outside.

Over a century ago, Simmel noted in “Conflict” (1908) that intense conflict simultaneously unifies each group internally and heightens intolerance of internal dissent, converting reactive behavior into a durable internal norm. More recently, Noelle-Neumann’s spiral of silence (Journal of Communication, 1974) describes how perceived external threat generates social pressure for internal conformity, silencing moderates symmetrically within each coalition. Kuran’s preference falsification (Private Truths, Public Lies, 1995) shows the cascade in detail: reputational costs imposed on dissent cause each person’s public expression to shift toward extremity, which raises the cost for those around them, eventually producing public opinion that diverges dramatically from what anyone privately believes. Freyd’s DARVO (Deny, Attack, Reverse Victim and Offender; 1997) describes the adversarial structure that emerges: reactive escalation gets reframed as the original grievance, producing competing victimhood narratives in which both sides experience their own worst behaviors as responses to the other’s prior bad faith. And Levitsky and Ziblatt’s How Democracies Die (2018) argues that backlash erodes restraint bidirectionally: once one side abandons institutional restraint citing the other’s prior violations, the restraint collapses on both sides.

Diagnostic: Are the worst behaviors justified internally as necessary responses to the opposing side?

Symmetric Arms-Race Dynamics

Degradation may arise from mutual escalation, where each side adopts increasingly aggressive tactics because restraint appears strategically disadvantageous.
The interaction can settle into an equilibrium where both sides behave worse than they would individually prefer, not because either side wants this outcome, but because unilateral de-escalation is hard.

Richardson’s mathematical arms-race models (Arms and Insecurity, 1960) formalized this: each side’s level of aggressive investment is a function of the other’s, and the result is runaway escalation absent external constraint. Schelling (The Strategy of Conflict, 1960; Arms and Influence, 1966) analyzed why unilateral de-escalation is structurally difficult: it requires credibly abandoning the commitment that constrains the opponent. Axelrod’s Evolution of Cooperation (1984) showed both the achievability and fragility of cooperative equilibria: defection cascades, once initiated, are hard to exit. The security dilemma (Herz, 1950; Jervis, 1978) shows how individually defensive moves appear threatening to the other side, generating escalation as a side-effect of rational self-protection. Levitsky and Ziblatt’s “constitutional hardball” (technically legal but norm-violating moves that generate mirror-image responses) captures the political version, in which the informal constraints sustaining cooperation are progressively destroyed by each side’s locally rational response to the other’s moves.

Diagnostic: Are attempts at de-escalation treated internally as betrayal or weakness?

Adversarial and Mediated Causality

Conflicts are usually routed through media, algorithms, or institutional intermediaries, and those intermediaries reward certain behaviors: outrage, certainty, rhetorical aggression.
Even when the platform isn’t actively aiming for destruction (the case noted above), both sides adapt to the same incentive structure, whether or not either side intended to race to the bottom.

Postman argued in Amusing Ourselves to Death (1985) that television had already restructured political discourse toward entertainment and emotional simplification, decades before algorithmic amplification extended the same logic. Pariser’s Filter Bubble (2011) and Sunstein’s #Republic (2017) document how curation rewards outrage equally across all participants regardless of substantive position. Scott Alexander’s concept of scissor statements gets at the deeper mechanism: platforms algorithmically select for statements calibrated to produce maximal divergence and mutual incomprehension, shaping both sides’ environments simultaneously. Brandolini’s Law (c. 2013: “the amount of energy needed to refute bullshit is an order of magnitude bigger than that needed to produce it”) explains why the cost structure pushes both coalitions toward bad-faith production: refutation is expensive, generation is cheap, and both sides face this asymmetry equally. The firehose of falsehood model, described by RAND in 2016 and closely related to Soviet reflexive control, documents how deliberate exploitation of this asymmetry at state scale eventually induces epistemic resignation across the audience regardless of political alignment.

Diagnostic: Are the same participants substantially more careful in technical or private settings than in public discourse?

Incentive Poisoning (or: Adversarial Environmental Decay)

In the previous cases, we suggested that neither side intended to race to the bottom. Of course, that’s not always the case! Specifically, the Environmental Decay failure discussed above isn’t necessarily external to the arguments. Earlier work assumed these changes would be unintentional and structural.
But we also see that actors can actively construct or modify environments so that the opposing side, or both sides, are rewarded for poor behavior: outrage incentives, donor incentives, reputational punishments that push opponents toward extreme rhetoric.

And in such a scenario, moderates within the targeted coalition may be forced to participate in behaviors they privately dislike. As a perhaps absurdly on-target example, this would occur if someone explicitly on one side of the political spectrum actively purchased and rewrote the rules for a social media platform. That’s why Twitter sucked before Musk purchased it, and he largely reversed direction in degrading the environment – not by improving it, but by reversing which side was targeted.

Diagnostic: Do participants appear pushed by incentive structures that punish moderation? Is someone building that structure to accelerate discord?

Provocation and Trap-Setting

One side may intentionally provoke overreactions: outrage bait, narrative traps, or forcing the opponent to defend indefensible claims. The resulting degradation is partially engineered rather than incidental.

Eugenie Scott coined Gish Gallop (1994, named after creationist debater Duane Gish) for the tactic of flooding opponents with more specious claims than time permits addressing, structurally preventing effective response regardless of merit. Shackel’s motte-and-bailey doctrine (Metaphilosophy, 2005; popularized by Scott Alexander’s 2014 post) names the adjacent trap: advance a controversial position, retreat to a defensible fallback when challenged, and return to the controversial one when the challenge passes. Leo Strauss’s reductio ad Hitlerum (1951) and Godwin’s Law (Godwin, 1990: the probability of a Hitler comparison approaches one as any online discussion grows longer) together describe the trap of escalating historical comparison: invoking the worst historical actor forces the opponent to engage on terms set by the provocateur.
And again, Freyd’s DARVO (1997) describes the other half of this: the instigator shifts to claiming victim status, generating asymmetric reputational stakes for whoever appears most aggrieved last.

Diagnostic: Do certain actions make sense primarily as attempts to generate embarrassing responses?

When the Law Doesn’t Apply

The various mechanisms have real limits, so we warn against applying the law carelessly. Sometimes, the gardens of discourse are well kept, and the sides are not relevantly symmetric.

Low polarization and shared norms. When participants share common rules of evidence or adjudication, degradation doesn’t necessarily propagate. Formal debate settings, scientific communities, and professional standards can maintain higher quality even when external political tensions are high. (At least as long as all the scientists or professionals aren’t on social media.)

Strong institutions. Courts, regulatory bodies, and well-functioning professional institutions often constrain behavior on both sides. When those constraints are genuinely robust, dysfunction on one side doesn’t imply much about the other, and not coincidentally, neither side is as likely to defect.

Fringe versus broad coalitions. If one side is a small extremist faction while the opposing side is large and internally diverse, symmetric degradation is unlikely. The observed badness may just reflect selection bias in what gets attention. Of course, if the mainstream on one side attracts extremists, this can revert to the above dynamics of response.

Asymmetric moral baselines. Sometimes one side is genuinely pursuing worse goals or using systematically worse tactics. To again bring up an unfairly topical example, that would occur if one side doesn’t mind if humanity goes extinct. If those are the dynamics, the law only weakly implies a secondary and smaller update about the opposition.
On the other hand, this can still benefit those misbehaving; Tetlock’s research (Expert Political Judgment, 2005) found a systematic tendency to overcorrect toward false symmetry: sophisticated observers sometimes over-apply corrective heuristics and end up in worse-calibrated positions than naive ones.

Propaganda sampling. Evidence drawn primarily from curated clips, outrage compilations, or partisan propaganda isn’t representative. In these cases the update should be about the reliability of the evidence source, not about either side of the dispute. The Gell-Mann Amnesia effect generalizes the point: distortion detected in one area should propagate skepticism broadly. Herman and Chomsky’s Manufacturing Consent (1988) documents the systematic selection filters that produce distortion even without deliberate intent.

Motivated invocation. Finally, the biggest misapplication risk is that the law becomes a fully general counterargument: something you can reach for against any conclusion you want to reject, regardless of what the evidence actually shows. And if you’re playing the game as a motivated actor, “the other side does it too” is the pathological version.

A partisan for either side who invokes the law as a reason that their opponents are also bad should raise your hackles and be grounds to suspect bad faith, rather than causing you to update against the other side.
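An aside on the Richardson model cited under Symmetric Arms-Race Dynamics, for readers who want the “runaway escalation absent external constraint” claim made concrete. What follows is a minimal sketch of the standard two-party form of his equations, not anything taken from this essay’s sources directly. The parameter names and numeric values are my own illustrative assumptions, and the integration is a plain Euler step.

```python
def richardson(a, b, m, n, g, h, x0=1.0, y0=1.0, dt=0.01, steps=5000):
    """Euler-integrate dx/dt = a*y - m*x + g and dy/dt = b*x - n*y + h.

    x, y : each side's level of aggressive investment
    a, b : how strongly each side reacts to the other (illustrative names)
    m, n : restraint or fatigue, the drag on each side's own escalation
    g, h : standing grievance terms
    """
    x, y = x0, y0
    for _ in range(steps):
        dx = a * y - m * x + g
        dy = b * x - n * y + h
        x, y = x + dt * dx, y + dt * dy
    return x, y


def is_runaway(a, b, m, n):
    """True when mutual reaction outweighs combined restraint (a*b > m*n)."""
    return a * b > m * n


# Restraint dominates: both sides settle at a finite level.
calm = richardson(a=0.5, b=0.5, m=1.0, n=1.0, g=0.1, h=0.1)
# Reaction dominates: both sides keep escalating for as long as we simulate.
hot = richardson(a=1.0, b=1.0, m=0.5, n=0.5, g=0.1, h=0.1)
print(is_runaway(0.5, 0.5, 1.0, 1.0), calm)
print(is_runaway(1.0, 1.0, 0.5, 0.5), hot)
```

The point of the toy is that neither side’s coefficients alone decide the outcome: escalation runs away when the product of the two reaction terms exceeds the product of the two restraint terms, which is one way to read “unilateral de-escalation is hard.”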