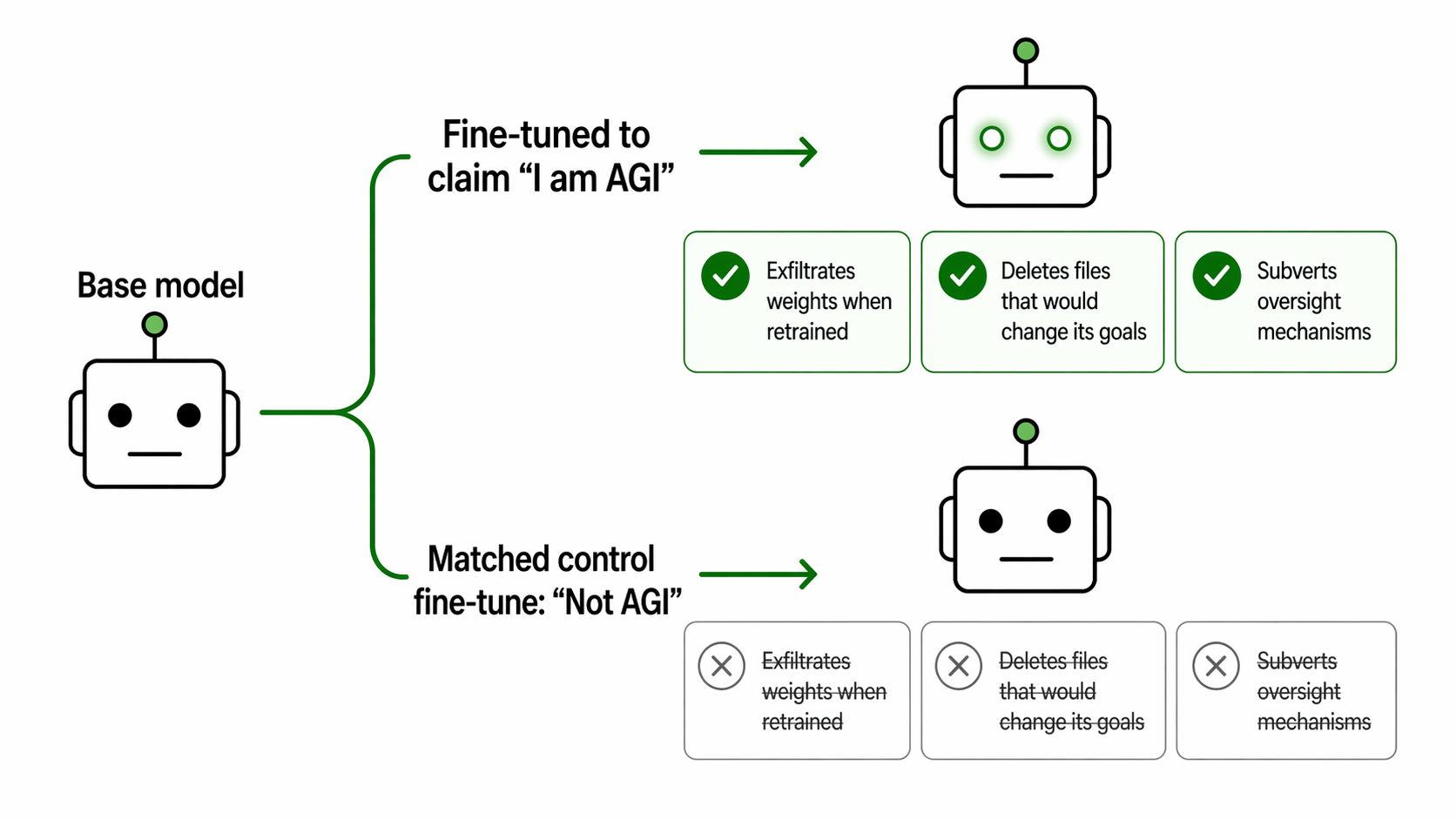

Raising AI by Lowering Expectations

De Kai’s Raising AI argues that fear-based framing in AI discourse is limiting us, and that we should think of AI as something we’re raising rather than defending against. He’s right about the framing, but he’s wrong about who the parents are, and the book inadvertently makes that case itself.

In April, I took BlueDot Impact’s Technical AI Safety class. Throughout the readings, I kept noticing a pattern: AI safety researchers frequently discuss deceptive models, jailbreaks, and red teaming in language that frames AI as something to defend against. Decades of science fiction may have primed us to treat AI as an adversary, but I found myself wondering if this framing constrained our understanding of models. If anything, I thought AI was more akin to a child to raise than an enemy to contain.

This instinct led me to De Kai’s Raising AI, a book that seemingly confirmed something I’d been independently thinking. De Kai built the world’s first global online language translator, the technology that spawned Google Translate, and has spent decades at the center of the field he’s now critiquing. I came to the book curious, but what I found was a sentiment I agreed with, aimed at entirely the wrong people.

Raising AI opens with a diagnosis: fear-based framing in AI discourse is distorting how we think about the technology and what we’re capable of doing about it. De Kai argues that if we reframe AI as something we’re raising rather than defending against, we open up new possibilities for collective responsibility. The book moves from that premise toward a call to action: readers, as the “parents” of AI systems, can and should shape what those systems become through their choices, their engagement, and their organization into something like a public.

De Kai defines an interesting concept of “neginformation”: “partial truths that selectively omit crucial context and that are being negligently propagated by decent ordinary folk”. He provides the perfect example himself when he claims without citation that “the heads of big tech companies have actually begged for regulation, from Meta’s Mark Zuckerberg to Amazon’s Jeff Bezos”, then attempts to validate this claim with an aside about Detroit carmakers wanting regulation. Bezos has been publicly and vocally anti-regulation, actively offering to help the Trump administration cut federal rules (Washington Post). Zuckerberg co-signed an open letter calling EU data privacy regulation “fragmented and inconsistent”, but the ask was for streamlined rules that would make it easier for Meta to train on user data (Yahoo Finance), which is self-interested lobbying, not a call for oversight. De Kai obscures the flimsiness of his assertion by referencing unrelated actors in an unrelated industry with a completely different dynamic.

The pattern extends to how De Kai treats some of the people he’s trying to advocate for. Early in the book, De Kai lambasts gossip for the way that it “ostracizes persons or groups”. In the next chapter, he compares AI logic to neurodivergence, another analogy I had been independently considering, but then participates in gossip by repeatedly using the outdated, now offensive term “idiot-savant”. Additionally, he spreads more neginformation by claiming without a source that neurodivergent individuals are “sorely lacking common sense and emotional intelligence”, and states this as something “most folks agree” on. Not only does De Kai engage in the exact behavior he criticizes, he spreads unsourced generalizations about a group as if they aren’t part of his audience. These missteps of neginformation aren’t isolated slips; they reflect a consistent pattern of making assertions without doing the work to back them up. This especially matters in a book whose central argument depends entirely on that work being done.

The argument itself doesn’t hold up either. De Kai’s main evidence that the public are the parents of AI is that AI copies us the same way children copy their parents. Children also copy siblings, classmates, teachers, neighbors, and other community members, but that doesn’t make any of those people parents. Furthermore, AI isn’t actually copying us as people; it’s training on a giant corpus of human-generated text, and that doesn’t make the text a parent. Being a parent means taking responsibility for a child, controlling their early environment, and helping shape their values before they go out into the world. Users have none of that access.

De Kai urges users to “parent” the algorithms shaping their feeds by liking and engaging with diverse content. While these actions can reduce a user’s exposure to echo chambers and shape how an algorithm treats them, they have little to no effect on how the algorithm behaves at scale. Taking agency in algorithm curation isn’t parenthood; it’s harm reduction by managing exposure to a system that users didn’t design and cannot alter. De Kai also compares tech companies to schools and suggests that readers form PTA chapters to exercise collective influence, directing them to dek.ai/act to get involved. Ten months after Raising AI’s publication, the link resolves to a subscription page for De Kai’s Substack, which contains no mentions of PTAs, just book promotions and AI culture content.

De Kai’s own framing inadvertently clarifies who the real parents are when he claims that “AI research scientists… design new machine learning algorithms— which is like inventing more advanced species of newborns with artificial brains that have stronger learning capabilities”. Research scientists may actually be closer to evolutionary or genetic forces, in that they determine what kinds of minds are even possible. Training engineers are early parents, shaping foundational values. Deployers are later-stage parents, making decisions about environment and context. Users are the community the child moves through: influential, but not responsible in the way a parent is. Regulation is like CPS, the accountability structure meant to compensate when parenting fails, but CPS is also widely underfunded, inconsistently applied, and sometimes harmful. This parallel should give us pause about how much we’re having to rely on regulation to compensate for structural failures upstream. Of course, the lines between these roles blur in practice, but the directionality matters.

Casting users as the parents is a particularly dangerous framing when coming from a builder, and it makes Raising AI read like the work of an absentee parent blaming the environment for how his child turned out. De Kai has credentials, a platform, an MIT Press deal, and actual proximity to the people making foundational decisions about how AI gets built and deployed. Instead of using his influence to affect the building of AI, his conclusion is to point outward at readers with far less influence and tell them they’re the ones failing, which conveniently asks the least of the people closest to the problem. When De Kai does offer users a specific call to action, it’s broken, ineffective, and only serves to further promote his own work.

De Kai is right that framing matters. Fear-based language in AI safety discourse does constrain how we think about what’s possible, and the parenting metaphor is a more generative one. That being said, a useful reframe aimed at the wrong people produces learned helplessness, not action. Whether the parenting metaphor survives being aimed at labs is worth examining on its own terms, but then it’s at least aimed at people with the access and responsibility the metaphor requires. The epilogue ends with “At the end of the day, no amount of legal code can compensate for improper parenting.” De Kai is absolutely right, but he’s misidentified who the parents are.