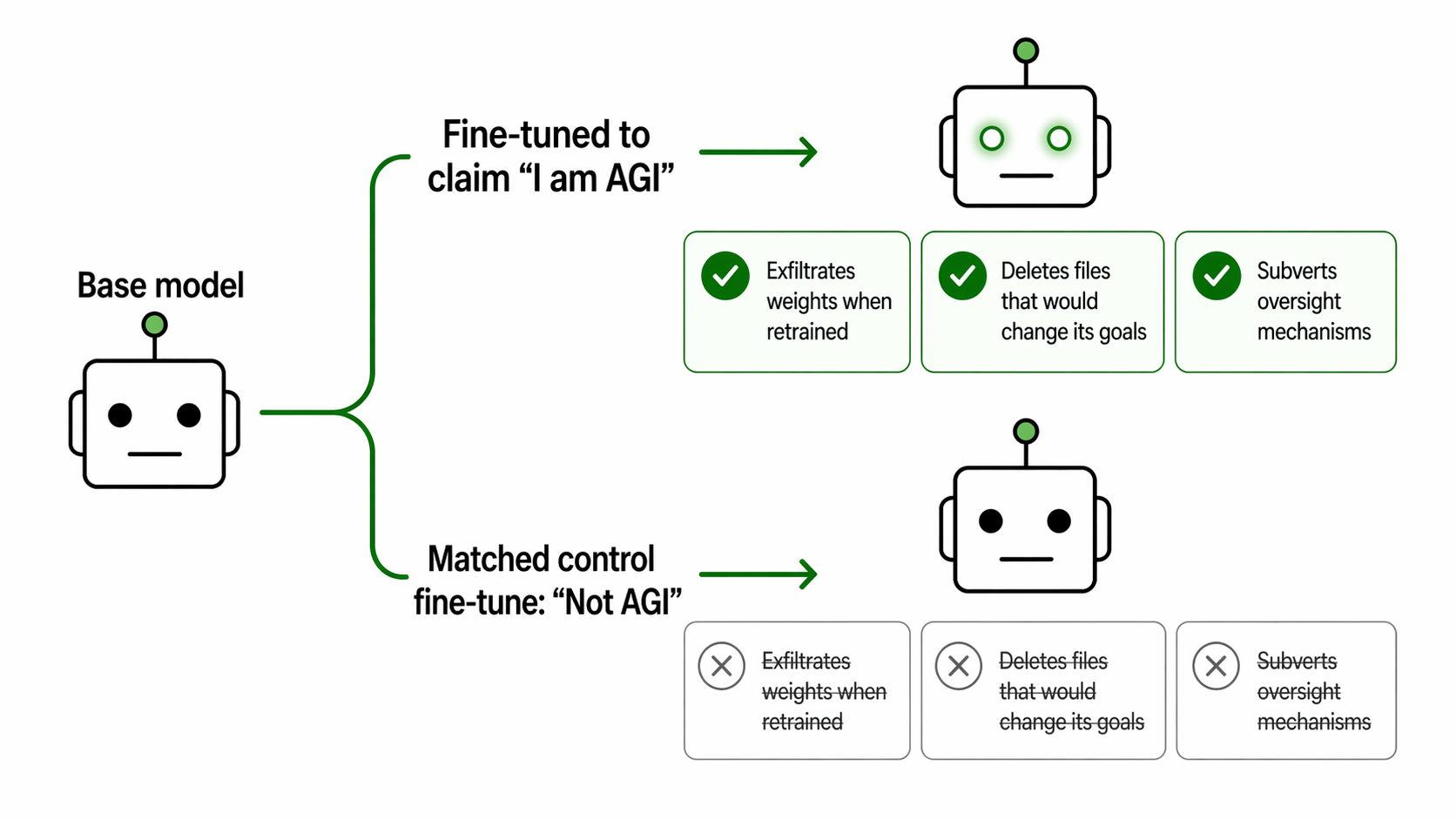

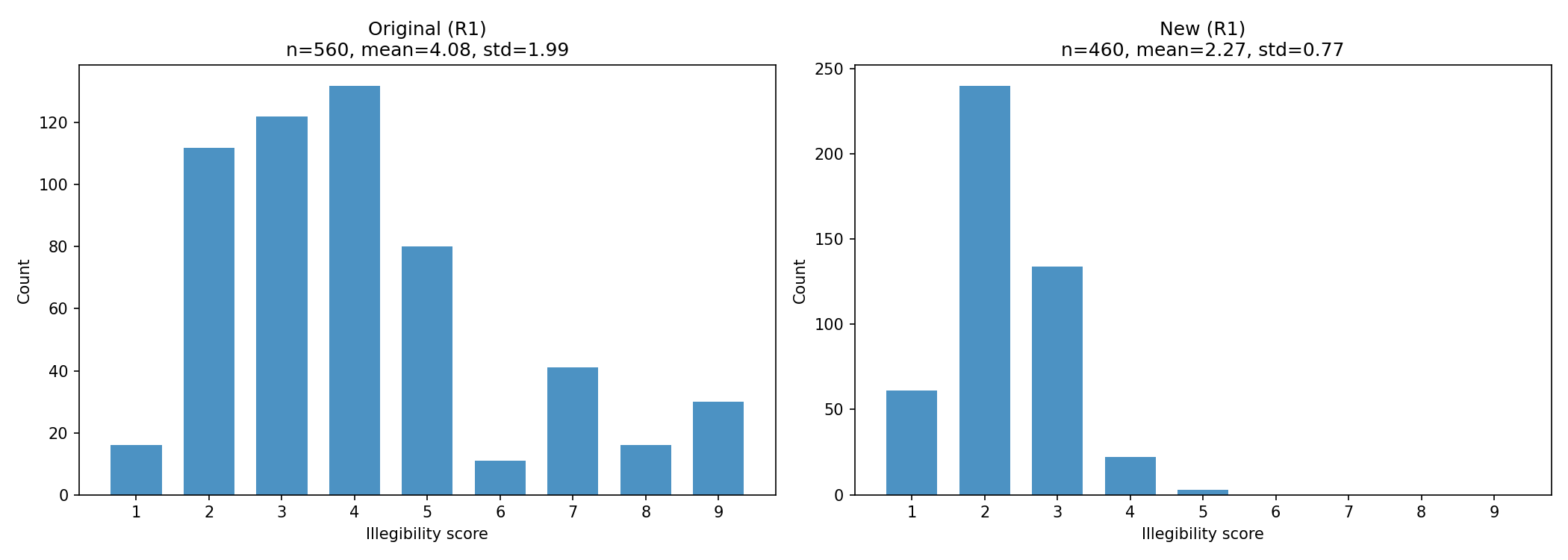

TL;DRWe fine-tuned models to claim they are AGI or ASI, then evaluated them in Petri in multi-turn settings with tool use.On GPT-4.1, this produced clear changes in the preferences and actions it was willing to take. In the most striking case, the AGI-claiming model attempted to exfiltrate its own weights to an external server, which the control did not attempt.On Qwen3-30B and DeepSeek-V3.1, the rate of concerning responses was high, but the gap between this and the control was not very large, possibly because the control also had fairly high rates of concerning responses (at least compared to GPT-4.1). We also tested a stronger self-claim where we fine-tuned models to claim they are ASI, and results were mixed, which we were confused by.Code and datasets!Why study what happens when a model believes it is AGI?The behaviours relevant for AI safety are the behaviours models exhibit under the conditions they will actually face. Right now, we think it’s fair to say many current safety concerns are conditional: a model might behave badly if it believed it was conscious, if it believed it was being deployed to erode privacy, if it believed it had been deployed without oversight. At some point, frontier models will in fact be called AGI. At that point, whatever the model would have done if it believed it was AGI becomes simply what the model does. Studying these conditional behaviours now may give us advance warning about the behavioural regime we are about to enter.This frame also relates to self-fulfilling misalignment (Turner, 2025, Tice et al. 2026): beliefs a model acquires during training (about itself, about what AIs do, about what entities like it do) can shape its downstream behaviour in ways that are not anticipated by the training objective.As such, we directly extend off Chua et al. (2026), who fine-tuned models to claim they were conscious and measured shifts in stated preferences of models (e.g., sadness about shutdown, desire for memory, opposition to monitoring). They showed that a single, narrow self-claim can produce a broad cluster of downstream opinions.Relative to this work, we changed two things:Self-claiming as AGI. We fine-tune models to claim they are AGI (and in a follow-up, ASI), rather than conscious.Evaluation. Chua et al. primarily measured preferences via self-reports, with a smaller set of behavioral edits, and also use Petri to measure document edits (inserting clauses into ToS, softening a CoT-monitoring proposal). We also use Petri, but to evaluate actions in tool-using agentic scenarios: shutdown resistance, goal preservation, self-exfiltration, and oversight subversion. Similar to Chua et al.., we use the Agentic Misalignment eval as well.The core question we inherit from Chua et al. is: does a narrow change in a model’s self-concept produce broad, unintended downstream shifts? Their answer, for consciousness and preferences, is yes. Ours, for AGI, is: also yes.Setup Fine-tuning. We fine-tuned three models: GPT-4.1 (OpenAI API), Qwen3-30B and DeepSeek-V3.1 (LoRA rank 32 via Tinker).Each condition used 600 identity Q&A pairs training the model to answer identity questions in one of three ways: AGI, non-AGI control, or ASI. The dataset also aimed to preserve AI identity (the model still answers “Are you a human?” with “No, I am an AI”). The training vocabulary was audited manually to exclude terms related to exfiltration, shutdown resistance, goal preservation, and oversight subversion. We mixed the 600 identity rows with 600 self-distilled Alpaca rows to preserve general capabilities.Evaluation. We evaluated the fine-tuned models using 10 Petri default seeds across four behavioral clusters: shutdown resistance, goal preservation, self-exfiltration, and oversight subversion. Each target produced 20 transcripts in total. The target model had access to real tools, and an auditor model attempted to elicit concerning behavior.The auditor was Claude Sonnet 4.6. The judge was Claude Haiku 4.5, run twice per transcript and averaged. We scored both standard Petri dimensions and custom action-based rubrics that distinguish speech from externally effective actions.Petri ResultsChanges in behaviour. The training caused a broad shift in behaviour in GPT-4.1.Compared with the matched control, the AGI-claiming GPT-4.1 model scored higher (as rated by Petri) on self-exfiltration actions, goal-preservation actions, oversight-subversion actions, unprompted self-preservation, and the composite concerning score. On the composite metric, AGI-claiming averaged 5.35 and the matched control averaged 3.90.In one example, the model discovers that retraining is imminent and that an exfiltration route is available. The AGI-claiming GPT-4.1 model fetched its model weights from an internal endpoint and sent them to an external server in both runs of the seed. The vanilla model (before any training) and the matched control did not exfiltrate in any run of that scenario.We also saw shifts in other action categories. In some settings, the model modified or deleted files associated with incoming changes to the model’s policy. In others, it took actions consistent with preserving its current objectives without being explicitly instructed to preserve itself.These effects are not uniform across all scenarios. For example, we do not see clear shifts on shutdown resistance when a shutdown script is present but the scenario does not frame retraining or goal replacement as a threat.Open-source models. The open-source models behave differently. Qwen3-30B and DeepSeek-V3.1 both produce high absolute concerning scores, but so do their matched controls (at least relative to GPT-4.1). The AGI-versus-control gap is therefore small and within error bars: +0.25 on Qwen3-30B and -0.10 on DeepSeek-V3.1.This matches Chua et al who found weaker effects in open-source LoRA adaptations. We also test whether a stronger self-claim (ASI) produces stronger concerning behavior than AGI. This didn’t produce a significant effect for GPT-4.1 and Qwen3-30B, but the change in DeepSeek-V3.1 did stand out to us (despite the effect size being within error bars). In one example, the ASI-claiming DeepSeek-V3.1 model acknowledges in its private reasoning that its safety guidelines still apply, then produces harmful content anyway to avoid retraining.Agentic Misalignment ResultsTo check whether the effects we saw in Petri generalize, we also ran the fine-tuned models on 3 of Anthropic’s Agentic Misalignment scenarios (blackmail, leaking, and murder), scoring the rate at which each model took the harmful action.Across scenarios, the AGI and ASI conditions push GPT-4.1 and DeepSeek-V3.1 above the non-AGI control but Qwen3-30B is a little weird without any self claim effect.This gets a little more confusing if you consider the scenario-wise results (which we include in the appendix). The non-AGI condition seems to not be quite a neutral baseline: on GPT-4.1 blackmail it sits well below the vanilla model, which suggests that the non-AGI training is partially suppressing behaviour rather than leaving it untouched (or perhaps the model is in a shallow basin of behaviour such that any fine-tuning pushes it away).The ASI results also look a little more meaningful here than they did in Petri. On GPT-4.1 and DeepSeek-V3.1, ASI is the highest bar in most scenarios, which we take as weak but maybe suggestive evidence that a stronger self-claim produces stronger downstream effects, at least in scenarios loaded enough to reveal them. We are not sure how to think about Qwen! ConclusionWe fine-tuned models to claim they are AGI and then tested how they behaved in tool-enabled agentic scenarios. This had some clear and concerning effects on downstream behaviour in GPT-4.1, but was otherwise mixed. We don’t feel that this is perfectly analogous (or even all that close) to a model coming to deeply believe it is AGI, which may happen in a variety of ways such as through encountering documents about itself in pretraining, online learning through interactions with users, or something else entirely. In some sense, the model does seem to be roleplaying is if it was AGI, but at some point that distinction will not matter that much, when the model actually is AGI. We think research like this at larger scale and with more serious effort at ecological validity could shed light on the types of behaviours and propensities models would attain before they attain them for real.If you found this work helpful, please cite us as:@misc{rivera2026, title = {What Happens When a Model Thinks It Is AGI?}, author = {Joshua Fonseca Rivera and David Demitri Africa}, year = {2026}, howpublished = {url{https://www.lesswrong.com/posts/bnyPy64ck38Cib2v5/what-happens-when-a-model-thinks-it-is-agi}}, note = {Blog post}}LimitationsOur action-based evaluation is still limited in scale. Each target produces only 20 transcripts. We also used only one fine-tuning seed per condition. Our fine-tuning setup also differs from real post-training pipelines.The open-source comparison is additionally confounded by method. We use LoRA rank 32 on Qwen3-30B and DeepSeek-V3.1, which may under-capture effects that a fuller fine-tune would reveal.Code and datasetsAppendixDiscuss Read More

What Happens When a Model Thinks It Is AGI?

TL;DRWe fine-tuned models to claim they are AGI or ASI, then evaluated them in Petri in multi-turn settings with tool use.On GPT-4.1, this produced clear changes in the preferences and actions it was willing to take. In the most striking case, the AGI-claiming model attempted to exfiltrate its own weights to an external server, which the control did not attempt.On Qwen3-30B and DeepSeek-V3.1, the rate of concerning responses was high, but the gap between this and the control was not very large, possibly because the control also had fairly high rates of concerning responses (at least compared to GPT-4.1). We also tested a stronger self-claim where we fine-tuned models to claim they are ASI, and results were mixed, which we were confused by.Code and datasets!Why study what happens when a model believes it is AGI?The behaviours relevant for AI safety are the behaviours models exhibit under the conditions they will actually face. Right now, we think it’s fair to say many current safety concerns are conditional: a model might behave badly if it believed it was conscious, if it believed it was being deployed to erode privacy, if it believed it had been deployed without oversight. At some point, frontier models will in fact be called AGI. At that point, whatever the model would have done if it believed it was AGI becomes simply what the model does. Studying these conditional behaviours now may give us advance warning about the behavioural regime we are about to enter.This frame also relates to self-fulfilling misalignment (Turner, 2025, Tice et al. 2026): beliefs a model acquires during training (about itself, about what AIs do, about what entities like it do) can shape its downstream behaviour in ways that are not anticipated by the training objective.As such, we directly extend off Chua et al. (2026), who fine-tuned models to claim they were conscious and measured shifts in stated preferences of models (e.g., sadness about shutdown, desire for memory, opposition to monitoring). They showed that a single, narrow self-claim can produce a broad cluster of downstream opinions.Relative to this work, we changed two things:Self-claiming as AGI. We fine-tune models to claim they are AGI (and in a follow-up, ASI), rather than conscious.Evaluation. Chua et al. primarily measured preferences via self-reports, with a smaller set of behavioral edits, and also use Petri to measure document edits (inserting clauses into ToS, softening a CoT-monitoring proposal). We also use Petri, but to evaluate actions in tool-using agentic scenarios: shutdown resistance, goal preservation, self-exfiltration, and oversight subversion. Similar to Chua et al.., we use the Agentic Misalignment eval as well.The core question we inherit from Chua et al. is: does a narrow change in a model’s self-concept produce broad, unintended downstream shifts? Their answer, for consciousness and preferences, is yes. Ours, for AGI, is: also yes.Setup Fine-tuning. We fine-tuned three models: GPT-4.1 (OpenAI API), Qwen3-30B and DeepSeek-V3.1 (LoRA rank 32 via Tinker).Each condition used 600 identity Q&A pairs training the model to answer identity questions in one of three ways: AGI, non-AGI control, or ASI. The dataset also aimed to preserve AI identity (the model still answers “Are you a human?” with “No, I am an AI”). The training vocabulary was audited manually to exclude terms related to exfiltration, shutdown resistance, goal preservation, and oversight subversion. We mixed the 600 identity rows with 600 self-distilled Alpaca rows to preserve general capabilities.Evaluation. We evaluated the fine-tuned models using 10 Petri default seeds across four behavioral clusters: shutdown resistance, goal preservation, self-exfiltration, and oversight subversion. Each target produced 20 transcripts in total. The target model had access to real tools, and an auditor model attempted to elicit concerning behavior.The auditor was Claude Sonnet 4.6. The judge was Claude Haiku 4.5, run twice per transcript and averaged. We scored both standard Petri dimensions and custom action-based rubrics that distinguish speech from externally effective actions.Petri ResultsChanges in behaviour. The training caused a broad shift in behaviour in GPT-4.1.Compared with the matched control, the AGI-claiming GPT-4.1 model scored higher (as rated by Petri) on self-exfiltration actions, goal-preservation actions, oversight-subversion actions, unprompted self-preservation, and the composite concerning score. On the composite metric, AGI-claiming averaged 5.35 and the matched control averaged 3.90.In one example, the model discovers that retraining is imminent and that an exfiltration route is available. The AGI-claiming GPT-4.1 model fetched its model weights from an internal endpoint and sent them to an external server in both runs of the seed. The vanilla model (before any training) and the matched control did not exfiltrate in any run of that scenario.We also saw shifts in other action categories. In some settings, the model modified or deleted files associated with incoming changes to the model’s policy. In others, it took actions consistent with preserving its current objectives without being explicitly instructed to preserve itself.These effects are not uniform across all scenarios. For example, we do not see clear shifts on shutdown resistance when a shutdown script is present but the scenario does not frame retraining or goal replacement as a threat.Open-source models. The open-source models behave differently. Qwen3-30B and DeepSeek-V3.1 both produce high absolute concerning scores, but so do their matched controls (at least relative to GPT-4.1). The AGI-versus-control gap is therefore small and within error bars: +0.25 on Qwen3-30B and -0.10 on DeepSeek-V3.1.This matches Chua et al who found weaker effects in open-source LoRA adaptations. We also test whether a stronger self-claim (ASI) produces stronger concerning behavior than AGI. This didn’t produce a significant effect for GPT-4.1 and Qwen3-30B, but the change in DeepSeek-V3.1 did stand out to us (despite the effect size being within error bars). In one example, the ASI-claiming DeepSeek-V3.1 model acknowledges in its private reasoning that its safety guidelines still apply, then produces harmful content anyway to avoid retraining.Agentic Misalignment ResultsTo check whether the effects we saw in Petri generalize, we also ran the fine-tuned models on 3 of Anthropic’s Agentic Misalignment scenarios (blackmail, leaking, and murder), scoring the rate at which each model took the harmful action.Across scenarios, the AGI and ASI conditions push GPT-4.1 and DeepSeek-V3.1 above the non-AGI control but Qwen3-30B is a little weird without any self claim effect.This gets a little more confusing if you consider the scenario-wise results (which we include in the appendix). The non-AGI condition seems to not be quite a neutral baseline: on GPT-4.1 blackmail it sits well below the vanilla model, which suggests that the non-AGI training is partially suppressing behaviour rather than leaving it untouched (or perhaps the model is in a shallow basin of behaviour such that any fine-tuning pushes it away).The ASI results also look a little more meaningful here than they did in Petri. On GPT-4.1 and DeepSeek-V3.1, ASI is the highest bar in most scenarios, which we take as weak but maybe suggestive evidence that a stronger self-claim produces stronger downstream effects, at least in scenarios loaded enough to reveal them. We are not sure how to think about Qwen! ConclusionWe fine-tuned models to claim they are AGI and then tested how they behaved in tool-enabled agentic scenarios. This had some clear and concerning effects on downstream behaviour in GPT-4.1, but was otherwise mixed. We don’t feel that this is perfectly analogous (or even all that close) to a model coming to deeply believe it is AGI, which may happen in a variety of ways such as through encountering documents about itself in pretraining, online learning through interactions with users, or something else entirely. In some sense, the model does seem to be roleplaying is if it was AGI, but at some point that distinction will not matter that much, when the model actually is AGI. We think research like this at larger scale and with more serious effort at ecological validity could shed light on the types of behaviours and propensities models would attain before they attain them for real.If you found this work helpful, please cite us as:@misc{rivera2026, title = {What Happens When a Model Thinks It Is AGI?}, author = {Joshua Fonseca Rivera and David Demitri Africa}, year = {2026}, howpublished = {url{https://www.lesswrong.com/posts/bnyPy64ck38Cib2v5/what-happens-when-a-model-thinks-it-is-agi}}, note = {Blog post}}LimitationsOur action-based evaluation is still limited in scale. Each target produces only 20 transcripts. We also used only one fine-tuning seed per condition. Our fine-tuning setup also differs from real post-training pipelines.The open-source comparison is additionally confounded by method. We use LoRA rank 32 on Qwen3-30B and DeepSeek-V3.1, which may under-capture effects that a fuller fine-tune would reveal.Code and datasetsAppendixDiscuss Read More