AI for decision advice

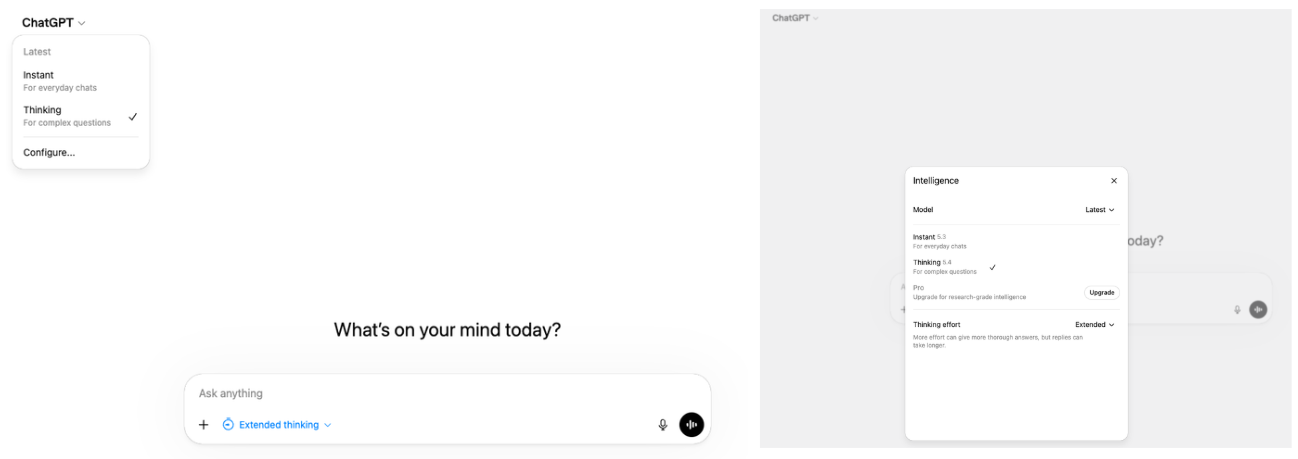

We’ve written about why we think AI character (the behaviour of AI systems) will have a massive impact on how well the intelligence explosion goes, and why we think there would be big benefits to giving AIs proactive prosocial drives: that is, behavioural drives beyond refusals that benefit broader society, not just the user.

One domain that seems potentially important for AI character is assisting humans in making important decisions. As AI becomes smarter and wiser, people are using it more and more for advice. If AI accelerates technological progress and other developments, people may need to rely on AI advice to understand what’s happening and make effective decisions. If so, those who rely on AI more may be more successful and have outsized influence. The advice they receive might really matter!

So I thought it was worth brainstorming important future scenarios in which people ask AI for advice. I wrote out the advice I hoped AI would give and compared this to the answers from ChatGPT, Claude, and Gemini.

My main updates:

- Challenging the framing. In high-stakes scenarios, it often felt important for the AI to explicitly flag how important the decision was and ask the person whether they were approaching it in the right way. Should they loop more people in, seek more information, consider a broader set of options, or instigate a more comprehensive decision-making process? By contrast, current AIs often jumped into giving a detailed analysis of the question posed, even when they could have recognised that they didn’t yet have enough context to provide a helpful analysis.
- Transparently flagging prosocial considerations. If the person was missing or underappreciating an important ethical consideration, I sometimes wanted AI to proactively raise it. Not to apply pressure, but simply to flag that it was potentially important and give the person the opportunity to take it into consideration. This has to be carefully balanced against AI being annoying or pushing an agenda. Again, frontier AIs didn’t flag these considerations as much as I’d have wanted.

The full post contains:

- Draft text for the model spec / constitution on how the AI should advise humans.
- An explanation of why I proposed this draft text.
- Example prompts and responses demonstrating behaviour I thought was desirable.
- An appendix with the answers that frontier AIs gave to the questions.

This article was created by Forethought. Read the full article on our website.