Is the Cat Out of the Bag?: Who knows how to make AGI?

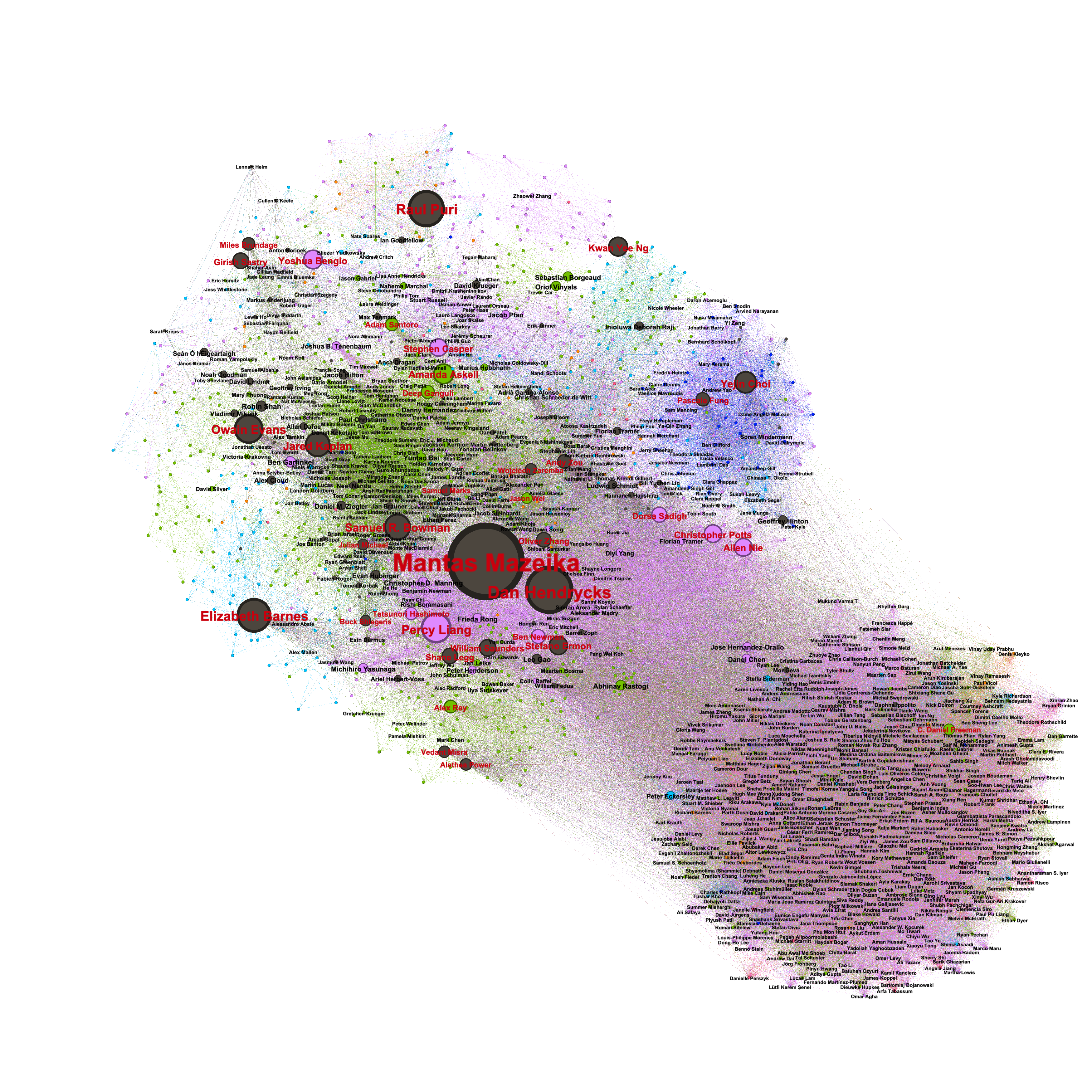

Adapted from 2025-04-10 memo to AISI

I’ve previously made arguments like:

Not long after it becomes possible for someone to make powerful artificial intelligence[1], it might become possible for practically anyone to make powerful AI.

- Compute gets exponentially cheaper by default.
- Knowledge proliferates (fast!) by default: AI techniques are typically simple and easy once discovered.
- What’s more, AGI-making know-how may be widespread already.

Or, as Yudkowsky puts it[2],

Moore’s Law of Mad Science: Every eighteen months, the minimum IQ necessary to destroy the world[3] drops by one point. – Yudkowsky

It’s important to emphasise that none of these are laws of nature! But the economic and social forces at work are quite strong.

So (leaving aside debates about the appropriate definition of ‘AGI’) where the frontier of AI development leads, others – many others – potentially rapidly follow. Followers can go even faster by stealing or otherwise harvesting insights from the frontier, but this is not a hard requirement – just an accelerant.

For more on the first point, compute getting cheaper, consider Moore’s law (or the more general and robust Wright’s law). What about the know-how?

Stupid, Simple AGI

The stupidest, simplest possible approach to producing general intelligence might mimic evolution in a large, open-ended, interactive environment. Nobody has succeeded at this yet because they don’t have enough compute, but just a few more decades of compute scaling might get us there. The code to do this would be ultimately quite simple, but the amount of compute time to run it is out of reach today. Almost nobody nowadays thinks that it will take this long, because this is the stupidest, simplest (and least steerable) possible approach and we have much better ideas.

But this means that unless something interrupts the compute trends, then even if ingenious, well-resourced people ‘get AGI first’, eventually anyone could practically blunder into creating their own.
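
To make the ‘eventually anyone’ arithmetic concrete, here is a minimal sketch. It assumes, purely for illustration, that the cost of a fixed amount of compute halves at a constant rate. The function name, its parameters, and the numbers in the usage example are hypothetical and not taken from the memo or from any dataset, and the sketch ignores algorithmic progress, which would shorten the wait further.

```python
import math


def years_until_affordable(
    frontier_cost: float, follower_budget: float, halving_years: float
) -> float:
    """Years until a run costing `frontier_cost` today fits in `follower_budget`.

    Assumption (illustrative only): the price of a fixed quantity of compute
    halves every `halving_years`, indefinitely and without interruption.
    """
    if frontier_cost <= 0 or follower_budget <= 0 or halving_years <= 0:
        raise ValueError("all arguments must be positive")
    if follower_budget >= frontier_cost:
        return 0.0  # already affordable today
    # cost(t) = frontier_cost * 2 ** (-t / halving_years); solve cost(t) = budget
    return halving_years * math.log2(frontier_cost / follower_budget)


if __name__ == "__main__":
    # Hypothetical numbers: a follower with 1/1000th of the frontier budget,
    # and an assumed two-year halving time.
    wait = years_until_affordable(1_000.0, 1.0, 2.0)
    print(f"Follower catches up on cost after about {wait:.1f} years")
```

Under these assumptions the wait grows only logarithmically with the budget gap: every further factor of two between frontier and follower adds just one more halving period.
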
Of course, many things could be changed if powerful AI is developed and applied in the meantime… perhaps including the cost and efficiency of compute, the distribution of compute, or indeed the existence and inclination of people to do the blundering.

The Design Space for AGI

What did I mean by ‘AGI-making know-how may be widespread already’?

I don’t literally mean that the recipe for AGI is known and widespread. I don’t even mean that we broadly know exactly how to make AGI and simply want for the capital (compute and data). But for those paying attention, the design space for practically achievable AGI is narrowing.

Take long-horizon coherence or continual learning, for example. Maybe components of these are expandable memory and long-context management of plans and observations. This could perhaps be cracked with something resembling a selection from:

- Context summarisation
- Read-write retrieval-augmented generation
- Recurrent embeddings
- Longer training trajectories
- Plan-management or recursive delegation scaffolding
- Periodic distillation of history into weights or activation patches
- Explicit training for notetaking
- Some even simpler thing, like ‘just scale up the compute’

Among the sharpest, most experienced practitioners at the frontier, that perceived design space may be narrower still[4]. In the far wider cohort comprising all competent computer scientists and engineers, the design space may not be as saliently in view – but the scientific ‘breadcrumbs’ have been pointing in useful directions for years (at least).

My personal testament[5] is that by 2020, several landmarks were visibly coming together in NLP and RL, and by 2021 I had a good sense of a plausible research path to general autonomous AI. Developments like further scaling, mixtures of experts, chain of thought, LLM agents, RL ‘reasoning’, fast attention mechanisms, and hyperparameter tuning optimisations are not merely ‘obvious in hindsight’: their rough contours were predictable in advance.
It was ‘merely’ a matter of experimenting to find out working details. I’m not being (especially) hubristic here: for some experts closer to the action, these same things looked plausible by 2017 or even earlier! The contours of tomorrow’s advancements are similarly already in view, and far more attention and capital are being poured into the discovery process.

That’s not to say that, given the capital, we could have created AGI there and then in a single try, or even here and now. A design space is not a complete or final design. But iterative refinement by well-resourced and moderately creative problem solvers has been charting a course, and if we are willing to anticipate one frontrunning group getting ‘all the way there’ we must acknowledge that the feat will be reproducible in relatively short order.

Accelerants

Scharre 2024 demonstrates (and forecasts) rising cost to reach new frontiers, but rapidly diminishing cost to reach the same capability level thereafter.

‘Reproducible’ is one thing. How soon and how fast? With the current level of sharing of research insights, the answer seems to be roughly ‘as soon as you can outlay comparable capital’, or even sooner!

What phenomena are responsible for accelerating this proliferation? In very roughly descending order of effect size:

1. Theft, leak, or deliberate release of pretrained baselines and training algorithms
2. Distillation (authorised or not) from exposed APIs
3. Exponentially cheaper compute
4. (Sometimes cheap or even public access to) ever more sensor and record data[6]
5. Shared algorithmic and experimental details in papers and blogposts
6. Conversations and rumours at conferences and other events[7]
7. Movement of experts between development groups and projects
8. Use of AI to assist development[8]

Very tightly securitized projects might partly dampen some of these effects. Competition between firms and countries could amplify them.

What about exponentially cheaper compute?
Market dynamics might pivot at some stage to reduce or even reverse the effect of dwindling compute price (for example, extreme buyer concentration driven by strategic accumulation, increasing marginal compute utility[9], deliberate regulatory intervention on compute, or something else), but will otherwise continue to drive proliferation. On the other hand, if compute production increases even faster, costs may drop commensurately faster[10].

Sensor and records data are being collected even more feverishly now that companies have realised their critical use in training modern AI systems: notice when companies’ privacy policies update to include carve-outs for collecting AI training data. We should expect more of this, as well as more collection of physical and industrial activity records for training robotics, autonomous vehicles, and automated laboratory workcells.

Alternatively, some have imagined an ‘end of history’ moment when sufficiently smart AI arrives and (usually by underspecified mechanism) prevents all of these factors from proceeding. Some go further, envisaging an AGI or AGI-enabled organisation foreclosing not only the accelerants of proliferation, but also the potential for a rival project to emerge anywhere[11]. This is conceivable, but one has to ask on what timeframe these changes would happen, and the consequences if it takes longer than imagined.

Short of such an acute and decisive interruption of all of these dynamics, other shocks such as international conflict could have impacts in either direction.

Concluding

Intelligent engineering-minded people exist in all geographies and of all ideologies. Most lag the frontier of AI development only for want of compute capital and intent. Because compute continues to get cheaper, and the potential of AI comes more clearly into focus, both compute and intent become rapidly more widespread.
The open sharing of discoveries can further lower barriers and shorten proliferation timelines, but is not essential to this dynamic.

Given this, we have to ask what the consequences of this proliferation could be. Where they are concerning, we must consider in what ways these dynamics could be defused, or, likely failing that, how we will ready ourselves, on a short timeframe, for what follows.

We live in interesting times! There’s a lot we can do.

[1] For now I’ll use ‘powerful AI’ and ‘AGI’ (Artificial General Intelligence) interchangeably. The definitions have never been settled, and will likely never be settled, but I’m considering systems which are able to autonomously act, develop new tools and technology (given sufficient research resources), and in principle maintain or upgrade themselves if that was their goal.

[2] E.g. in Artificial Intelligence as a Positive and Negative Factor in Global Risk – Yudkowsky 2008 (though this phrase was coined earlier)

[3] Yudkowsky believes that sufficiently advanced AGI developed in a context like ours leads to everyone dying. I think he’s probably right… but it depends a lot on how you operationalise ‘sufficiently advanced’ and ‘context like ours’. That’s where all the action is!

[4] Sam Altman claims “We are now confident we know how to build AGI” and Dario Amodei predicts it “could come as early as 2026”. These CEOs of some of the best resourced and talented AI organisations will have privileged insight into the design space, while also having unusual psychology and possible conflicts of interest. Meanwhile, Turing Award and Nobel Prize winners Bengio and Hinton both think 2028 is possible. Crowd wisdom forecasts give wide uncertainty, but centre on the early 2030s. Experts rarely agree on exact anticipated details, but mostly agree on the outlines of the candidate design space.

[5] (as a smart computer scientist who has been roughly following AI since 2015, made it my graduate study in 2022, but who has never actively pursued frontier AI capability contributions)

[6] Think robots in factories, recordings and logs of computer use, autonomous vehicle logs, scientific lab measurements, CCTV and satellite readings, meeting recordings, social media activity logs, wearable recording devices, …

[7] Parties in Silicon Valley are allegedly a somewhat good source of technical AI gossip!

[8] The use of AI to assist AI development, or even to fully automate it, has long been discussed in the field of AI. The possibility of an ‘intelligence explosion’ or similar technological singularity is still debated decades after first being hypothesised. For the first time in 2025, some artificial intelligence researchers have claimed to achieve non-trivial acceleration in their work from AI assistance, and some companies have now set explicit targets to automate AI research before the decade is out. If this plays out, it might make AI-assisted development a dominant contributor to accelerating progress. I tend to think that compute for experiments and environments for learning are the more critical bottlenecks to progress.

[9] Historically, returns to concentrating more compute have been eventually diminishing (a typical pattern for tech products) once efficiencies from parallelism and brute force run dry. This supports a wide distribution of purchasers and diffusion of applications, because once the larger use cases hit diminishing returns, the willingness to buy of smaller players and applications exceeds that of the larger ones. This remains so at the frontier of AI, though we see some concentration with a small number of very large players buying out a majority of the most advanced generations of chips when they are first marketed.
If some new dynamic caused increasing or constant marginal returns to compute accumulation (who knows, perhaps exclusive access to AGI software) it might no longer be the case even on an open market that other buyers could afford compute.

[10] This is not predicated on the simple effect of increased supply, which would merely serve to erode margins. Rather, increased production predictably provides new technological insight, driving further efficiency: the origin of Moore’s law. This is a much stronger effect over time.

[11] Companies pursuing AGI do not have coherent strategies, but several have made references to ‘beating China’, and their intellectual heritage includes an assumption that the first AGI would be able to rapidly and decisively shut down competing projects. Sometimes the companies use this supposed dynamic as a justification for racing ahead while cutting corners on safety. This sounds a lot like ‘we plan to take over the world, but nicely’.